KEY TAKEAWAY

Choosing the right data transformation tool depends on your technical expertise, data volume, and cloud environment. Here is a quick breakdown by category:

- Automated and Managed: Hevo Data for zero-infrastructure ELT, Matillion for cloud warehouse-native pipelines, Fivetran for fully automated zero-maintenance data movement

- Enterprise: Informatica for AI-driven multi-cloud ETL, Talend for data quality and governance, AWS Glue for serverless ETL on AWS, Alteryx for no-code data preparation

- Open Source: dbt for SQL-first warehouse transformations, Airbyte for flexible data movement with 600+ connectors

- Cloud-Native: Google BigQuery Dataform for governed SQL transformations natively inside BigQuery

- Custom: Python for full control over complex transformation logic

When to choose: Use Hevo, Matillion, or Fivetran to eliminate pipeline overhead. Use dbt or Dataform for warehouse-native SQL. Use Alteryx or Informatica for enterprise no-code workflows. Use Python or Airbyte when flexibility matters more than ease of setup.

Raw data is rarely useful on its own. It arrives in different formats, from different systems, with inconsistent naming conventions, missing values, and duplicate records. Before your analytics team can trust it, someone has to clean it, reshape it, and make it consistent. That is what data transformation tools do.
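To make those three problems concrete, here is a minimal sketch of the kind of cleanup a transformation step performs. It uses only the Python standard library; the function name `clean_records`, the sample records, and the `"unknown"` fill value are illustrative assumptions, not part of any tool covered in this guide.

```python
def clean_records(records):
    """Normalize column names, fill missing values, and drop duplicate rows."""
    cleaned = []
    seen = set()
    for rec in records:
        # Inconsistent naming: "Customer ID" and "customer id" both become "customer_id".
        row = {key.strip().lower().replace(" ", "_"): value for key, value in rec.items()}
        # Missing values: treat None and empty strings as missing (hypothetical default).
        row = {key: ("unknown" if value in ("", None) else value) for key, value in row.items()}
        # Duplicate records: skip rows whose normalized content was already seen.
        fingerprint = tuple(sorted(row.items()))
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        cleaned.append(row)
    return cleaned


raw = [
    {"Customer ID": 1, "Country": "US"},
    {"customer id": 1, "country": "US"},  # same record, different naming
    {"Customer ID": 2, "Country": ""},    # missing country
]
cleaned = clean_records(raw)
print(cleaned)
```

The tools below automate these steps at scale and add scheduling, monitoring, and governance on top.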

The challenge is that no two teams have the same stack, the same skill set, or the same definition of “analysis-ready.” Some need a no-code platform that runs without engineering support. Others need a SQL-first framework that fits into an existing warehouse workflow. And some need an enterprise-grade solution with governance, lineage, and compliance built in.

This guide covers the 12 best data transformation tools in 2026, what each one does best, and how to pick the right one for your team.


A Quick Comparison: Top 12 Data Transformation Tools

Here’s a summary of the top 12 tools:

| Tool | Core Feature | Best For | Pricing |
| --- | --- | --- | --- |
| Hevo Data | No-code, fully managed ELT with fault-tolerant architecture and real-time sync | Zero-infrastructure ELT with predictable pricing | Free tier available. Starts at $239/month |
| Matillion | Cloud-native ELT with push-down architecture and low-code pipeline designer | Cloud warehouse-native transformation on Snowflake, Redshift, BigQuery | Consumption-based pricing |
| AWS Glue | Serverless Spark-based ETL with built-in data catalog and visual job designer | Teams building ETL within the AWS ecosystem | Pay-as-you-go |
| Talend | Low-code pipeline designer with built-in data quality, profiling, and lineage | Enterprise data quality and governance across hybrid environments | Custom pricing |
| Informatica | AI-powered CLAIRE engine for automated mapping, lineage, and quality | Large-scale enterprise ETL across multi-cloud environments | Quote-based |
| Alteryx | Drag-and-drop workflow designer with predictive and spatial analytics | No-code data preparation accessible to business analysts | Starts at $4,950/user/year |
| dbt | SQL-first warehouse-native transformations with testing and lineage | Analytics engineers building modular, version-controlled transformations | Free open-source. Cloud from $100/user/month |
| Airbyte | 600+ open-source connectors with self-hosted or cloud deployment | Flexible, open-source data movement with full infrastructure control | Free self-hosted. Cloud usage-based |
| Fivetran | Fully automated ELT with 700+ connectors and automatic schema drift handling | Zero-maintenance data movement into cloud warehouses | MAR-based pricing. Free trial available |
| Google BigQuery Dataform | SQLX templating with dependency resolution and native BigQuery integration | SQL-first governed transformations natively inside BigQuery | Free. Pay for BigQuery compute only |
| Python | Full programming flexibility with pandas, Polars, PySpark, and 400K+ packages | Custom, complex transformation logic with full control | Free and open-source |
| Apache NiFi | Visual flow-based pipeline design with real-time back-pressure and provenance | Real-time, flow-based data routing and lightweight transformation | Free and open-source |

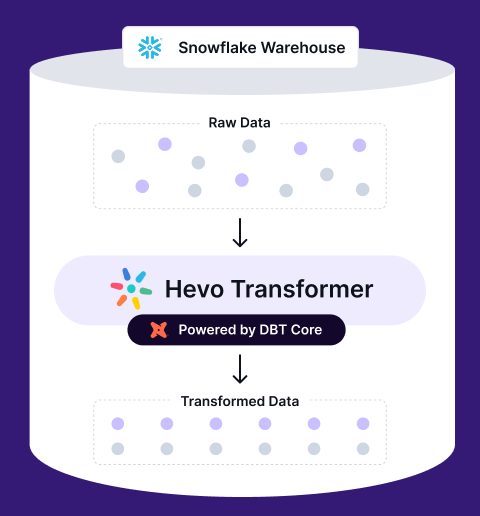

Transform, automate, and optimize your Snowflake data with Hevo Transformer—the ultimate no-code tool for dbt workflows. Experience lightning-fast integrations, real-time previews, and seamless version control with Git. Get results in minutes, not hours.

🚀 Instant Integration – Connect and start transforming in minutes

⚡ Effortless dbt Automation – Build, test, and deploy with a click

🔧 Git Version Control – Collaborate and track changes effortlessly

⏱️ Real-Time Data Previews – See and push updates in an instant

Top 12 Data Transformation Tools

1. Hevo Data

Hevo Data is a simple, reliable, and transparent ELT solution that turns raw data into analysis-ready tables without requiring code or engineering overhead. Its no-code pipelines automate end-to-end extraction, loading, and transformation, making it easy for teams to move data at scale without managing scripts or infrastructure.

Hevo’s reliability comes from its fault-tolerant architecture, automatic schema handling, and Smart Assist monitoring, which proactively detects issues before they impact analytics. Its transparent, event-based pricing and On-Demand Credits ensure predictable costs and uninterrupted pipeline runs.

Paired with Multi-Workspace and Multi-Region support and 150+ prebuilt connectors, Hevo stands out as an ideal contender for teams that want fast, trustworthy, and maintenance-free ELT.

Key Features

- Simple to Use: Hevo removes engineering bottlenecks with a no-code Visual Flow Builder that lets you drag, drop, and map data in real time. You can build and test logic gradually using Draft Pipelines, so workflows move to production faster without writing any code.

- Reliable: Hevo delivers accurate data using log-based CDC and automated schema handling that adapts as your sources change. With built-in deduplication and fault-tolerant retries, pipelines stay stable and self-healing even when schemas evolve.

- Transparent: You can stay in control with flexible replication that lets you include or skip tables and fields instantly. Hevo’s real-time dashboards and detailed logs show exactly how data moves, making your warehouse a trusted single source of truth.

- Predictable Pricing: Hevo charges only for records that are successfully loaded, with no surprise costs during backfills or edits. This clear, event-based pricing makes it easy to forecast spend as your data grows.

- Scalable: Built for scale, Hevo processes billions of records across 150+ sources with low latency and high reliability. Advanced transformations and a performance-first design ensure your pipelines stay fast as complexity increases.

Pricing

- Free Plan: Up to 1 million events per month.

- Usage-Based: Pay as you scale beyond the free quota.

- Flexible Scaling: Add workspaces, regions, or event capacity as needed.

Pros

- Friendly for non-engineers

- Setup takes less than an hour

- Fully managed, no-code ELT for fast data availability.

- Smart Assist and On-Demand Credits minimize downtime and keep pipelines reliable.

- Multi-Workspace and Multi-Region enable seamless scaling and organization.

- Embedded Python allows for advanced transformations when needed.

Cons

- Costs can rise at very high data volumes.

- Custom scripting is limited compared to fully developer-focused tools.

Case Study

Postman used Hevo to automate reporting across teams. They brought together data from product, marketing, and support without involving engineers for every pipeline tweak.

2. Matillion

Matillion’s Data Productivity Cloud is a cloud-native integration and transformation platform built for warehouses such as Snowflake, Amazon Redshift, Google BigQuery, and Databricks. Leveraging a push-down ELT architecture, it loads raw data first and then executes the SQL it generates, along with any custom Python code, directly on the warehouse engine.

A low-code drag-and-drop Designer with dozens of pre-built components, optional hand-written SQL or Python, and new AI-assisted transformer nodes allows data engineers and analysts to collaboratively build, orchestrate, and monitor pipelines that convert raw feeds into trusted, analysis-ready models at cloud scale.

Matillion is best suited for data engineers and technically-skilled data analysts who are responsible for building and maintaining structured, reliable data pipelines. It thrives in organizations that have already invested in a cloud data warehouse and need a robust tool to manage the transformation layer.
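The push-down ELT pattern described above can be illustrated with a short sketch. This is not Matillion's implementation: it uses Python's built-in `sqlite3` as a stand-in for a cloud warehouse, and the table names `raw_orders` and `orders_clean` are hypothetical. The point is only that raw data is loaded first and the transformation then runs as SQL inside the engine, rather than in the pipeline tool.

```python
import sqlite3

# Stand-in "warehouse": an in-memory SQLite database (assumption for illustration).
conn = sqlite3.connect(":memory:")

# Load step: land the raw data as-is.
conn.execute("CREATE TABLE raw_orders (order_id INTEGER, amount_cents INTEGER, status TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, 1250, "paid"), (2, 400, "refunded"), (3, 9900, "paid")],
)

# Transform step: the SQL executes inside the engine, so no rows leave the warehouse.
conn.execute(
    """
    CREATE TABLE orders_clean AS
    SELECT order_id, amount_cents / 100.0 AS amount_usd
    FROM raw_orders
    WHERE status = 'paid'
    """
)

rows = conn.execute(
    "SELECT order_id, amount_usd FROM orders_clean ORDER BY order_id"
).fetchall()
print(rows)
```

Because the heavy lifting happens in the warehouse, transformation throughput scales with the warehouse's compute rather than with the machine running the pipeline tool.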

Key Features

- Pre-built Connectors: Matillion offers a vast library of pre-built connectors for various data sources, simplifying the data ingestion process.

- ELT Capabilities: The platform supports Extract, Load, and Transform (ELT) processes, allowing data transformations to occur within the target data warehouse for improved performance.

- Scalability: Matillion’s architecture is designed to handle large volumes of data, ensuring scalability as data needs grow.

- User-Friendly Interface: With its intuitive drag-and-drop interface, users can design complex data workflows without extensive coding knowledge.

- Real-Time Data Processing: Matillion supports real-time data transformation and loading, ensuring your data is always up-to-date.

Pricing

Matillion offers a consumption-based pricing model, allowing users to pay for the resources they utilise. This flexible approach helps organisations manage costs effectively.

Pros

- Easy to use with a minimal learning curve.

- Strong integration with major cloud platforms.

- Scalable to handle large data volumes.

- Active community and responsive support.

Cons

- Some users have reported challenges with error handling and debugging.

- The platform may require additional customisation for complex transformations.


Enterprise Transformation Tools (COTS)

- These tools are powerful software designed for big businesses.

- They are built to perform extensive data transformations, usually to centralize data and store it in a data warehouse.

- These tools require minimal setup and configuration, and they help you to quickly turn raw data into valuable insights without a lot of extra work.

3. Fivetran

Fivetran is a fully managed ELT platform that automates data movement from source systems into cloud data warehouses. It is best suited for data engineering teams that want reliable, zero-maintenance pipelines without building or managing custom connectors.

What makes Fivetran stand out is its automation-first architecture. Once set up, it handles schema changes, incremental syncs, and retries automatically, so teams focus on transformation and analytics instead of pipeline upkeep.

Key Features

- 700+ Pre-Built Connectors: Replicate data from databases, SaaS apps, cloud storage, and event streams without building custom integration code

- Automatic Schema Drift Handling: Detects and adapts to source schema changes automatically, keeping pipelines running without manual fixes

- Incremental Sync with CDC: Change data capture ensures only new and updated records are synced, reducing load times and compute costs

- Built-in dbt Integration: Trigger dbt transformation runs automatically after each sync completes for a fully automated ELT workflow
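To show why incremental syncing reduces load, here is a simplified sketch. It uses a cursor (high-water mark) on an `updated_at` column, which is a simpler technique than the log-based change data capture a managed platform would typically use, and it is not Fivetran's implementation. The function `incremental_sync` and the sample rows are hypothetical.

```python
def incremental_sync(source_rows, destination, last_cursor):
    """Upsert only rows changed since last_cursor; return (new_cursor, rows_synced)."""
    new_cursor = last_cursor
    rows_synced = 0
    for row in source_rows:
        if row["updated_at"] > last_cursor:
            destination[row["id"]] = dict(row)  # upsert keyed on the primary key
            new_cursor = max(new_cursor, row["updated_at"])
            rows_synced += 1
    return new_cursor, rows_synced


source = [
    {"id": 1, "name": "Ada", "updated_at": 100},
    {"id": 2, "name": "Lin", "updated_at": 105},
]
destination = {}

# First run: everything is new, so both rows are copied.
cursor, first_count = incremental_sync(source, destination, last_cursor=0)

# Between runs, one row is edited and one row is added at the source.
source[0] = {"id": 1, "name": "Ada L.", "updated_at": 110}
source.append({"id": 3, "name": "Sam", "updated_at": 111})

# Second run: only the two changed rows are processed; row 2 is skipped.
cursor, second_count = incremental_sync(source, destination, last_cursor=cursor)
print(cursor, first_count, second_count)
```

A cursor like this cannot see hard deletes at the source, which is one reason production systems tend to read the database's change log instead.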

Pricing

- Free trial available for 14 days

- Starter: Based on Monthly Active Rows (MAR)

- Enterprise: Custom pricing with advanced security and governance features

Pros

- Zero-maintenance pipelines that self-heal from schema changes

- 700+ connectors cover virtually every modern data source

- Enterprise-grade security including SOC 2, GDPR, and HIPAA compliance

Cons

- Less flexibility for highly custom pipeline logic

- MAR-based pricing can become expensive at high data volumes

- Limited built-in transformation capabilities; relies on dbt or warehouse tools

4. AWS Glue

AWS Glue is Amazon’s fully managed, serverless data-integration service that discovers, prepares, and transforms petabyte-scale data entirely inside the AWS cloud. It provisions Apache Spark clusters on demand, executes the PySpark or Scala code you author, and then writes clean, analysis-ready results back to destinations such as Amazon S3 or Redshift, where services like Athena can query them, without any infrastructure to manage.

The service revolves around the Glue Data Catalog: crawlers scan sources such as S3, JDBC stores, and streaming feeds to infer schemas and store them as searchable metadata. Recent additions include the drag-and-drop Glue Studio interface and Amazon Q Data Integration, which turns natural-language prompts into runnable Spark jobs.

AWS Glue is best suited for data engineers and developers who are proficient in scripting with PySpark or Scala and are building solutions within the AWS ecosystem. It is an excellent choice for organizations that need to run scalable, event-driven, or scheduled transformation jobs without the overhead of managing servers.

Key Features

- Serverless Autoscaling ETL Engine: Glue automatically spins Spark workers up and down during job execution, so you only pay for the compute you use and never have to manage capacity.

- Central Data Catalog & Crawlers: Built-in crawlers scan S3 buckets and other data stores to infer schemas and populate a unified catalog, ensuring consistent metadata across all jobs.

- Glue Studio Visual Designer: A low-code, drag-and-drop interface generates PySpark or Scala automatically, enabling users to build complex transformations without deep Spark expertise.

- Amazon Q Data Integration Assistant: Describe your intent in plain English and Glue generates ready-to-run Spark code, helping you develop and deploy faster.

- Job Scheduling, Triggers & Monitoring: Native triggers can launch jobs on demand, on a schedule, or in response to upstream events, while CloudWatch provides detailed performance metrics and alerts.
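The crawler idea, scanning records to infer a schema, can be sketched in a few lines. This is a conceptual illustration only and does not use the AWS Glue API; the functions `infer_type` and `infer_schema`, the type names, and the widening rules are assumptions chosen for the example.

```python
def infer_type(value):
    """Map a Python value to a coarse column type (bool is checked before int)."""
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, int):
        return "bigint"
    if isinstance(value, float):
        return "double"
    return "string"


def infer_schema(records):
    """Scan sample records and return {column: type}, widening on conflicts."""
    schema = {}
    for record in records:
        for column, value in record.items():
            if value is None:
                schema.setdefault(column, None)  # column exists, type still unknown
                continue
            observed = infer_type(value)
            previous = schema.get(column)
            if previous is None:
                schema[column] = observed
            elif previous != observed:
                # Integers and floats widen to double; any other conflict widens to string.
                numeric = {previous, observed} == {"bigint", "double"}
                schema[column] = "double" if numeric else "string"
    # Columns that only ever held nulls default to string.
    return {column: (col_type or "string") for column, col_type in schema.items()}


sample = [
    {"id": 1, "price": 9.5, "active": True},
    {"id": 2, "price": 10, "active": False, "note": None},
    {"id": "3a", "price": 11.0},
]
schema = infer_schema(sample)
print(schema)
```

Storing the inferred schema centrally is what lets every downstream job agree on column names and types.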

Pricing

- Pay-as-you-go pricing based on usage

- No upfront costs or long-term commitments

Pros

- Fully managed, serverless infrastructure

- Easy integration with other AWS services

- Automated data cataloguing and schema handling

Cons

- Limited flexibility outside of the AWS environment

- Costly if workflows are poorly optimised

- Debugging jobs can be difficult due to abstracted infrastructure


5. Talend

Talend, now branded Talend Data Fabric, is a cloud-independent data integration platform that weaves data quality and governance directly into every transformation job. Talend’s primary development environment is a low-code, graphical interface where users design transformation workflows by connecting pre-built components for tasks like data cleansing, enrichment, and complex mapping.

A low-code Studio and browser-based Pipeline Designer let teams ingest, cleanse, map, and enrich data across on-prem, multi-cloud, and hybrid environments, then push native SQL, Spark, or ELT logic down to the target engine for scale.

Enterprises choose Talend when they must prove that every column feeding analytics or AI is profiled, lineage-tracked, and compliant whether the pipeline runs in nightly batch mode or as a real-time stream.

Key Features

- Low-code Studio & Pipeline Designer: Drag-and-drop components such as joins, filters, Spark, and Python compile into warehouse-native SQL or Spark jobs, reducing pipeline build time for both ETL and ELT.

- Built-in Data Quality & Profiling: Automated rules, standardization, and the Talend Trust Score validate and score every dataset as it flows through the pipeline.

- Central Metadata Catalog & Lineage: A unified catalog indexes assets and captures column-level lineage, enabling auditors to trace any field back to its source.

- Cloud-agnostic & Hybrid Deployment: Run the same pipeline across AWS, Azure, GCP, Databricks, Spark clusters, or on-premises without rewriting code, avoiding vendor lock-in.

- Talend Trust Score: A built-in reliability metric appears alongside every dataset, helping stakeholders assess data readiness at a glance.

Pricing

Talend offers a range of pricing options, including:

- Talend Cloud: Subscription-based pricing tailored to organisational needs.

- Enterprise Edition: Custom pricing based on specific requirements and scale.

Pros

- Comprehensive data integration and quality tools.

- Scalable for large enterprise environments.

- Strong community and support resources.

Cons

- It can be complex for new users or small teams without dedicated data engineering support.

- Cost structure may be unclear due to custom pricing.

- Performance tuning requires expertise, especially for large datasets.

- The interface and UI could be more intuitive for frequent users.


6. Informatica

Informatica’s Intelligent Data Management Cloud (IDMC) is an enterprise-grade platform that ingests, standardizes, and reshapes massive data volumes across hybrid and multi-cloud environments, then pushes the work down to warehouse or Spark engines so transformation happens close to the data.

At the core is CLAIRE, an AI engine that studies unified metadata to suggest mappings, tune performance, and automate repetitive tasks, keeping pipelines efficient even at the petabyte scale. A built-in catalog, end-to-end lineage, data-quality profiling, and granular security controls wrap every job in governance, giving large organizations the auditability and policy enforcement they need for regulated, mission-critical workloads.

Informatica is best suited for large enterprises and organizations with mature data practices and stringent security and compliance requirements. Its primary users are typically IT departments, data engineering teams, and data governance specialists who are responsible for building and maintaining mission-critical, high-volume data pipelines.

Key Features

- Low-code Cloud Studio: Drag-and-drop components compile into native SQL, Spark, or ELT code, speeding up pipeline development without compromising performance.

- CLAIRE AI Recommendations: Machine learning–powered suggestions help with field mappings, transformation logic, and resource optimization to accelerate and standardize development.

- Intelligent Data Catalog & Lineage: Automated data discovery, profiling, and column-level lineage allow teams to quickly find data and trace every change for audit and compliance needs.

- Built-in Data Quality & Privacy: Real-time validation rules, data masking, and policy enforcement ensure clean, compliant data throughout the pipeline.

- Hybrid & Multi-cloud Execution: Deploy the same pipeline across AWS, Azure, GCP, on-prem Hadoop, or Spark environments without code changes, avoiding vendor lock-in.

- Role-based Governance & Version Control: Fine-grained access controls and Git integration support secure, collaborative development at scale.

Pricing

- Quote-based pricing for Informatica Intelligent Data Management Cloud

Pros

- Comprehensive platform for large-scale needs

- Strong focus on data governance

- Automation with AI-based insights

Cons

- Expensive for mid-size teams

- Requires time and training to fully utilise


7. Alteryx

Alteryx Designer is a self-service data analytics platform that lets analysts build complex data preparation, blending, and transformation workflows through a drag-and-drop interface without writing code. It is best suited for business analysts and data teams who need to move fast without relying on engineering support.

What sets Alteryx apart is its ability to handle the full analytics workflow in one place, from data preparation and transformation to predictive and spatial analytics, all without switching tools or writing a single line of code.

Key Features

- Drag-and-Drop Workflow Designer: Build transformation pipelines visually using 280+ pre-built tools for filtering, joining, aggregating, and enriching data

- Predictive and Spatial Analytics: Run regression models, clustering, and spatial analysis directly within the same workflow

- Auto Insights: AI automatically surfaces patterns, anomalies, and trends in your data, reducing time spent on manual exploration

- Broad Connectivity: Connect natively to databases, cloud platforms, spreadsheets, and SaaS applications through pre-built connectors

Pricing

- Alteryx Designer Cloud: Starts at $4,950 per user per year

- Enterprise: Custom pricing with advanced governance and collaboration features

- Free trial available

Pros

- No coding required, accessible to business analysts and non-technical users

- Handles complex blending, transformation, and analytics in a single workflow

- Strong community with an extensive library of pre-built workflows

Cons

- Cloud version is less mature compared to the desktop Designer

- Expensive compared to open-source alternatives, especially at scale

- Performance can slow down with very large datasets on local machines

Open Source Transformation Tools

- These tools are free, customizable software for transforming data.

- They let you modify and improve the tool’s underlying source code to fit your needs.

- With community support, you can find solutions and add new features.

- These tools help you manage data without the cost of commercial software.

8. dbt

dbt (Data Build Tool) is an open-source transformation framework and accompanying cloud platform that focuses exclusively on the “T” in ELT. It enables teams to write modular SQL models that run natively inside modern cloud data warehouses such as Snowflake, BigQuery, Redshift, Databricks, and others.

By treating analytics code like software, dbt introduces version control, automated testing, documentation, and a Directed Acyclic Graph (DAG) to manage dependencies. This enables analytics engineers, data analysts, and data scientists to collaborate more safely and deploy changes with confidence.

Teams choose dbt because it keeps transformations close to the data while layering on advanced capabilities. These include a governed Semantic Layer for consistent metrics, cross-platform dbt Mesh for multi-project governance, and AI-powered tools like Canvas and Explorer. The result is a unified, SQL-first workflow that blends engineering rigor with self-service analytics.

Key Features

- SQL + Jinja Templating: Write reusable, parameterized SELECT statements that dbt compiles into warehouse-specific SQL at runtime.

- Built-in Tests and Documentation: Define tests and documentation in code. dbt runs these tests on every build and automatically generates a searchable documentation site.

- Directed Acyclic Graph (Lineage Views): dbt maps model dependencies into an interactive DAG, helping teams trace data flow and assess impact before deployment.

- Semantic Layer Powered by MetricFlow: Define business metrics centrally and query them consistently across BI tools like Power BI and Looker.

- dbt Mesh for Multi-Project Governance: Coordinate, test, and share models across teams and data platforms while maintaining team autonomy.

- Visual Canvas & Explorer: Use drag-and-drop model building and interactive lineage exploration to lower the barrier for analysts and speed up debugging.
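The dependency graph idea can be sketched with the standard library. The example below is not dbt's parser (dbt renders Jinja properly); it uses a regular expression to pull `ref()` calls out of hypothetical model SQL and `graphlib.TopologicalSorter` (Python 3.9+) to compute a valid build order. The model names and SQL are made up for illustration.

```python
import re
from graphlib import TopologicalSorter

# Matches {{ ref('model_name') }} with single or double quotes.
REF_PATTERN = re.compile(r"\{\{\s*ref\(\s*['\"]([^'\"]+)['\"]\s*\)\s*\}\}")

# Hypothetical models: name -> SQL text.
models = {
    "daily_revenue": "SELECT order_date, SUM(amount) FROM {{ ref('orders_enriched') }} GROUP BY 1",
    "orders_enriched": (
        "SELECT o.*, c.region FROM {{ ref('stg_orders') }} o "
        "JOIN {{ ref('stg_customers') }} c ON o.customer_id = c.customer_id"
    ),
    "stg_orders": "SELECT * FROM raw.orders",
    "stg_customers": "SELECT * FROM raw.customers",
}

# Each model maps to the set of models it depends on.
graph = {name: set(REF_PATTERN.findall(sql)) for name, sql in models.items()}

# static_order() yields every model after all of its dependencies.
run_order = list(TopologicalSorter(graph).static_order())
print(run_order)
```

The same graph answers impact questions: anything that appears after a model in a dependency chain is potentially affected when that model changes.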

Pricing

- Free open-source version

- Cloud starts at $100 per developer/month

Pros

- Clean, testable transformations

- Encourages best practices

- Supported by a strong community

Cons

- Doesn’t help with data extraction or loading

- Takes time to learn for new users


9. Apache NiFi

Apache NiFi is an open-source, flow-based automation platform that lets you design, run, and tweak streaming pipelines in real time. Its drag-and-drop canvas, 400-plus pre-built “Processors,” and live queue controls mean you can enrich, split, route, or filter data while it’s still in motion.

What makes NiFi distinct is its interactive, real-time control; users can inspect data queues between processors and adjust logic on the fly. Furthermore, its built-in data provenance automatically records a detailed history of every piece of data, tracking every transformation and routing decision, which is invaluable for debugging and auditing.

Apache NiFi is best suited for data engineers and operations teams who need to manage and transform high-volume, continuous streams of data. It excels in scenarios like ingesting log data and standardizing it before storage, routing IoT sensor data based on its content, or performing lightweight data cleansing as part of a larger data distribution network.

Key Features

- Visual Flow Canvas & 400+ Processors: Design pipelines visually by wiring together processors like SplitJSON, ExecuteScript, PutKafka, or DetectImageLabels, no coding needed.

- Real-Time Back-Pressure & Queue Inspection: Set thresholds on object count or size, monitor queues in real time, and dynamically throttle or reroute data flow as needed.

- End-to-End Data Provenance & Replay: Track every FlowFile’s journey through the system and replay any step for auditing, debugging, or recovery.

- Python Processors & Jupyter-Style Scripting (v2.0): Embed Python code, including libraries like Pandas or Torch, directly within processors for flexible, script-based transformations.

- Cluster, Stateless & Kubernetes Operator Support: Scale from a single machine to a distributed cluster, or deploy stateless flows as microservices in Kubernetes.

- Enterprise-Grade Security: Ensure secure operations with TLS encryption, fine-grained role-based access control, and integration with OIDC, SAML, LDAP, and flow-level policies.
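Back-pressure on an object-count threshold can be illustrated with a small sketch. This is not NiFi code and does not use NiFi's API; the `Connection` class and its method names are hypothetical. In NiFi the upstream processor simply stops being scheduled while the threshold is exceeded, whereas this simplified version signals the same condition by refusing new items.

```python
from collections import deque


class Connection:
    """A bounded queue between two processing steps (illustrative only)."""

    def __init__(self, object_threshold):
        self.object_threshold = object_threshold
        self.queue = deque()

    def back_pressure_engaged(self):
        # Back-pressure applies once the queued object count reaches the threshold.
        return len(self.queue) >= self.object_threshold

    def offer(self, flowfile):
        """Enqueue if allowed; return False so the upstream step can pause and retry."""
        if self.back_pressure_engaged():
            return False
        self.queue.append(flowfile)
        return True

    def poll(self):
        """Hand the oldest item to the downstream step, or None if the queue is empty."""
        return self.queue.popleft() if self.queue else None


conn = Connection(object_threshold=3)

# The upstream step tries to push five events; only three fit before back-pressure engages.
accepted = [conn.offer(f"event-{i}") for i in range(5)]

# Once the downstream step consumes an item, the upstream step can resume.
first_out = conn.poll()
resumed = conn.offer("event-5")
print(accepted, first_out, resumed)
```

Nothing is dropped in this model: rejected items stay with the upstream step until the queue drains, which is what keeps a slow consumer from being overwhelmed.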

Pricing

- Core project: Apache License 2.0; free to download and run.

- Commercial options: Cloudera Flow Management (CFM) wraps NiFi with support and cloud billing (contact sales for CCU-based pricing).

Pros

- Intuitive, visual design accelerates build time.

- Live edits, back-pressure, and provenance give ops-grade observability.

- Strong security stack and deployment flexibility (bare metal, VM, K8s, serverless).

Cons

- Memory tuning is essential on very large, bursty streams.

- Heavy analytics often require an external engine (Spark, Flink) or custom script.

- Debugging complex nested Process Groups can be daunting for newcomers.


10. Airbyte

Airbyte is an open-source data integration platform built to automate the movement of data between systems. It is best known for its extensive library of pre-built connectors that simplify extracting data from hundreds of sources and loading it into destinations like data warehouses and databases.

Its standout feature is its open-source, connector-centric architecture. While it offers a vast catalog of pre-built connectors, anyone can build new ones using its Connector Development Kit (CDK) or even a no-code builder.

Airbyte is designed for teams that need to reliably replicate data from various sources like APIs, databases, and SaaS applications into a centralized repository.

Key Features

- Large Library of Open-Source Connectors: A marketplace of over 600 pre-built, community-maintained connectors allows you to ingest data from SaaS applications, databases, files, and event streams without writing custom integration code.

- Seamless Integration with dbt: Built-in hooks can trigger dbt Core or dbt Cloud runs immediately after a sync completes, enabling a fully automated flow from raw data ingestion to transformation.

- Cloud and Self-Hosted Deployment Options: Choose between the fully managed Airbyte Cloud or deploy the open-source version on your own Kubernetes cluster, virtual machine, or local environment for complete control and data sovereignty.

- Self-Service Debugging and Connector Modification: The Python-based Connector Development Kit, detailed logging, and one-click reset tools empower engineers to build, test, and modify connectors without relying on vendor updates.

- Automated Schema Drift Detection and Handling: Airbyte checks for schema changes before each sync, alerts on added or removed columns, and guides users through updating mappings to keep data pipelines stable and reliable.
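Schema drift detection boils down to comparing the schema seen on the last sync with the one discovered now. The sketch below shows that comparison in plain Python. It is not Airbyte's implementation or API; the function `detect_schema_drift` and the example schemas are hypothetical.

```python
def detect_schema_drift(previous, current):
    """Compare two {column: type} mappings and report what changed."""
    previous_cols, current_cols = set(previous), set(current)
    return {
        "added": sorted(current_cols - previous_cols),
        "removed": sorted(previous_cols - current_cols),
        "type_changed": sorted(
            col for col in previous_cols & current_cols if previous[col] != current[col]
        ),
    }


last_sync_schema = {"id": "integer", "email": "string", "signup_date": "string"}
discovered_schema = {"id": "integer", "email": "string", "signup_date": "date", "plan": "string"}

drift = detect_schema_drift(last_sync_schema, discovered_schema)
has_drift = any(drift.values())
print(drift, has_drift)
```

Running a check like this before each sync lets a pipeline alert on or adapt to changes instead of failing partway through a load.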

Pricing

- The open-source version is free to self-host

- Airbyte Cloud uses a credit-based, pay-as-you-go model. Pricing scales with the volume of data synchronized, with one credit ($2.50) covering approximately 250 MB of database data or ~167,000 API rows.
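As a rough worked example, the credit figures quoted above can be turned into a back-of-the-envelope estimate. The constants come straight from the numbers in this section and are approximations; the function name `estimate_monthly_cost` and the sample volumes are hypothetical, and actual billing may differ.

```python
CREDIT_PRICE_USD = 2.50          # price of one credit, as quoted above
DATABASE_MB_PER_CREDIT = 250     # approximate database volume covered by one credit
API_ROWS_PER_CREDIT = 167_000    # approximate API rows covered by one credit


def estimate_monthly_cost(database_mb=0, api_rows=0):
    """Estimate monthly spend in USD from synced database volume and API rows."""
    credits = database_mb / DATABASE_MB_PER_CREDIT + api_rows / API_ROWS_PER_CREDIT
    return round(credits * CREDIT_PRICE_USD, 2)


# Example: 10 GB (10,000 MB) of database data plus 1 million API rows in a month.
estimate = estimate_monthly_cost(database_mb=10_000, api_rows=1_000_000)
print(estimate)
```

Here the database portion is 10,000 / 250 = 40 credits and the API portion is roughly 6 credits, so most of the estimate comes from database volume.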

Pros

- Vast and growing library of connectors saves significant development time

- Open-source model allows for customization and avoids vendor lock-in

- Strong integration with dbt for handling the “T” in ELT

- Simple, usage-based pricing for the cloud version

Cons

- Does not perform data transformation natively; requires a separate tool

- Self-hosting requires infrastructure management and maintenance

- Connector quality and maturity can vary, especially for less common sources

- Can be resource-intensive when running many concurrent syncs


Custom Transformation Solutions

- You can build these solutions from scratch for your business’s specific use cases.

- They let you work with developers to create tools that fit your data processes.

- These solutions offer flexibility and precise functionality, effectively helping you handle unique data challenges.

11. Python

Python is a general-purpose, high-level language whose clean syntax and enormous package ecosystem turn it into the Swiss-army knife of data work. Libraries such as pandas, Polars, PyArrow, Dask, scikit-learn, and PySpark let you code transforms that GUI ETL tools struggle with: outlier imputation, fuzzy matching, ML-driven feature engineering, you name it.

Recent releases push performance forward without changing the language’s gentle learning curve: Python 3.13 added an experimental free-threaded (no-GIL) build and a brand-new colour REPL, and pandas 3.0 brings Arrow-backed string columns and copy-on-write by default.

That blend of flexibility and power makes Python the go-to for data scientists, analytics engineers, and platform teams who need full control over complex, evolving pipelines.

Key Features

- Rich Library Ecosystem: Access powerful tools like pandas for tabular wrangling, NumPy for vector math, scikit-learn for machine learning, PyArrow for zero-copy columnar data, and Polars for blazing-fast, Rust-backed DataFrames, plus 400K+ packages on PyPI.

- DataFrame Semantics: Use pandas and Polars DataFrame APIs to chain filters, joins, and window operations in clear, readable one-liners. With pandas 3.0, Arrow memory sharing brings significant performance gains.

- First-Class Cloud & Database Hooks: Integrate seamlessly with SQLAlchemy, DuckDB, psycopg, boto3, and cloud SDKs for GCP and Azure, enabling in-place transformations and cross-cloud orchestration from a single script.

- Unbounded Extensibility: Write custom UDFs for Spark, Snowflake, BigQuery, and Postgres. Embed high-performance C, C++, or Rust code using Cython or PyO3 when native speed is critical.
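Two of the transforms mentioned earlier, fuzzy matching and outlier imputation, fit in a short sketch. To stay dependency-free it uses only the standard library (`difflib` and `statistics`) rather than pandas or Polars. The canonical city list, the 0.8 similarity cutoff, and the median-absolute-deviation rule are illustrative assumptions, not recommendations.

```python
import difflib
import statistics

CANONICAL_CITIES = ["New York", "San Francisco", "Chicago"]  # hypothetical reference list


def normalize_city(raw, cutoff=0.8):
    """Map a misspelled city to its closest canonical name, or return it unchanged."""
    candidate = raw.strip().title()
    matches = difflib.get_close_matches(candidate, CANONICAL_CITIES, n=1, cutoff=cutoff)
    return matches[0] if matches else raw


def impute_outliers(values, k=3.0):
    """Replace values more than k median absolute deviations from the median."""
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        return list(values)  # no spread to measure against, so leave values untouched
    return [median if abs(v - median) > k * mad else v for v in values]


cities = [normalize_city(c) for c in ["san fransisco", "Chicgo", "Boston"]]
order_values = impute_outliers([10, 12, 11, 13, 500])
print(cities, order_values)
```

In a real pipeline the same logic would usually be applied column-wise through a DataFrame library, but the rules themselves stay this small.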

Pricing

- Language & libraries: Completely free and open source.

- Runtime costs: Only the compute you execute on local CPU/GPU, on-prem clusters, or cloud instances (e.g., AWS EC2, Azure Batch, GCP Cloud Run).

Pros

- Unlimited flexibility for bespoke logic and niche file formats.

- Vast ecosystem spanning ETL, ML, viz, APIs, orchestration.

- Huge talent pool; easy to hire and ramp up.

- Works everywhere from Jupyter notebooks to Airflow DAGs to Lambda functions.

- Massive community and learning resources

Cons

- Steeper learning curve than drag-and-drop tools; must write code.

- Single-machine pandas workloads can hit RAM ceilings; you may need Polars, DuckDB, Dask, or Spark for big data.

- No native visual pipeline monitor; you rely on notebooks, logs, or an orchestrator (Airflow, Dagster, Prefect).

- Dependency management (virtualenvs, Conda, poetry) can trip up large teams if not standardised.

12. Google BigQuery Dataform

Google BigQuery Dataform is a fully managed, warehouse-native transformation service built directly into Google Cloud that brings software engineering best practices to SQL workflows inside BigQuery. It is best suited for data teams already using BigQuery who want a structured, governed approach to managing transformation logic.

What makes Dataform stand out is its tight integration with the Google Cloud ecosystem. It compiles SQLX into BigQuery-native SQL, manages dependencies automatically, and connects directly to GitHub and GitLab for version-controlled pipeline development.

Key Features

- SQLX Templating Language: Extend standard SQL with reusable includes, declarations, and assertions for cleaner, modular transformation logic

- Automatic Dependency Resolution: Builds and executes tables in the correct order based on defined relationships, eliminating manual sequencing

- Built-in Data Quality Assertions: Validate outputs at every step so that bad data is caught before it reaches downstream tables

- Native Version Control Integration: Connect directly to GitHub, GitLab, or Cloud Source Repositories for fully tracked pipeline development
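The idea behind automatic dependency resolution can be sketched in a few lines of Python. This is not Dataform's implementation or its API; it is an illustration of the general technique (a topological sort over a dependency graph) using the standard-library `graphlib` module. All table names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical table names. Each key depends on the tables in its set.
dependencies = {
    "stg_orders": {"raw_orders"},
    "stg_customers": {"raw_customers"},
    "fct_revenue": {"stg_orders", "stg_customers"},
    "rpt_revenue_by_region": {"fct_revenue"},
}


def build_order(deps):
    """Return table names ordered so every table comes after its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = build_order(dependencies)
print(order)
```

Because the order is derived from the declared relationships, adding a new table only requires stating what it depends on. A circular dependency raises `graphlib.CycleError` instead of producing a wrong order.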

Pricing

- Dataform itself is free to use

- Costs are based on BigQuery query execution and storage only

- No additional licensing or infrastructure fees

Pros

- Fully managed with no infrastructure to set up or maintain

- Native BigQuery integration delivers optimal query performance and cost efficiency

- Version control and CI/CD integration are built in

Cons

- Limited support for non-SQL transformations or Python-based logic

- Tightly coupled to BigQuery, so it is not suitable for multi-warehouse environments

- Less mature than dbt with a smaller community and ecosystem

How Do You Choose the Right Data Transformation Tool?

1. Integration Capabilities

Ensure the tool seamlessly integrates with your existing data sources, destinations, and infrastructure. Compatibility with various databases, cloud services, and APIs is essential for smooth data flow.

2. Scalability and Performance

Assess whether the tool can handle your current data volume and scale as your data grows. Performance metrics, such as processing speed and resource utilisation, are crucial for large datasets.

3. User Interface and Usability

A user-friendly interface can significantly reduce the learning curve. Tools offering visual workflows or drag-and-drop features can be beneficial for teams with limited coding expertise.

4. Data Quality and Validation Features

Robust data validation, cleansing, and profiling features help maintain data integrity and reliability throughout the transformation process.

5. Security and Compliance

Ensure the tool adheres to security standards and compliance regulations relevant to your industry, such as GDPR or HIPAA. Features like data encryption and access controls are vital.

6. Cost and Licensing

Evaluate the total cost of ownership, including licensing fees, maintenance, and potential scalability costs. Open-source tools may offer cost advantages, but consider the support and community activity.

Check out our blog on data transformation best practices to learn how to standardize and optimize your data for seamless integration and analysis.

How Do Data Transformation Tools Work?

Data transformation tools typically follow a structured process to convert raw data into a usable format:

1. Data Extraction

The tool connects to various data sources (databases, APIs, flat files) to retrieve raw data for processing.

2. Data Profiling and Discovery

The tool analyses the data to understand its structure, quality, and content. This step helps identify anomalies, missing values, and data types.

3. Data Mapping

The tool defines how data fields from the source correspond to those in the destination. This involves setting transformation rules and relationships between datasets.

4. Data Transformation

The tool applies operations such as filtering, aggregating, joining, and converting data types so the data matches the desired format and structure.

5. Data Validation and Quality Checks

The tool applies rules to ensure data accuracy and consistency. This may include checking for duplicates, validating data ranges, and ensuring referential integrity.

6. Data Loading

The transformed data is then loaded into the target system, such as a data warehouse, for further analysis or reporting.

7. Monitoring and Logging

The tool continuously monitors the transformation process to detect errors and performance issues, and it keeps logs for auditing purposes.
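The seven steps above can be sketched end to end with nothing but the Python standard library. This is a simplified illustration rather than how any particular tool works: the CSV content, field names, and validation rules are hypothetical, and an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import logging
import sqlite3

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("pipeline")

# Hypothetical raw input, invented for illustration.
RAW_CSV = """Order ID,Cust,Amount
1,alice,120.50
2,bob,
2,bob,
3,carol,75
"""

# Step 3: mapping from source field names to destination column names.
FIELD_MAP = {"Order ID": "order_id", "Cust": "customer", "Amount": "amount"}


def extract(text):
    """Step 1: read raw rows from a source (here an in-memory CSV)."""
    return list(csv.DictReader(io.StringIO(text)))


def profile(rows):
    """Step 2: count missing values per source field."""
    return {field: sum(1 for r in rows if not r[field]) for field in FIELD_MAP}


def transform(rows):
    """Steps 3 and 4: rename fields, cast types, drop rows with no amount."""
    out = []
    for r in rows:
        if not r["Amount"]:
            continue
        mapped = {dest: r[src] for src, dest in FIELD_MAP.items()}
        mapped["order_id"] = int(mapped["order_id"])
        mapped["amount"] = float(mapped["amount"])
        out.append(mapped)
    return out


def validate(rows):
    """Step 5: reject duplicate keys and negative amounts."""
    ids = [r["order_id"] for r in rows]
    if len(ids) != len(set(ids)):
        raise ValueError("duplicate order_id")
    if any(r["amount"] < 0 for r in rows):
        raise ValueError("negative amount")


def load(rows, conn):
    """Step 6: write the cleaned rows to the target table."""
    conn.execute(
        "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany(
        "INSERT INTO orders VALUES (:order_id, :customer, :amount)", rows
    )
    conn.commit()


def run(conn):
    """Run all steps in order, logging progress along the way (step 7)."""
    rows = extract(RAW_CSV)
    log.info("missing values per field: %s", profile(rows))
    clean = transform(rows)
    validate(clean)
    load(clean, conn)
    log.info("loaded %d rows", len(clean))
    return clean


conn = sqlite3.connect(":memory:")
clean = run(conn)
```

Keeping each step in its own function means a failed validation stops the run before anything is loaded, which is the behaviour most managed tools provide out of the box.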

The Right Transformation Tool Changes Everything

Bad data does not just slow down your analytics team. It erodes trust in dashboards, delays decisions, and wastes engineering hours on fixes that should never have been needed in the first place.

The tools in this guide solve that problem, but they do it in very different ways. If you need enterprise governance and multi-cloud support, Informatica and Talend are built for that. If you need SQL-first warehouse transformations, dbt and BigQuery Dataform are the natural fit. If you need open-source flexibility, Airbyte and Python give you full control.

But if your team’s core need is reliable, real-time data that is always clean, always consistent, and always ready for analysis without managing pipelines, infrastructure, or transformation scripts, Hevo is the simplest path to get there.

Try Hevo free for 14 days and see how fast clean data can reach your warehouse.

Conclusion

If you’ve ever opened a raw dataset and felt a little overwhelmed, that’s exactly where transformation tools come in. They turn all that chaos into clean, structured tables your team can actually work with. And depending on your setup and skill levels, the “right” tool will look different.

Some teams love the simplicity of no-code tools like Hevo because real-time updates come without extra engineering effort. Others stick to Python, dbt, or enterprise platforms like Informatica or Talend when things get heavier or more regulated.

In the end, picking the right tool doesn’t just make your data cleaner. It makes your entire business faster.

FAQs

What Are Data Transformation Tools?

Data transformation tools are software applications that convert raw, messy data into clean, structured, analysis-ready formats. They handle tasks like filtering, joining, aggregating, and reformatting data as part of an ETL or ELT workflow, so your analytics teams have accurate, consistent data to work with.

What is the difference between ETL and ELT in data transformation?

ETL (Extract, Transform, Load) transforms data before loading it into the warehouse, while ELT (Extract, Load, Transform) loads raw data first and transforms it inside the warehouse using the warehouse's own compute. Many modern tools, including dbt, Matillion, and Hevo, follow the ELT approach because cloud warehouses like Snowflake and BigQuery are powerful enough to handle transformations at scale, which often makes ELT faster and more cost-efficient than traditional ETL.
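The difference is easiest to see side by side. The sketch below is illustrative only: an in-memory SQLite database stands in for a cloud warehouse, and the records, table names, and cleaning rule are hypothetical.

```python
import sqlite3

# Hypothetical raw records, invented for illustration.
RAW = [("alice", " 120.50 "), ("bob", "80"), ("carol", "n/a")]


def is_number(text):
    """Return True if the text parses as a float."""
    try:
        float(text)
        return True
    except ValueError:
        return False


def etl(conn):
    """ETL: clean in application code, then load only the finished rows."""
    cleaned = [(name, float(amt)) for name, amt in RAW if is_number(amt)]
    conn.execute("CREATE TABLE etl_orders (customer TEXT, amount REAL)")
    conn.executemany("INSERT INTO etl_orders VALUES (?, ?)", cleaned)


def elt(conn):
    """ELT: load raw strings as-is, then transform with SQL in the warehouse."""
    conn.execute("CREATE TABLE raw_orders (customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?)", RAW)
    conn.execute(
        """
        CREATE TABLE elt_orders AS
        SELECT customer, CAST(TRIM(amount) AS REAL) AS amount
        FROM raw_orders
        WHERE TRIM(amount) GLOB '[0-9]*'
        """
    )


conn = sqlite3.connect(":memory:")
etl(conn)
elt(conn)

etl_rows = conn.execute("SELECT * FROM etl_orders ORDER BY customer").fetchall()
elt_rows = conn.execute("SELECT * FROM elt_orders ORDER BY customer").fetchall()
print(etl_rows)
print(elt_rows)
```

Both paths end with the same clean table. The practical difference is that the ELT path keeps `raw_orders` in the warehouse, so the transformation can be rerun or changed later without extracting the data again.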

How do AI and ML help with data transformation?

Machine-learning models can profile incoming datasets to suggest type casts, fuzzy-match dimensions, and flag outliers far faster than manual rules. More advanced systems learn mapping patterns across pipelines, auto-generate transformation code or SQL, and continuously retrain on ground-truth corrections, reducing the tedium of data wrangling while absorbing domain knowledge at scale.

Do I need coding skills to use data transformation tools?

It depends on the tool. No-code platforms like Hevo, Alteryx, and Matillion let you build transformation workflows through visual interfaces without writing code. Code-based tools like dbt and Python require SQL or programming knowledge but offer more flexibility and control. The right choice depends on your team’s technical expertise and the complexity of your transformation logic.

Can I use multiple data transformation tools together?

Yes, and most modern data stacks do. A common setup is using Fivetran or Hevo to move data into the warehouse, dbt to handle SQL transformations, and Great Expectations for data quality validation. Each tool handles a specific layer of the pipeline, and they are designed to work together rather than replace each other.