So, you’re an AdRoll user? It’s nice to talk to someone who prioritizes retargeting customers from a unified platform. Your focus on growing revenue and acquiring customers efficiently is commendable.

At times, you will need to move your marketing data from AdRoll to a data warehouse. That’s where you come in: you take responsibility for replicating data from AdRoll to a centralized repository so that analysts and key stakeholders can make fast, business-critical decisions.

We’ve prepared a simple, straightforward guide without beating around the bush. Read on for two methods for replicating data from AdRoll to Snowflake.

What is AdRoll?

AdRoll is a digital marketing platform that specializes in retargeting and cross-channel advertising. It provides insights into how customers behave and interact across channels such as email, social media, and display advertising, so businesses can manage and optimize their ad campaigns in one place. Using AdRoll, businesses can reach the right customer with the right ad at the right time, making ad spend more efficient and improving conversions.

Also Read: AdRoll to BigQuery

What is Snowflake?

Founded in 2012, Snowflake is a fully managed cloud data platform that runs on Amazon Web Services, Microsoft Azure, and Google Cloud Platform. It is a single platform that supports a wide range of workloads, such as data engineering, data warehousing, and data lakes.

Snowflake’s pricing model bills compute and storage separately, so businesses pay for each independently. Companies can choose on-demand pricing, billed for actual usage, or pre-purchase capacity with an upfront commitment.

Check out the Best Snowflake BI and Reporting Tools in 2025.

Method 1: Replicate Data from AdRoll to Snowflake Using CSV Files

This approach involves manually exporting your data from AdRoll as CSV files and loading them into Snowflake for further processing.

Method 2: Replicate Data from AdRoll to Snowflake Using an Automated ETL Tool

Leverage Hevo’s no-code platform to automate the entire data integration process from AdRoll to Snowflake, keeping data available in near real time without the hassle of manual intervention.

Method 1: Replicate Data from AdRoll to Snowflake Using CSV Files

Follow along to replicate data from AdRoll to Snowflake in CSV format:

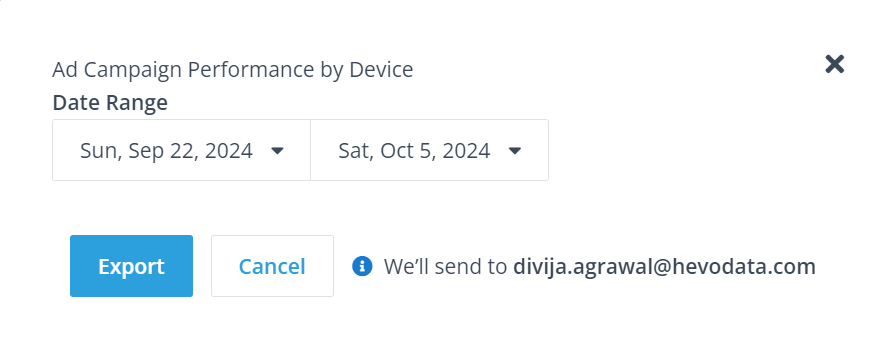

Step 1: Export CSV Files from AdRoll

- Go to the Campaign Reports tab in AdRoll.

- Open the AdRoll report from which you want to export data.

- Click on the “Export” button in the top-right corner. Then, choose the “CSV” format.

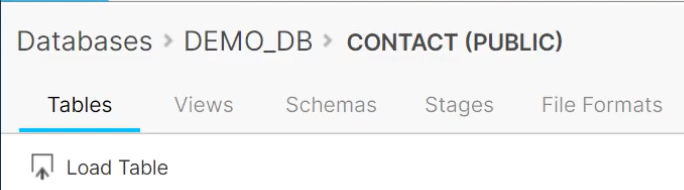
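
Before loading an exported file, it can help to sanity-check it. The following is a minimal sketch, assuming a hypothetical export with `date`, `campaign`, `impressions`, and `clicks` columns (real AdRoll reports will have different columns). It reports the header, the number of data rows, and any lines whose column count does not match the header.

```python
import csv
import io


def summarize_csv(text: str) -> dict:
    """Return the header, the data-row count, and line numbers of rows with the wrong width."""
    reader = csv.reader(io.StringIO(text))
    header = next(reader, None)
    if header is None:
        raise ValueError("CSV is empty")
    row_count = 0
    ragged_lines = []
    for row in reader:
        if not row:
            continue  # ignore blank lines
        row_count += 1
        if len(row) != len(header):
            # reader.line_num is the physical line the row ended on
            ragged_lines.append(reader.line_num)
    return {"columns": header, "rows": row_count, "ragged_lines": ragged_lines}


# Hypothetical export contents, used only for illustration.
sample = (
    "date,campaign,impressions,clicks\n"
    "2024-01-01,Spring Sale,1000,25\n"
    "2024-01-02,Spring Sale,1200\n"  # missing the clicks column
)
summary = summarize_csv(sample)
print(summary)
```

In practice you would read the exported file from disk instead of the inline `sample` string. Rows flagged here are the ones most likely to trigger load errors later.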

Step 2: Launch the Snowflake Load Data Wizard

- From the Snowflake dashboard, click on Databases.

- Select a particular database by clicking on its link.

- Click the Tables tab.

- You should see a list of table names. Click a table name to reveal the table details page.

- Next, click the Load Table button.

This will launch the Load Data Wizard. The wizard provides a convenient way of loading data into a Snowflake table from flat files (such as CSV or TSV), using PUT and COPY commands behind the scenes.

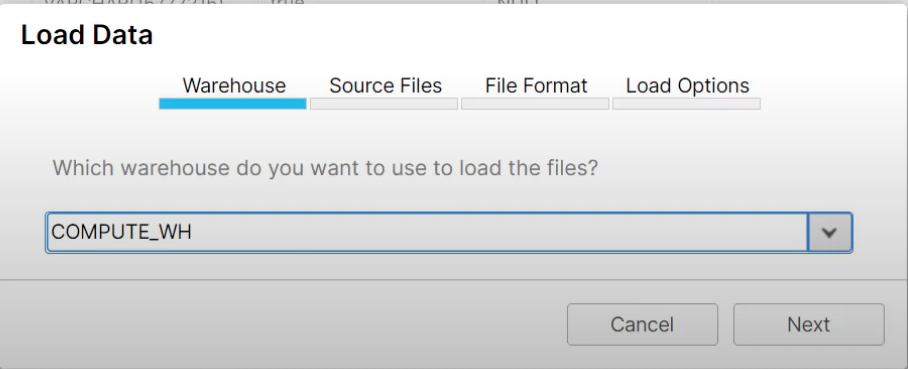
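
To make the "behind the scenes" part concrete, here is a rough sketch of the kind of PUT and COPY INTO statements involved. It only builds the SQL strings and does not connect to Snowflake. The table name `adroll_campaigns`, the file format name `adroll_csv`, and the local path are hypothetical, and the use of the table's own stage (`@%table`) is an assumption; the exact statements the wizard issues may differ.

```python
from typing import List

ON_ERROR_OPTIONS = {"CONTINUE", "SKIP_FILE", "ABORT_STATEMENT"}


def build_load_statements(
    local_file: str, table: str, file_format: str, on_error: str = "ABORT_STATEMENT"
) -> List[str]:
    """Build a PUT (upload to the table stage) and a COPY INTO (stage to table) statement."""
    if on_error not in ON_ERROR_OPTIONS:
        raise ValueError(f"unsupported ON_ERROR value: {on_error}")
    stage = f"@%{table}"  # assumption: stage files in the table's own internal stage
    put_sql = f"PUT file://{local_file} {stage}"
    copy_sql = (
        f"COPY INTO {table} FROM {stage} "
        f"FILE_FORMAT = (FORMAT_NAME = '{file_format}') "
        f"ON_ERROR = {on_error}"
    )
    return [put_sql, copy_sql]


# Hypothetical names and path, for illustration only.
statements = build_load_statements(
    "/tmp/adroll_report.csv", "adroll_campaigns", "adroll_csv", "CONTINUE"
)
for statement in statements:
    print(statement + ";")
```

PUT has to be run from a client such as SnowSQL or a Snowflake driver, which is part of why the wizard is convenient for occasional loads.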

Step 3: Load the CSV Files

- Select a warehouse from the dropdown list. Snowflake will use this warehouse to load data into the table.

- Click Next.

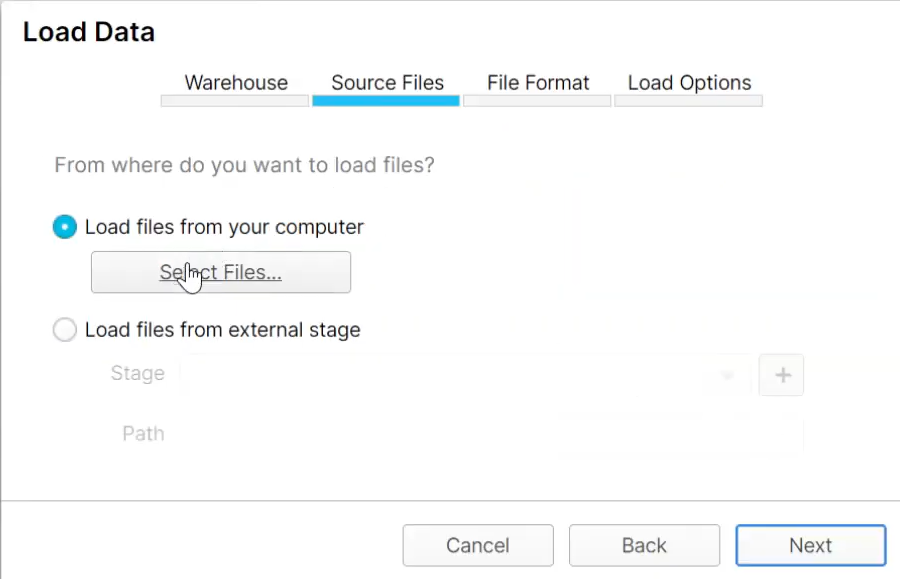

- Select the Load files from your computer option.

- Click the Select Files button.

- Select the CSV files you exported from AdRoll.

- After selecting the files, click on the Open button.

- Finally, click on the Next button.

This will open a dropdown list where you can select or define the format of your files.

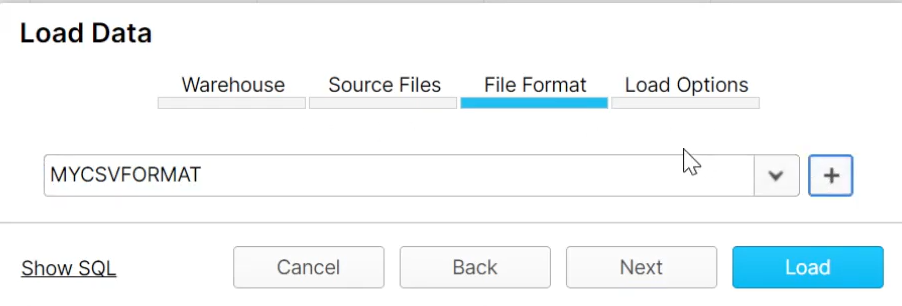

Step 4: Create a File Format

- Click the plus (+) symbol next to the dropdown list.

- Fill in the fields to match the format of your CSV files.

- After filling the fields, click on the Finish button.

- Select your named file format from the dropdown list.

- Click the Next button.

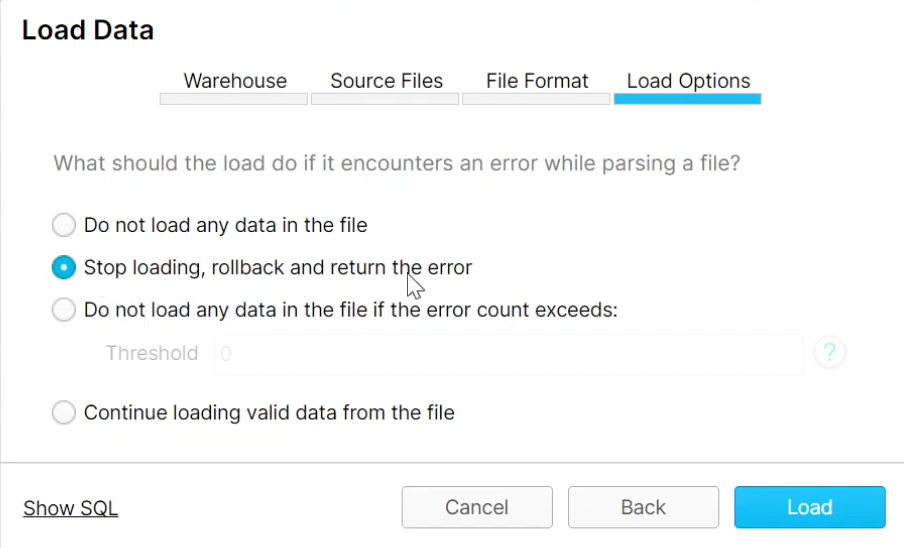
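
The fields you fill in correspond to options of a named file format. As a hedged sketch, the snippet below renders a `CREATE FILE FORMAT` statement from a few common CSV settings. The format name `adroll_csv` and the chosen defaults (comma delimiter, one header row, double-quote enclosure) are assumptions about what a typical export needs, not values taken from AdRoll.

```python
from dataclasses import dataclass


@dataclass
class CsvFormat:
    """A small subset of Snowflake CSV file format options."""

    name: str
    field_delimiter: str = ","
    skip_header: int = 1  # number of header lines to skip
    quote_char: str = '"'  # character that may enclose fields

    def to_sql(self) -> str:
        return (
            f"CREATE OR REPLACE FILE FORMAT {self.name} "
            f"TYPE = 'CSV' "
            f"FIELD_DELIMITER = '{self.field_delimiter}' "
            f"SKIP_HEADER = {self.skip_header} "
            f"FIELD_OPTIONALLY_ENCLOSED_BY = '{self.quote_char}'"
        )


# Hypothetical format name, for illustration only.
format_sql = CsvFormat(name="adroll_csv").to_sql()
print(format_sql)
```

Keeping the format as a named object means later loads can reuse it instead of re-entering the same settings.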

Step 5: Select the Load Options

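In this section, you will specify how Snowflake should behave if it encounters errors in the data files. The supported values are CONTINUE (load the valid rows and skip the bad ones), SKIP_FILE (skip any file that contains errors), and ABORT_STATEMENT (stop the load as soon as an error is found).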
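
To see how the three options differ, here is a small simulation in plain Python. It is not Snowflake's implementation, only an illustration of the behavior under a simplifying assumption: a row is "bad" when its clicks value is not an integer. The file names and rows are made up.

```python
def simulate_load(files: dict, on_error: str) -> list:
    """Simulate loading (campaign, clicks) rows from several files under an ON_ERROR policy."""
    if on_error not in {"CONTINUE", "SKIP_FILE", "ABORT_STATEMENT"}:
        raise ValueError(f"unsupported ON_ERROR value: {on_error}")
    loaded = []
    for name, rows in files.items():
        good_rows = []
        bad_count = 0
        for campaign, clicks in rows:
            try:
                good_rows.append((campaign, int(clicks)))
            except ValueError:
                bad_count += 1
        if bad_count and on_error == "ABORT_STATEMENT":
            # The whole load fails, so nothing is returned.
            raise RuntimeError(f"load aborted: {bad_count} bad row(s) in {name}")
        if bad_count and on_error == "SKIP_FILE":
            continue  # drop every row of the offending file
        loaded.extend(good_rows)  # CONTINUE keeps the valid rows
    return loaded


# Made-up data: a.csv has one bad row, b.csv is clean.
files = {
    "a.csv": [("Spring Sale", "25"), ("Summer Sale", "n/a")],
    "b.csv": [("Fall Sale", "40")],
}
print(simulate_load(files, "CONTINUE"))   # [('Spring Sale', 25), ('Fall Sale', 40)]
print(simulate_load(files, "SKIP_FILE"))  # [('Fall Sale', 40)]
```

With `ABORT_STATEMENT`, the same input raises an error and loads nothing, which is the safest choice when partial data would mislead your analysts.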

Step 6: Load the Data

- Click on the Load button to load your CSV files into your Snowflake table.

- Click the OK button to close the data wizard.

The 6-step guide above replicates data from AdRoll to Snowflake effectively. It works best in the following scenarios:

- Small Data Volume: This method is appropriate when you have only a few reports and each report has a modest number of rows.

- One-Time Data Replication: This method suits your requirements if your business teams need the data only once in a while.

- Little Need for Data Transformation: Manually transforming data in CSV files is difficult and time-consuming. Hence, this method is ideal if the data in your reports is already clean, standardized, and analysis-ready.

- Dedicated Personnel: If your organization has people dedicated to manually downloading and uploading CSV files, then this task is not much of a headache.

Limitations of Manual Method

- When the frequency of replicating data from AdRoll increases, this process becomes highly monotonous.

- It gets worse when you have to transform the raw data every single time.

- As the number of data sources grows, you would have to spend a significant portion of your engineering bandwidth creating new data connectors.

Just imagine building custom connectors for each source, transforming and processing the data, tracking each data flow individually, and fixing issues. Doesn’t it sound exhausting?

Method 2: Replicate Data from AdRoll to Snowflake Using an Automated ETL Tool

Why not explore an automated data pipeline solution? For instance, here’s how Hevo, a cloud-based ETL solution, simplifies data replication from AdRoll to Snowflake:

Step 1: Configure AdRoll as your Source

- Fill in the attributes required to configure AdRoll as your source.

Step 2: Configure Snowflake as your Destination

- Configure Snowflake as the destination.

After these 2 steps, Hevo builds the pipeline for replicating data from AdRoll to Snowflake based on the inputs you provided while configuring the source and the destination.

For in-depth knowledge of how a pipeline is built and managed in Hevo, you can also visit the official documentation for AdRoll as a source and Snowflake as a destination.

You don’t need to worry about security and data loss: Hevo’s fault-tolerant architecture is designed to guard against both. It also enriches your data and transforms it into an analysis-ready form without requiring you to write a single line of code. Here’s what makes Hevo stand out:

- Fully Managed: You don’t need to dedicate time to building your pipelines. With Hevo’s dashboard, you can monitor all the processes in your pipeline, thus giving you complete control over it.

- Data Transformation: Hevo provides a simple interface to cleanse, modify, and transform your data through drag-and-drop features and Python scripts. It can accommodate multiple use cases with its pre-load and post-load transformation capabilities.

- Faster Insight Generation: Hevo offers near real-time data replication, giving you access to up-to-date insights and faster decision-making.

- Schema Management: With Hevo’s auto schema mapping feature, source schemas are automatically detected and mapped to the destination schema.

- Scalable Infrastructure: As the number of sources and the volume of data grow, Hevo can automatically scale horizontally, handling millions of records per minute with minimal latency.

- Transparent Pricing: You can select your pricing plan based on your requirements. The plans are laid out clearly on its website, along with the features each one supports. You can adjust your credit limits and spend notifications for any increased data flow.

- Live Support: The support team is available round the clock to extend exceptional support to its customers through chat, email, and support calls.

Key Advantages of Connecting AdRoll to Snowflake

Here’s a little something for the data analyst on your team. We’ve listed a few core questions you could answer by replicating data from AdRoll to Snowflake. Does your use case make the list?

- How do Paid Sessions and Goal Conversion Rates vary with Marketing Spend and Cash Inflow?

- Which are your most valuable customer segments?

- What is the Marketing Behavioral profile of the Product’s Top Users?
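
As a small illustration of the first question, the sketch below computes conversion rate and cost per conversion per campaign from report rows. The column names (`campaign`, `spend`, `clicks`, `conversions`) and the figures are hypothetical; in a warehouse you would typically express the same aggregation in SQL over the replicated tables.

```python
import csv
import io
from collections import defaultdict


def campaign_metrics(csv_text: str) -> dict:
    """Aggregate spend, clicks, and conversions per campaign and derive simple ratios."""
    totals = defaultdict(lambda: {"spend": 0.0, "clicks": 0, "conversions": 0})
    for row in csv.DictReader(io.StringIO(csv_text)):
        campaign_totals = totals[row["campaign"]]
        campaign_totals["spend"] += float(row["spend"])
        campaign_totals["clicks"] += int(row["clicks"])
        campaign_totals["conversions"] += int(row["conversions"])

    metrics = {}
    for campaign, t in totals.items():
        metrics[campaign] = {
            # Guard against division by zero for campaigns with no activity.
            "conversion_rate": t["conversions"] / t["clicks"] if t["clicks"] else 0.0,
            "cost_per_conversion": (
                t["spend"] / t["conversions"] if t["conversions"] else None
            ),
        }
    return metrics


# Made-up report rows, for illustration only.
report = (
    "campaign,spend,clicks,conversions\n"
    "Spring Sale,100.0,50,5\n"
    "Spring Sale,50.0,50,5\n"
    "Retargeting,80.0,40,8\n"
)
metrics = campaign_metrics(report)
print(metrics)
```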

Summing It Up

Exporting and uploading CSV files is your go-to solution when your data and financial analysts require fresh data from AdRoll only once in a while. However, as the frequency increases, so does the repetitive manual work. To channel your time into productive tasks, you can opt for an automated solution that accommodates regular data replication needs. This is genuinely helpful to marketing teams, who need regular updates on advertising expenses, campaign support costs, site activity, and more.

So, take a step forward. Hevo will help you build an automated no-code data pipeline in a hassle-free manner. Its 150+ plug-and-play native integrations will help you replicate data smoothly from multiple tools to a destination of your choice.

Skeptical? Why not try Hevo for free and make the decision yourself? With Hevo’s 14-day free trial, you can build a data pipeline from AdRoll to Snowflake and try out the experience.

We hope you have found the appropriate answer to the query you were searching for. Happy to help!

FAQs

1. How do I migrate a database to Snowflake?

The classic way to migrate a database to Snowflake is to export data from the source database, transform it where applicable, and load it into Snowflake. Many tools can automate this process and handle large data volumes, such as the Snowpipe service, the Data Migration Service, and third-party ETL/ELT tools like Hevo.

2. Is Snowflake ELT or ETL?

Snowflake supports both ETL and ELT processes but favors ELT: because it is a powerful compute environment, you can load raw data first and then transform it inside Snowflake.
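
The load-first, transform-later pattern can be sketched as follows. This example uses Python's built-in SQLite as a stand-in for the warehouse purely so it runs anywhere; it is not Snowflake, and the table and column names are hypothetical. Raw values land as text, and a SQL statement inside the database then casts and aggregates them.

```python
import sqlite3

# SQLite in memory stands in for the warehouse in this illustration.
conn = sqlite3.connect(":memory:")

# Load step: land the raw rows untouched, with every value stored as text.
conn.execute("CREATE TABLE raw_adroll (campaign TEXT, clicks TEXT, spend TEXT)")
conn.executemany(
    "INSERT INTO raw_adroll VALUES (?, ?, ?)",
    [
        ("Spring Sale", "25", "12.50"),
        ("Spring Sale", "30", "15.00"),
        ("Retargeting", "40", "20.00"),
    ],
)

# Transform step: cast and aggregate inside the database, after loading.
conn.execute(
    """
    CREATE TABLE campaign_summary AS
    SELECT campaign,
           SUM(CAST(clicks AS INTEGER)) AS clicks,
           SUM(CAST(spend AS REAL)) AS spend
    FROM raw_adroll
    GROUP BY campaign
    """
)

summary_rows = conn.execute(
    "SELECT campaign, clicks, spend FROM campaign_summary ORDER BY campaign"
).fetchall()
print(summary_rows)  # [('Retargeting', 40, 20.0), ('Spring Sale', 55, 27.5)]
conn.close()
```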

3. Is Snowflake just a data warehouse?

While Snowflake is widely known as a cloud data warehouse, it extends far beyond the features of a traditional data warehouse: it also supports data lakes, data sharing, and advanced analytics.