Unlock the full potential of your AfterShip data by integrating it with BigQuery. Hevo’s automated pipeline keeps that data flowing with minimal effort.

AfterShip is a third-party shipment tracking service that connects to e-commerce platforms, retrieves the real-time status of orders from multiple shipping companies, and notifies customers of updates. It is an alternative to manually tracking shipments for medium-sized to large businesses that deliver thousands of orders daily. You can manage logistics with AfterShip using an interactive dashboard that tracks delivery rate, package acceptance rate, and courier performance while offering insights into shipping and post-purchase performance.

By connecting it to a cloud data warehouse like BigQuery, you can do advanced analytics using SQL-like queries on vast quantities of tracking and shipping data received from various e-commerce platforms. These analyses will provide insights into ways to improve your company’s performance.

In this article, you will learn two methods to connect AfterShip to BigQuery, along with the limitations of the connection.

Prerequisites

- Fundamental understanding of integration

What is AfterShip?

AfterShip is a web-based application that keeps consumers informed about the status of their online orders. Businesses can leverage the automated features of this platform to improve order processing, marketing, sales, and delivery. Once you connect it to your e-commerce or online business, it immediately imports all of your store’s tracking numbers.

The user interface enables manual tracking number input and combines your e-commerce company’s tracking numbers with client information, such as phone numbers and email addresses. Customers receive an email notification whenever there is an update on their order. As a result, they can keep track of everything they have ordered and stay in touch with their carrier partners in the event of delays or missing items.

Key Features of AfterShip

- Shipping Visibility: Customers expect businesses to inform them about their purchases’ progress by sending them shipping alerts once the order is confirmed. With AfterShip, you can send emails, SMS, and Facebook updates from when an item ships until it reaches the customer’s door. To keep your customers updated about the delivery events, you can choose to send notifications to clients, email subscribers, or yourself.

- Returns: AfterShip has a self-service returns management portal that provides consumers with a better return experience. Under this portal, you can supervise all of your returns and expedite the return process. To reduce queries and increase customer satisfaction, AfterShip offers proactive information on the progress of returns. With this platform, you can plan out intelligent routing rules that help you return items at the lowest possible cost.

What is BigQuery?

BigQuery is a data warehouse hosted on the Google Cloud Platform that helps enterprises with their analytics activities. This Software as a Service (SaaS) platform is serverless and has outstanding data management, access control, and machine learning features (Google BigQuery ML). Google BigQuery excels in analyzing enormous amounts of data and quickly meets your Big Data processing needs with capabilities like exabyte-scale storage and petabyte-scale SQL queries.

BigQuery’s columnar storage makes data searching more manageable and effective. Data is stored in the Colossus file system, while the Dremel query engine processes queries, which can be submitted through a REST API. The storage and processing engines rely on Google’s Jupiter network to quickly transport data from one location to another.

Key Features of BigQuery

- Fully Managed: An in-house setup is not required since Google BigQuery is a fully managed data warehouse. To use BigQuery, you only need a web browser to log in to the Google Cloud project. By offering serverless execution, Google BigQuery takes care of complicated setup and maintenance processes, including Server/VM Administration, Server/VM Sizing, Memory Management, etc.

- Exceptional Performance: Thanks to its column-based design, Google BigQuery offers several advantages over traditional row-based storage, such as higher storage efficiency and faster data scans. Together with support for nested tables for practical data storage and retrieval, this minimizes slot consumption, query time, and data use.

- Security: BigQuery offers column-level protection, verifies identity and access status, and establishes security policies. All data is encrypted at rest and in transit by default. Since it is a component of the Google Cloud ecosystem, it complies with security standards like HIPAA, FedRAMP, PCI DSS, ISO/IEC, and SOC 1, 2, and 3.

- Partitioning: Google BigQuery’s decoupled storage and compute architecture employs column-based segmentation to reduce the amount of data that slot workers read from disk. Once the slot workers have finished reading their data from disk, Google BigQuery automatically determines the optimal data sharding strategy and repartitions the data using its in-memory shuffle function.

AfterShip has a proven ability to manage high data volumes with fault tolerance and durability. BigQuery, in turn, is a data warehouse known for ingesting data quickly and performing near real-time analysis. Moving data from AfterShip to BigQuery can therefore solve some of the biggest data problems for businesses. This article describes two methods to achieve this:

Method 1: Connect AfterShip to BigQuery using Hevo

Hevo Data, an Automated Data Pipeline, provides a hassle-free way to connect AfterShip to BigQuery within minutes through an easy-to-use no-code interface. Hevo is fully managed and automates not only loading data from AfterShip but also enriching it and transforming it into an analysis-ready form, without requiring you to write a single line of code.

GET STARTED WITH HEVO FOR FREE

Method 2: Connect AfterShip to BigQuery using Batch Loading

This method is time-consuming and somewhat tedious to implement. You will have to write custom code for two processes: extracting data from AfterShip and ingesting it into BigQuery. This method is suitable for users with a technical background.

Both methods are explained below.

Methods to Connect AfterShip to BigQuery

Many organizations need to load their data from AfterShip to the BigQuery service to access raw customer data, like shipment types, item checkpoints, etc. Take advantage of Google BigQuery’s capability to efficiently run complex analytical queries across petabytes of data. Connecting AfterShip data with BigQuery provides a more comprehensive insight into your customer interaction and company’s performance.

Furthermore, with the AfterShip to BigQuery integration, you can perform effective real-time automated processes, saving you time when working on repetitive tasks. This integration is the ideal value addition for an e-commerce company or business owner who wants to improve operations, increase efficiency, and sync data throughout their workspace.

To connect AfterShip to BigQuery, you can use several methods like batch loading a set of records, streaming individual/groups of data, or third-party software/services. In addition, you can use queries to generate new data by adding to or replacing existing data in a database.

There are two methods explained below:

- Method 1: Connect AfterShip to BigQuery using Hevo

- Method 2: Connect AfterShip to BigQuery using Batch Loading

Method 1: Connect AfterShip to BigQuery using Hevo

Hevo provides Google BigQuery as a Destination for loading data from any Source system, including AfterShip.

Configure AfterShip as a Source

To configure AfterShip as the Source in your Pipeline, perform these steps:

- In the Asset Palette, choose PIPELINES.

- In the Pipelines List View, click + CREATE.

- Select AfterShip on the Select Source Type page.

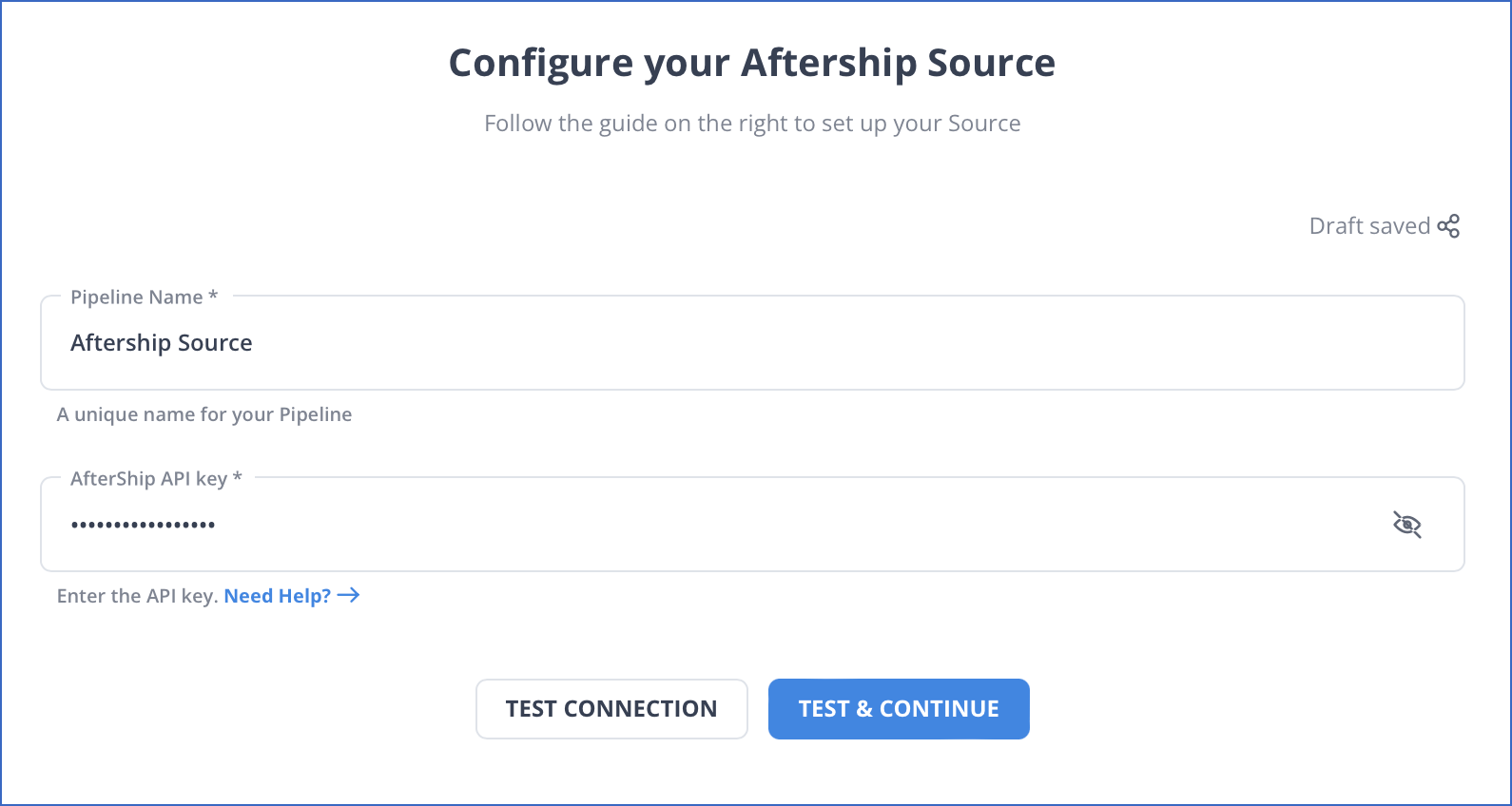

- On the Configure your AfterShip Source page, provide the following information:

  - Pipeline Name: A unique name for the pipeline that should not be longer than 255 characters.

  - API Key: The API key retrieved from your AfterShip account.

- Click on TEST & CONTINUE.

- Continue by configuring the Data Ingestion and setting up the Destination.

Configure BigQuery as a Destination

To configure BigQuery as the Destination, follow these steps:

- In the Asset Palette, choose DESTINATIONS.

- In the Destinations List View, click + CREATE.

- Select Google BigQuery as the Destination type on the Add Destination page.

- Select the authentication method for connecting to BigQuery on the Configure your Google BigQuery Account page.

- Perform one of the following:

  - To connect with a Service Account, follow these steps:

    - Attach the Service Account Key file.

    - Click on CONFIGURE GOOGLE BIGQUERY ACCOUNT.

  - To connect using a User Account, follow these steps:

    - Click on + ADD A GOOGLE BIGQUERY ACCOUNT.

    - Sign in as a user with BigQuery Admin and Storage Admin permissions.

    - Provide Hevo access to your data by clicking Allow.

- On the Configure your Google BigQuery Warehouse page, provide the following information:

  - Destination Name: Give your Destination a distinctive name.

  - Project ID: The BigQuery instance’s Project ID.

  - Dataset ID: The dataset’s name.

  - GCS Bucket: A cloud storage bucket where files must be staged before being transferred to BigQuery.

  - Sanitize Table/Column Names: Select this option to replace any non-alphanumeric characters and spaces in table and column names with an underscore (_).

  - Populate Loaded Timestamp: Enabling this option adds the __hevo_loaded_at column to the Destination table, indicating the time when the Event was loaded to the Destination.

- To test the connection, click TEST CONNECTION and then SAVE DESTINATION to finish the setup.
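The Sanitize Table/Column Names option can be pictured with a short Python sketch. This is only an illustration of the rule as described above, not Hevo’s actual implementation; the function name `sanitize_name` is hypothetical, and whether Hevo also changes case or collapses repeated underscores is not covered here.

```python
import re


def sanitize_name(name: str) -> str:
    """Replace every character that is not a letter or digit with an underscore.

    Assumption: this mirrors only the rule stated in the option's description.
    """
    return re.sub(r"[^0-9A-Za-z]", "_", name)


print(sanitize_name("Tracking Number"))  # Tracking_Number
print(sanitize_name("courier-name"))     # courier_name
```

Running a check like this over your column headers before creating the Pipeline gives a rough idea of the names you can expect to see in BigQuery.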

Method 2: Connect AfterShip to BigQuery using Batch Loading

The second method is batch loading, which loads the source data from AfterShip into a BigQuery table in a single batch operation. The data source can be a CSV file or a collection of log files. The following steps show how to load AfterShip data into Google BigQuery:

- Step 1: Open the Shipments page and then configure the filters.

- Step 2: Export shipments. You will receive the CSV file at the registered email address.

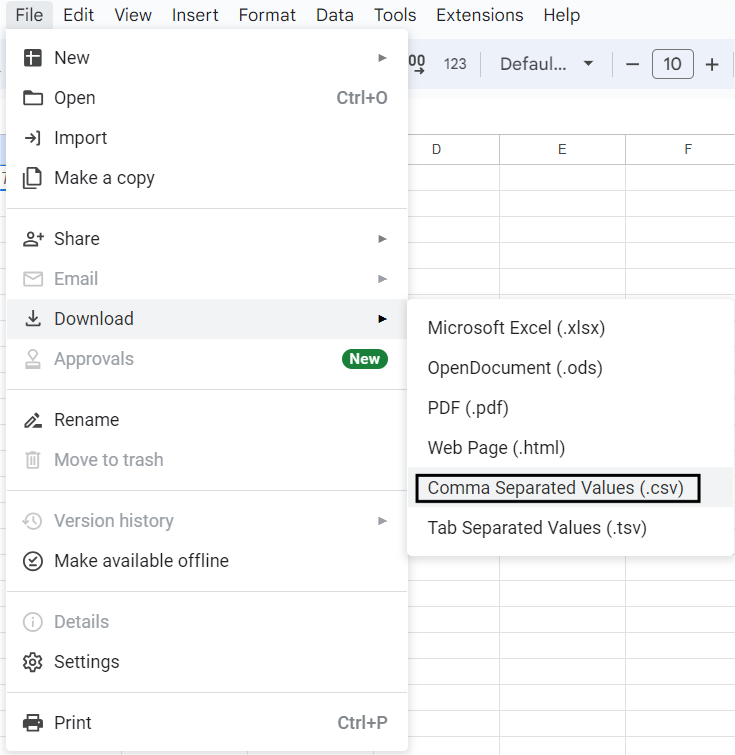

- Step 3: Before importing the AfterShip CSV file, open it in Google Sheets.

  - On the top left menu, choose File.

  - Click Download As, followed by Comma-Separated Values (.csv).

- Step 4: The data will be exported to CSV and downloaded to your local PC.

- Step 5: Before loading the CSV file, make sure you have the Identity and Access Management (IAM) permissions needed to load data into BigQuery.

The following IAM permissions are required to load data and add to or replace an existing table or partition in BigQuery.

bigquery.tables.create

bigquery.tables.updateData

bigquery.tables.update

bigquery.jobs.createEach of the predefined IAM roles listed below includes the permissions required to import data into a BigQuery table or partition:

roles/bigquery.dataEditor

roles/bigquery.dataOwner

roles/bigquery.admin (includes the bigquery.jobs.create permission)

bigquery.user (includes the bigquery.jobs.create permission)

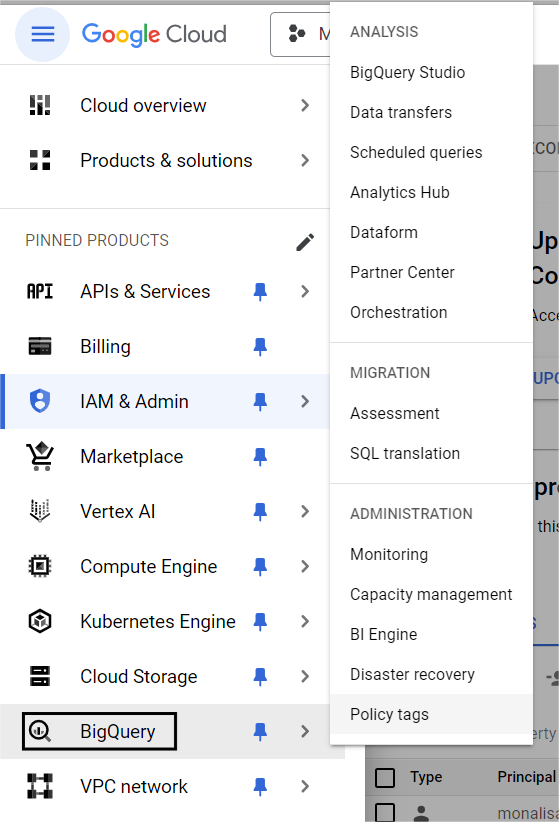

bigquery.jobUser (includes the bigquery.jobs.create permission)- Step 6: Navigate to the BigQuery section in the Cloud console to connect AfterShip to BigQuery.

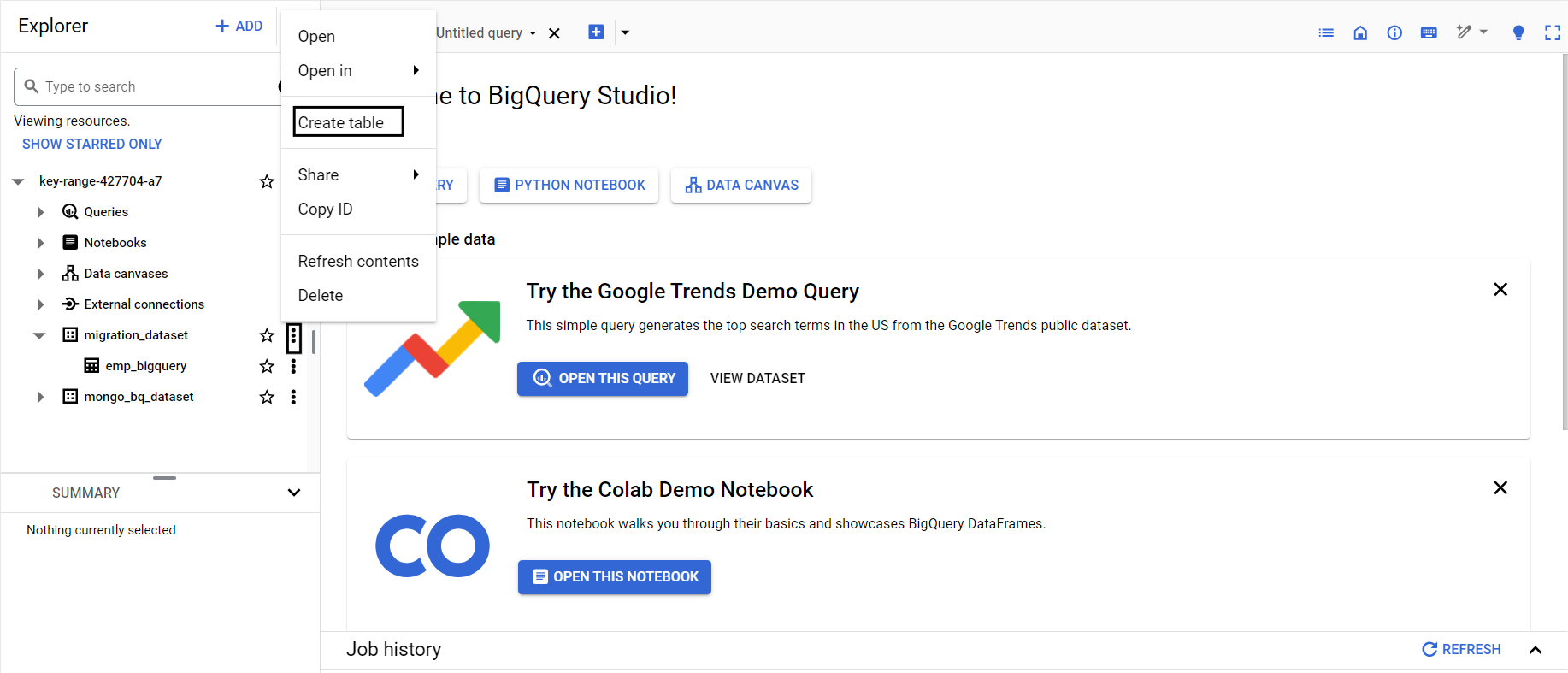

- Step 7: In the Explorer pane, expand your project and select a dataset.

- Step 8: Click + Create table in the Dataset info section. Then, in the Create table panel, select Upload as the source.

- Step 9: Enter the following information in the Create table panel:

  - Under Select File, click Browse, select the file you want to upload, and click Open.

  - Choose CSV as the file format.

  - Enter the following information in the Destination section:

    - Under Dataset, select the dataset in which you wish to create the table.

    - Enter the name of the table you wish to create in the Table field.

    - Make sure the Table type is set to Native table.

- Step 10: Provide the schema definition in the Schema section. Choose Auto-detect to enable automatic schema detection, or enter the schema manually using one of the options in Steps 11 and 12.

- Step 11: Select Edit as Text and paste the schema as a JSON array. A JSON array uses the same format as a JSON schema file. To view an existing table’s schema in JSON format, run the following command:

bq show --format=prettyjson dataset.table

- Step 12: Alternatively, click + Add field and enter each field’s Name, Type, and Mode.

- Step 13: For additional configurations, click on Advanced Options.

- Step 14: Click Create table.
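The console steps above can also be scripted. The following is a minimal sketch, assuming the `google-cloud-bigquery` Python client library is installed and application default credentials are configured. The column names in `SHIPMENT_SCHEMA` are hypothetical placeholders for the headers in your own export, and `schema_as_json` and `load_csv` are illustrative helper names. Declaring the tracking number as STRING is a deliberate choice here, since schema auto-detection may infer a numeric type for all-digit values.

```python
import json

# Hypothetical column names; replace them with the headers in your AfterShip export.
SHIPMENT_SCHEMA = [
    {"name": "tracking_number", "type": "STRING", "mode": "REQUIRED"},
    {"name": "courier", "type": "STRING", "mode": "NULLABLE"},
    {"name": "status", "type": "STRING", "mode": "NULLABLE"},
]


def schema_as_json(fields):
    """Return the schema as a JSON array that can be pasted into Edit as Text."""
    return json.dumps(fields, indent=2)


def load_csv(csv_path, table_id, fields=SHIPMENT_SCHEMA):
    """Load a local CSV file into a BigQuery table using an explicit schema."""
    # Imported here so that schema_as_json works without the client library.
    from google.cloud import bigquery

    client = bigquery.Client()  # uses application default credentials
    job_config = bigquery.LoadJobConfig(
        source_format=bigquery.SourceFormat.CSV,
        skip_leading_rows=1,  # skip the header row
        schema=[
            bigquery.SchemaField(f["name"], f["type"], mode=f["mode"])
            for f in fields
        ],
    )
    with open(csv_path, "rb") as source_file:
        job = client.load_table_from_file(
            source_file, table_id, job_config=job_config
        )
    job.result()  # wait for the load job to finish
    return client.get_table(table_id).num_rows


print(schema_as_json(SHIPMENT_SCHEMA))
```

Calling `load_csv("shipments.csv", "your-project.your_dataset.shipments")` would then run the load job; the file name and table ID here are placeholders.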

Limitations of Connecting AfterShip to BigQuery

Exporting data from AfterShip this way relies on Google Sheets, which can strip leading zeros from tracking numbers. For example, 0123321123475820 and 0989231246738192 come through as 123321123475820 and 989231246738192. These truncated tracking numbers return no tracking information until the data is cleaned. To avoid this, you can use a no-code/low-code platform like Hevo Data to connect AfterShip to BigQuery.
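If you process the exported CSV with your own script instead, reading the tracking number column as text keeps the leading zeros intact. The sketch below uses Python’s standard `csv` module; the two-column sample and the column names `tracking_number` and `courier` are hypothetical stand-ins for a real export.

```python
import csv
import io

# Hypothetical two-column export; real AfterShip exports contain more columns.
SAMPLE_EXPORT = (
    "tracking_number,courier\n"
    "0123321123475820,ups\n"
    "0989231246738192,fedex\n"
)


def read_tracking_numbers(csv_file):
    """Read tracking numbers as text so that leading zeros are preserved."""
    reader = csv.DictReader(csv_file)
    return [row["tracking_number"] for row in reader]


numbers = read_tracking_numbers(io.StringIO(SAMPLE_EXPORT))
print(numbers)  # ['0123321123475820', '0989231246738192']

# Converting a value to an integer is what drops the leading zero.
print(int(numbers[0]))  # 123321123475820
```

The same principle applies on the BigQuery side: a tracking number column declared as STRING keeps the value exactly as exported.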

Conclusion

In this article, you learned about the main features of AfterShip and Google BigQuery and two methods to integrate AfterShip with BigQuery. AfterShip is an automated shipment tracking service used by eCommerce companies to keep track of their shipments on a single platform. With the data from AfterShip, you can study delivery rates, courier performance, and post-purchase behavior. Google BigQuery allows you to analyze this data to find meaningful insights to improve user experience.

However, as a developer, extracting complex data from a diverse set of data sources such as databases, CRMs, project management tools, streaming services, and marketing platforms into your database can be quite challenging. If you come from a non-technical background or are new to data warehousing and analytics, Hevo Data can help!

Visit our Website to Explore Hevo

Hevo Data will automate your data transfer process, allowing you to focus on other aspects of your business like Analytics, Customer Management, etc. This platform allows you to transfer data from 100+ data sources like AfterShip to Cloud-based Data Warehouses like Snowflake, Google BigQuery, Amazon Redshift, etc. It will provide you with a hassle-free experience and make your work life much easier.

Want to take Hevo for a spin? Sign Up for a 14-day free trial and experience the feature-rich Hevo suite first hand.

You can also have a look at our unbeatable pricing that will help you choose the right plan for your business needs!

Frequently Asked Questions

1. How do I upload data to a BQ table?

You can upload data to BigQuery using the web UI, bq command-line tool, BigQuery API, or from Google Cloud Storage.

2. When to use BigQuery vs Cloud SQL?

Use BigQuery for large-scale analytics and Cloud SQL for traditional relational database applications.

3. How do I get data from cloud storage to BigQuery?

Upload files to GCS, then load them into BigQuery using the web UI, bq tool, or API.