Discover the 10 best data engineering tools in 2025, compare their features, pros, cons, and benefits, and learn why Hevo Data is the ideal choice for businesses looking for quick, reliable, and no-code data engineering.

KEY TAKEAWAYS

Data engineering tools are software used to collect, process, move, and organize large sets of data for analysis or use in applications. Here are the major types of data engineering tools:

Data Migration Tools

These tools help move data from one system, storage, or format to another without losing or corrupting the data.

- Hevo Data: It is a no-code, real-time data migration tool. The software is ideal for businesses looking for transparent pricing.

- Fivetran: It offers 700+ connectors to reduce integration overhead and engineering effort.

Data Warehousing Tools

These tools collect, store, and manage large volumes of data from different sources in a central repository for analysis and reporting.

- Snowflake: This is a cloud-based data platform that separates storage and compute, so users can scale each independently.

- Amazon Redshift: Amazon Redshift is a cloud data warehouse tool that is ideal for fast querying on large datasets.

- Google BigQuery: This is a serverless data warehouse that runs fast SQL queries on massive datasets.

Data Transformation Tools

Data transformation tools help clean, reshape, and convert raw data into usable formats using languages like SQL, Python, or frameworks like dbt.

- Python: It speeds up data cleaning with the Pandas and NumPy libraries, reducing preparation time for analysis.

- SQL: It offers in-database transformations to cut processing costs and ensure compliance-ready datasets.

- dbt: It offers version-controlled transformations to improve collaboration and documented data workflows.

Data Visualization Tools

These tools turn data into charts, graphs, and dashboards to make insights easier to understand.

- Power BI: Power BI lets users connect to data sources, build interactive dashboards, and share reports.

- Tableau: Tableau offers real-time dashboards and advanced analytics integration to find insights faster.

Modern data stacks are getting more complex, with more tools, more data sources, and more pressure to deliver insights fast. Unsurprisingly, business intelligence applications are projected to use data worth $63.5 billion by 2028, which is just over two years away.

While this opens immense opportunities for businesses to make smart, quick decisions, the challenges are also real.

For data engineers, more data means building reliable, scalable pipelines without wasting time stitching together the wrong tools. This is where data engineering tools come in.

From managing warehouses to transforming and moving data, the right tools can simplify workflows. They can reduce maintenance headaches and improve the quality of decisions.

But the sheer number of these tools makes it hard to choose one as they focus on different areas, have different pricing plans, and more.

That’s why in this blog, we break down:

- Different types of data engineering tools.

- 10 best data engineering tools to build pipelines.

- Benefits of using data engineering tools for businesses.

- The key factors to look for when picking data engineering tools.

Let’s get started.

What are Data Engineering Tools?

Data engineering tools are the essential software and platforms that handle the management, transformation, and organization of your data throughout its lifecycle.

Together, these tools help you build a reliable data infrastructure that supports data-driven decision-making and analytics.

Check out the table for a quick overview of the types of data engineering tools:

| Tool | Top Features | Pros | Cons | Free Plan |
| --- | --- | --- | --- | --- |
| Data Migration Tools | | | | |
| Hevo Data | No-code, 150+ connectors, real-time sync | Wide coverage, scales well | Limited transformations | ✅ (1M events/mo) |
| Fivetran | 700+ connectors, incremental sync | Wide coverage, scales well | Expensive | ✅ |
| Data Warehouse Tools | | | | |
| Snowflake | Cloud-native, multi-cloud | Scalable, fast queries, easy UI | Complex | ❌ |
| Amazon Redshift | AWS integration, automated backups | Strong security, AWS ecosystem | Costly data transfers | ✅ |
| Google BigQuery | Serverless, real-time analytics | No infra, highly scalable | Surprise billing | ✅ (on demand) |
| Data Transformation Tools | | | | |
| Python | Pandas, PySpark ecosystem | Free, large community | Limited threading, slower at scale | ✅ (open-source) |
| SQL | In-database logic, BI integration | Accurate, powerful queries | Hard for beginners | ✅ (free) |
| dbt | SQL modeling, testing/docs | Modular, team-friendly | Depends on warehouse performance | ✅ (free) |
| Data Visualization Tools | | | | |
| Power BI | AI insights, MS integration | Easy to use, strong ecosystem | Paid sharing | ❌ (free trial) |
| Tableau | Real-time data, drag-drop UI | Easy to use, strong ecosystem | Lag with large datasets | ✅ (free) |

Hevo is a streamlined data integration platform that automates the ETL process, helping data engineers manage data pipelines efficiently. Key features include:

- Automated Data Integration: Connects to multiple data sources effortlessly.

- Real-Time Data Sync: Keeps analytics current with near real-time updates.

- No-Code Transformation: Simplifies data transformations without coding.

- Scalable Architecture: Grows with your data needs.

Hevo enhances data management efficiency, making it a powerful tool for modern data engineering. Join our 2000+ happy customers and make a wise choice for yourself.

Get Started with Hevo for Free

Best Data Migration Tools

The right data engineering tool helps businesses extract, manage, and visualize data with efficiency, speed, and accuracy. But an overabundance of data engineering tools makes it hard to know which one is the right choice.

Here are the top 10 data engineering tools you can choose from in 2025:

I. Best Data Migration Tools for Data Engineers

Data migration tools help engineers move data efficiently between systems without breaking pipelines or losing accuracy.

But not all data migration tools are built the same way. Here are a few of the best in the market now.

1. Hevo Data

Hevo Data is a no-code data migration tool built for modern teams that want speed and ease. With over 150 native connectors, it helps move data from diverse sources into your warehouse without any complex coding. Its automation-first design ensures smoother transfers, reduces manual errors, and saves time.

One of Hevo’s strong points is real-time data movement. Instead of waiting for batch loads, you can access updated data in near real time. This helps teams that rely on time-sensitive insights make fast, data-driven decisions.

Hevo also comes with built-in monitoring. This means you can track pipelines, spot issues quickly, and keep workflows running smoothly.

Top Features

- In-flight data transformation: Use Python to clean, format, and prepare data before loading it into the destination.

- Flexible data replication: Choose from full historical data sync, incremental data capture, etc., as you need.

- Security and compliance: Ensure security with data encryption at rest and in transit, access control, and compliance checks.

- Multi-tenant platform: Process large data sets using its multi-tenant platform for quick scaling up or down.

- Automated schema management: Adjust to schema changes on the go to prevent broken pipelines during transfers.
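To make the idea of in-flight transformation concrete, here is a minimal Python sketch of the kind of cleanup that can run on each record before it is loaded. The `clean_event` function and the field names (`email`, `signup_date`, `plan`) are hypothetical and chosen only for illustration. This is not Hevo's transformation API, just the general pattern.

```python
from datetime import datetime


def clean_event(event: dict) -> dict:
    """Return a cleaned copy of one record before it is loaded (hypothetical helper)."""
    cleaned = dict(event)  # copy so the caller's record is not mutated

    # Normalize text: trim whitespace and lowercase the email address.
    if cleaned.get("email"):
        cleaned["email"] = cleaned["email"].strip().lower()

    # Reformat a DD/MM/YYYY date string into ISO 8601 (YYYY-MM-DD).
    if cleaned.get("signup_date"):
        parsed = datetime.strptime(cleaned["signup_date"], "%d/%m/%Y")
        cleaned["signup_date"] = parsed.date().isoformat()

    # Replace a missing plan with an explicit default value.
    if not cleaned.get("plan"):
        cleaned["plan"] = "unknown"

    return cleaned


raw = {"email": "  Ana@Example.COM ", "signup_date": "07/03/2025", "plan": None}
print(clean_event(raw))
```

Keeping each step small and side-effect free makes a transformation like this easy to test on sample records before it touches a live pipeline.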

Pricing Model

Hevo provides transparent pricing that ensures no billing surprises, even as you scale.

It provides four pricing plans, which are:

- Free: For moving minimal amounts of data from SaaS tools. Provides up to 1M events/month.

- Standard: $239/Month – For moving limited amounts of data from SaaS tools and databases.

- Professional: $679/Month – For considerable data needs and higher control over data ingestion.

- Business Critical: Custom pricing – For advanced data requirements like real-time data ingestion.

Learn more about our pricing plans.

Pros

- It is a zero-maintenance platform.

- It supports Change Data Capture.

- You can also load your Historical Data using Hevo.

Learn More: What is ETL? Guide to Extract, Transform, Load Your Data

2. Fivetran

Fivetran is built for enterprises that want fully automated ELT pipelines at scale. With over 700 connectors, it covers a vast range of data sources, from SaaS tools to complex databases. Its automated schema handling reduces maintenance and keeps pipelines consistent.

Fivetran shines in large-scale batch data movement rather than real-time sync. This makes it ideal for teams looking for wide data transfer coverage and automation.

Features

- Quick implementation: Implement Fivetran with a few clicks and start using it with minimum configuration.

- Enterprise-grade security: Keep data secure during migration with built-in compliance with SOC 2, GDPR, and HIPAA standards.

- Incremental Data Sync: Move new or updated records only as needed to save time and cost.
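Incremental sync is commonly built on a high-water mark: remember the latest change timestamp already copied and fetch only rows newer than it. The sketch below shows that general pattern in Python. It is not Fivetran's implementation, and the `incremental_sync` function, the row layout, and the `updated_at` column are assumptions made for illustration.

```python
from datetime import datetime


def incremental_sync(source_rows, destination, last_synced):
    """Copy only rows changed after `last_synced`; return the new watermark."""
    watermark = last_synced
    for row in source_rows:
        if row["updated_at"] > last_synced:
            destination[row["id"]] = row  # upsert keyed by primary key
            watermark = max(watermark, row["updated_at"])
    return watermark


source = [
    {"id": 1, "name": "Ana", "updated_at": datetime(2025, 1, 1)},
    {"id": 2, "name": "Ben", "updated_at": datetime(2025, 1, 5)},
    {"id": 3, "name": "Cy", "updated_at": datetime(2025, 1, 9)},
]
destination = {1: source[0]}  # row 1 was copied by an earlier run
new_watermark = incremental_sync(source, destination, datetime(2025, 1, 1))
print(sorted(destination), new_watermark)
```

One design caveat: a strict `>` comparison can skip rows that share the exact timestamp of the stored watermark, so real pipelines often add a tie-breaker or rely on change data capture instead.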

Pricing Model

You can start for free. Fivetran has four main pricing plans:

- Free

- Starter

- Standard

- Enterprise

Pros

- Set up, connect, and sync data sources with little manual effort.

- Pre-built connectors for databases, SaaS apps, and cloud services.

- Highly scalable to handle growing data volumes and data sources.

Cons

- Expensive with large volumes of data or many connectors.

- Not flexible for customized data transformations or integrations.

Learn More: Top 11 Fivetran Competitors & Alternatives (Free & Paid)

II. Top Data Warehousing Tools for Data Engineers

Data warehousing tools give engineers a central place to store and manage structured data from multiple sources. These tools also make it easier to run queries, scale storage, and support analytics.

Here are a few of the top data warehousing tools in the market in 2025.

1. Snowflake

Snowflake is a cloud-native data warehousing platform designed for speed, scale, and simplicity. It lets businesses unify structured and semi-structured data in one place while ensuring high performance. Teams can use it to easily query large datasets without worrying about infrastructure limits.

Because Snowflake separates storage from compute, it offers flexibility for diverse workloads. Its cloud-native design also means no hardware or complex setup is required, and it can scale quickly to meet changing needs.

Features

- Native support for semi-structured data: Handle JSON, Avro, and Parquet without complex data transformations.

- Cloud-agnostic: Deploy Snowflake on AWS, Azure, or Google Cloud.

- Secure data sharing: Ensure governed, real-time sharing of data as needed across teams, partners, and external stakeholders.
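Semi-structured data such as JSON often nests objects inside objects. Without native warehouse support, records like these typically need to be flattened before they can be queried as columns. The sketch below shows that flattening step in plain Python so the saved effort is easier to picture. The `flatten` helper and the sample record are hypothetical and unrelated to Snowflake's own functions.

```python
import json


def flatten(record: dict, parent_key: str = "", sep: str = ".") -> dict:
    """Flatten nested dicts into a single level with dotted keys."""
    flat = {}
    for key, value in record.items():
        full_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, full_key, sep))
        else:
            flat[full_key] = value  # lists and scalars are kept as-is
    return flat


raw = '{"id": 7, "user": {"name": "Ana", "address": {"city": "Lisbon"}}, "tags": ["a", "b"]}'
flat = flatten(json.loads(raw))
print(flat)
```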

Pricing Model

Snowflake has four main pricing plans. What each plan offers varies with the cloud platform where it is deployed:

- Standard

- Enterprise

- Business Critical

- Virtual Private Snowflake

Snowflake charges a monthly fee for data stored on the platform. You can check out how Snowflake pricing works.

Pros

- You can easily scale it up or down to handle varying workloads.

- High-speed query processing through optimized data processing.

- It has a simple, easy-to-use interface.

Cons

- Learning curve with advanced features and optimizing usage.

- Integrating Snowflake with legacy systems is complex.

Learn More: Snowflake Data Warehouse 101: A Comprehensive Guide

2. Amazon Redshift

Amazon Redshift is a cloud data warehouse built to handle large-scale analytics workloads. It is designed for speed, reliability, and scalability. Because it integrates with the AWS ecosystem, it is a natural fit for teams already using Amazon Web Services.

Teams can run complex queries across huge datasets thanks to Redshift’s massively parallel processing (MPP) architecture. With automated backups and strong security, it also simplifies enterprise data ops management.

Features

- Automated backups and snapshots: Take continuous backups to Amazon S3 automatically with easy restoration options.

- Deep AWS Integration: Integrate seamlessly with S3, Glue, Athena, and other AWS tools for teams already using the AWS ecosystem.

- Columnar storage: Store data in columns instead of rows to improve query speed and reduce storage costs.
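The difference between row and column layouts can be sketched in a few lines of Python. This is a conceptual illustration only, not Redshift's actual storage format, and the sample data and the `run_length_encode` helper are made up for the example. It shows why an aggregate over one field reads less data in a column layout, and why similar values stored together compress well (run-length encoding is one simple example of such compression).

```python
# The same three records in two layouts.
rows = [
    {"order_id": 1, "region": "EU", "amount": 120.0},
    {"order_id": 2, "region": "US", "amount": 80.0},
    {"order_id": 3, "region": "EU", "amount": 50.0},
]

# Column-oriented layout: one list per column.
columns = {
    "order_id": [1, 2, 3],
    "region": ["EU", "US", "EU"],
    "amount": [120.0, 80.0, 50.0],
}

# Row layout: every full record is visited to total a single field.
total_from_rows = sum(row["amount"] for row in rows)

# Column layout: only the "amount" column is read.
total_from_columns = sum(columns["amount"])


def run_length_encode(values):
    """Compress runs of repeated values into [value, count] pairs."""
    encoded = []
    for value in values:
        if encoded and encoded[-1][0] == value:
            encoded[-1][1] += 1
        else:
            encoded.append([value, 1])
    return encoded


print(total_from_rows, total_from_columns)
print(run_length_encode(sorted(columns["region"])))
```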

Pricing Model

Redshift offers a free trial and on-demand pricing. You can check out the pricing plans to learn more about it.

Pros

- Automates backups, scaling, and maintenance tasks to reduce costs.

- Robust data security to keep your data secure at rest and in transit.

- SQL-based querying and integration with BI and visualization tools.

Cons

- Performance depends on how well queries and resources are tuned.

- Expensive to transfer large data sets to other services or regions.

Learn More: AWS Redshift: Features, Pricing & Best Practices

3. Google BigQuery

Google BigQuery is a fully managed, serverless data warehouse for quick data analytics. It is also ideal for businesses already in the GCP ecosystem. Its serverless architecture eliminates the need for infrastructure management. This means that teams can focus on analyzing data instead of maintaining hardware.

With its speed and ease of use, BigQuery handles enterprise-scale workloads effortlessly. The platform also supports streaming data ingestion and advanced analytics with real-time insights.

Features

- BigQuery BI Engine: In-memory analysis to speed up dashboards and interactive reports for BI tools like Looker and Looker Studio (formerly Data Studio).

- Real-time analytics: Ingest and analyze streaming data for up-to-the-minute insights and faster decision making.

- Federated Queries: Query data directly from sources like Google Drive, Cloud Storage, or external databases without migration.

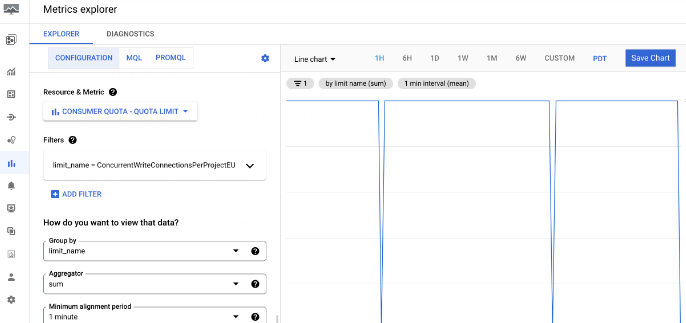

Google BigQuery’s dashboard will look like this:

Pricing Model

It offers on-demand pricing according to your data processing needs. Know more about BigQuery pricing.

Pros

- No infrastructure management is required.

- Google’s infrastructure and optimizations for faster query execution.

- Handle large datasets without requiring manual intervention.

Cons

- Steep learning curve to master advanced features.

- On-demand pricing is expensive with large or frequent queries.

III. Best Data Transformation Tools

1. Python

Python is one of the most popular open-source programming languages for data transformation. It is widely adopted by data engineers and analysts for its flexibility, and they rely on it to clean, reshape, and prepare data for analysis and downstream applications.

Its ecosystem includes libraries like Pandas, NumPy, and PySpark, which let Python handle everything from basic data wrangling to large-scale processing.

Features

- Integration with other tools: Work smoothly with databases, cloud platforms, and ETL frameworks for diverse data-related functions.

- Highly flexible and scalable: Adapt Python as needed by teams for small scripts or enterprise-scale data pipelines.

- Extensive data cleaning: Handle missing values, duplicates, outliers, and inconsistent formats with ease using Python.
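The cleaning steps listed above can be sketched with the Python standard library alone, so the example runs without extra installs. The sample records, the `clean` function, and the `max_price` threshold are assumptions made for illustration. In Pandas, `DataFrame.drop_duplicates` and `DataFrame.fillna` cover similar ground with less code.

```python
from statistics import median

records = [
    {"id": 1, "city": " lisbon ", "price": 100.0},
    {"id": 2, "city": "Porto", "price": None},      # missing value
    {"id": 1, "city": " lisbon ", "price": 100.0},  # duplicate of id 1
    {"id": 3, "city": "PORTO", "price": 110.0},     # inconsistent format
    {"id": 4, "city": "Faro", "price": 9000.0},     # likely outlier
]


def clean(rows, max_price=1000.0):
    # 1. Drop duplicates, keeping the first row seen for each id.
    seen, unique = set(), []
    for row in rows:
        if row["id"] not in seen:
            seen.add(row["id"])
            unique.append(dict(row))

    # 2. Standardize inconsistent text formats.
    for row in unique:
        row["city"] = row["city"].strip().title()

    # 3. Remove outliers using a simple, assumed threshold.
    kept = [r for r in unique if r["price"] is None or r["price"] <= max_price]

    # 4. Fill missing prices with the median of the known prices.
    fill = median(r["price"] for r in kept if r["price"] is not None)
    for row in kept:
        if row["price"] is None:
            row["price"] = fill
    return kept


cleaned = clean(records)
print(cleaned)
```

A fixed threshold is the simplest possible outlier rule. Real pipelines often prefer a statistical rule, such as a distance from the median, that adapts to the data.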

Pricing Model

Python is available at no cost and can be freely modified and distributed under the Python Software Foundation (PSF) License.

Pros

- Accessible to both beginning and experienced programmers.

- It supports a wide range of applications.

- Active community with extensive support and resources.

Cons

- Slower execution speed compared to languages like Java or C++.

- In standard CPython, the Global Interpreter Lock (GIL) allows only one thread to execute Python code at a time within a single process.

Learn More: Learn Data Analytics with Python: 4 Easy Steps

2. SQL

SQL is among the most widely used tools for data transformation. This is especially true for teams working directly within databases and warehouses. It allows teams to filter, join, aggregate, and reshape datasets quickly. As such, SQL has become an essential skill for data engineers.

As SQL runs natively where the data lives, it avoids unnecessary movement and processing overhead. This makes it ideal for transforming large datasets for reporting or analysis.

Features

- In-database processing: Transform data directly within the warehouse without external tools using SQL.

- Advanced query functions: Use advanced query functions, like joins, aggregations, and window functions, for complex reshaping.

- Strong BI Integration: Power dashboards and analytics tools by delivering clean, transformed data with SQL.
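To show joins, aggregations, and window functions together, the sketch below runs SQL against an in-memory SQLite database through Python's built-in `sqlite3` module. The `customers` and `orders` tables and their contents are invented for the example, and window functions need SQLite 3.25 or newer, which recent Python builds generally bundle. A warehouse would run comparable SQL at far larger scale.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'EU'), (2, 'US');
    INSERT INTO orders VALUES (1, 1, 120.0), (2, 1, 50.0), (3, 2, 80.0);
""")

# Join + aggregation: total order amount per region.
totals = conn.execute("""
    SELECT c.region, SUM(o.amount) AS total
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    GROUP BY c.region
    ORDER BY c.region
""").fetchall()

# Window function: running total of amounts per customer, ordered by order id.
running = conn.execute("""
    SELECT id, customer_id,
           SUM(amount) OVER (PARTITION BY customer_id ORDER BY id) AS running_total
    FROM orders
    ORDER BY id
""").fetchall()

print(totals)
print(running)
conn.close()
```

Unlike `GROUP BY`, the window function keeps every order row and adds the running total alongside it, which is what makes it useful for reshaping rather than only summarizing.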

Pricing Model

SQL is a language, so there are no costs associated with it. Costs come from the database systems that implement SQL.

Pros

- Well-supported across various database management systems.

- Accurate and consistent data with constraints and transaction management.

- Complex queries and data manipulations with a single language.

Cons

- A steep learning curve for complex querying and database design.

- Not ideal for non-relational or NoSQL databases.

Learn More: The Role of SQL in Data Analytics: Tools, Queries, and Techniques

3. dbt

dbt (data build tool) is a modern transformation tool built for analytics engineering. It allows teams to write transformations in SQL and manage them like software code. It helps bring version control and collaboration into the transformation process.

dbt avoids data movement and leverages the warehouse’s power for transformations. As a result, dbt is highly efficient, scalable, and well-suited for teams working on ELT.

Features

- SQL-based modeling: Build modular, reusable transformation logic using plain SQL for faster processes.

- Version control integration: Manage transformations with Git for collaboration and traceability.

- Testing and documentation: Add tests and auto-generate documentation to ensure data quality as needed.
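dbt ships with generic data tests such as `not_null` and `unique`, which are declared in project files and executed as SQL in the warehouse. The Python below is not dbt syntax. It only mirrors the logic of those two checks so it is clear what a failing test reports. The `check_not_null` and `check_unique` helpers and the sample `orders` rows are hypothetical.

```python
def check_not_null(rows, column):
    """Return the rows where `column` is missing."""
    return [row for row in rows if row.get(column) is None]


def check_unique(rows, column):
    """Return the values of `column` that appear more than once."""
    seen, duplicates = set(), []
    for row in rows:
        value = row.get(column)
        if value in seen and value not in duplicates:
            duplicates.append(value)
        seen.add(value)
    return duplicates


orders = [
    {"order_id": 1, "customer_id": 10},
    {"order_id": 2, "customer_id": None},
    {"order_id": 2, "customer_id": 11},
]

failures = {
    "not_null(customer_id)": check_not_null(orders, "customer_id"),
    "unique(order_id)": check_unique(orders, "order_id"),
}
for name, failing in failures.items():
    print(name, "FAIL" if failing else "PASS", failing)
```

As in this sketch, a data test passes when it finds no offending records, so the output of a failure doubles as a list of what to fix.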

Pricing Model

The core dbt tool is available for free under an open-source license.

Pros

- Easy-to-use, user-friendly UI to manage data transformations.

- Support team collaboration and version control.

- Modular approach to managing and building data models.

Cons

- It is text-based with limited graphical user interface options.

- It relies on the data warehouse for performance.

Learn More: Is Your dbt Cloud Too Expensive? Find a Better Alternative Here!

IV. Best Data Visualization Tools

Businesses that handle large amounts of data often struggle to utilize the data effectively for decision-making. Data visualization tools eliminate this issue by turning data into charts, dashboards, and visual reports that are easy to understand.

Here are a few of the best data visualization tools you can choose in 2025:

1. Microsoft Power BI

Microsoft Power BI is a data visualization platform for businesses of all sizes. The platform helps turn raw data into interactive, easy-to-understand dashboards and reports with diverse templates. It offers rich charts, graphs, etc., to help its users spot trends, track performance, and make smart decisions.

Designed for both analysts and business users, Power BI connects to hundreds of data sources. It is also easy to use as it allows drag-and-drop creation of visuals.

Features

- Interactive dashboards: Build dynamic visuals that respond to filters and user input to drive insights faster and as needed.

- Diverse visualizations: Ensure tailored reporting as preferred by teams with charts, maps, gauges, and custom visuals.

- AI-powered insights: Use built-in AI tools to detect trends, anomalies, and predictive patterns for faster decision making.

Pricing Model

Power BI offers a free trial. It mainly has three pricing plans:

- Power BI Embedded

- Power BI Pro

- Power BI Premium Per User

Know more about Power BI pricing plans.

Pros

- Beginner-friendly with intuitive UI with a drag-and-drop feature.

- Integrate with various data sources and Microsoft products.

- Offers numerous built-in or out-of-the-box visualizations.

Cons

- Advanced features and sharing need subscriptions.

- Large datasets or complex models may cause performance issues.

Learn More: Discover the 4 Best Microsoft Business Intelligence Platforms in 2025

2. Tableau

Tableau is a data visualization tool for transforming data into clear, interactive stories. It helps teams explore data, uncover trends, and communicate insights using compelling dashboards and charts. With its drag-and-drop interface, Tableau simplifies creating reports for technical and non-technical users.

The platform can connect with diverse data sources and offer real-time updates. It also offers collaborative analytics across diverse teams and departments.

Features

- Interactive dashboards: Create visuals that allow users to drill down and explore data dynamically for granular details.

- Real-time data connectivity: Connect to live sources to keep dashboards up to date to ensure decisions are based on the latest data.

- Advanced analytics integration: Incorporate R, Python, and statistical models for deeper insights and data-driven decision-making.

Pricing Model

Tableau’s pricing plans are mentioned below:

- Tableau

- Enterprise

- Tableau+

You can explore the custom pricing plans of Tableau to learn more.

Pros

- Friendly UI with drag-and-drop feature, accessible to all users.

- Real-time data analysis and updates for timely insights.

- Collaborate with team members for work or review.

Cons

- Performance issues with very large datasets.

- Advanced features and customizations need training.

Learn More: How to Build Tableau Reports: a Complete Guide

Key Factors to Consider When Choosing Data Engineering Tools

There are several key considerations you should think about when deciding on the right data engineering tools for your business.

- Scalability: Check that the tool can scale with increasing data volumes and support long-term growth without performance degradation.

- Integration: The tool should integrate with your existing data infrastructure and other systems without disruption.

- Automation: Choose tools that automate repetitive tasks such as data ingestion, transformation, and error handling to improve efficiency and reduce manual work.

- Security and compliance: Check whether the tool meets industry standards for data security and complies with regulations like GDPR and HIPAA.

Advantages of Using Data Engineering Tools

Now that you have an idea of the top 10 data engineering tools that are widely used, let us discuss some of the key advantages of using these engineering tools.

- Enhanced Data Processing: These tools improve the quality and organization of your data, which enables more accurate analysis and reporting and, in turn, better decisions and planning.

- Cost Reduction: Data processing and storage optimization reduces not only the infrastructure cost but also operational costs for you. In particular, cloud-based tools have cost-friendly pricing.

- Increased Efficiency: Data engineering tools make the process of collecting, storing, and organizing data effortless, therefore reducing manual intervention and enhancing efficiency.

- Better Data Management: Using a data catalog with your data engineering tools can help organize and manage data assets more effectively, providing a single source of truth. You can use tools like AWS Glue Data Catalog.

Learn More: Why the Next Generation of Data Engineers Will Work With AI, Not Fight It?

Hevo Helps Transform Data with Zero Maintenance

Data engineering tools are pivotal in managing the vast and complex data landscapes of modern organizations. They streamline the process of collecting, transforming, and storing data. This is vital to ensure the data is accurate, accessible, and ready for analysis.

And whether you use tools for data warehousing, ETL processes, real-time data processing, or data integration, these solutions help drive efficient data operations and support data-driven decision-making.

However, you need to choose the right ETL tool as it is where your journey with data starts. That’s why it is essential to have a reliable, easy, and robust data migration tool in your tech stack.

This is where Hevo fits the bill perfectly with:

- A fully managed, no-code platform that allows quick setup of real-time and batch data pipelines.

- 150+ data sources and various cloud data warehouses for quick data migration and transformation.

- Automatic schema detection and mapping to cut manual efforts, reduce errors, and save time.

- Flexible data transformations using Python scripts and drag-and-drop tools for efficient data preparation.

- Efficient monitoring, error handling, and 24/7 support for reliable, cost-effective data migration.

Are you ready to join 2000+ teams that have reduced their ETL platform cost by 85%, like ThoughtSpot?

Start with a 14-day free trial without a credit card or request a free demo now.

Conclusion

You can unlock valuable insights, enhance performance, and maintain a competitive edge by leveraging the right combination of tools. As technology evolves, staying updated with the latest tools and their capabilities is crucial for optimizing your data infrastructure and harnessing the full potential of your data assets.

FAQs

1. Do data engineers use ETL tools?

Yes, data engineers frequently use ETL (Extract, Transform, Load) tools as a key part of their work.

2. How is SQL used in data engineering?

SQL (Structured Query Language) is a fundamental tool in data engineering, used for a variety of critical tasks involving the management and manipulation of relational databases.

3. Is Tableau an ETL tool?

No, Tableau is not an ETL tool. Tableau is primarily a data visualization and business intelligence (BI) platform that focuses on creating interactive and insightful visualizations from data.