Easily move your data from Pardot to BigQuery to enhance your analytics capabilities. With Hevo's intuitive pipeline setup, data flows in real time.

Pardot is a top-rated marketing tool that helps businesses automate tasks such as email marketing, targeted marketing campaigns, lead management, and more. It also tracks customer behavior, which helps businesses improve their digital marketing campaigns.

Companies can store all of this Pardot marketing campaign data in a centralized repository like Google BigQuery, where it can be analyzed with BI tools such as Google Data Studio, Power BI, Tableau, and more to gain meaningful insights. You can connect Pardot to Google BigQuery using third-party ETL (Extract, Transform, and Load) tools or standard APIs.

This article guides you through connecting Pardot to BigQuery using two different methods.


What is Pardot?

Founded in 2007 and now part of Salesforce, Pardot is a Software-as-a-Service (SaaS) marketing automation platform. It helps marketing and sales teams create, manage, and run online marketing campaigns that drive sales. Pardot helps marketers identify potential customers and engage prospects at the right time and in the right way.

Pardot assists businesses with tasks such as lead management, email automation, ROI tracking, targeted email campaigns, and more. Pardot syncs with Salesforce's CRM (Customer Relationship Management) software, and changes users make in Pardot are reflected in Salesforce within 10 minutes. To give users access to Pardot, you need to configure the Pardot Lightning app, which lets businesses bring their sales and marketing teams together on a single platform.

Key Features of Pardot

- Dynamic Content: The dynamic content feature in Pardot allows businesses to tailor content for each market segment. Businesses can design various iterations of forms, landing pages, emails, and more depending on user engagement.

- Salesforce User Sync: With Salesforce User Sync, administrators can manage Pardot users and their roles from Salesforce instead of maintaining them separately in each system.

- B2B Marketing Analytics: Businesses use ROI metrics to measure the effectiveness of marketing campaigns. The B2B Marketing Analytics feature in Pardot combines sales and marketing data to show how campaigns are performing.

What is Google BigQuery?

Introduced in 2010, Google BigQuery is a highly scalable, serverless data warehouse that requires no infrastructure management. It can store and analyze petabytes of data quickly, and it uses standard SQL queries to obtain answers from very large datasets. BigQuery uses columnar storage, which provides high query performance and high data compression.

BigQuery enables developers and data scientists to work with client libraries in several programming languages, such as C#, C++, Java, Python, Go, JavaScript, and more. They can also use the BigQuery API to load, transform, and manage data.

Key Features of Google BigQuery

- BigQuery BI Engine: BI Engine is an in-memory analysis service in BigQuery that lets enterprises query large volumes of data with sub-second response times and high concurrency.

- Machine Learning: With BigQuery ML, businesses can create machine learning models using standard SQL queries. Supported model types include Linear Regression, Binary Logistic Regression, Multiclass Logistic Regression, K-means Clustering, and more.

- User Friendly: Storing and analyzing data in BigQuery is straightforward. BigQuery has an intuitive user interface that lets organizations set up a cloud data warehouse without provisioning clusters or choosing storage sizes and encryption settings.

Why Integrate Pardot to BigQuery?

- Centralize all customer data and interactions from Pardot for a comprehensive view.

- Analyze Pardot data alongside user behavior and product demand to better understand customers and revenue streams.

- Eliminate manual work by integrating Pardot data with BigQuery for seamless data management.

- Combine Pardot data with customer, marketing, and service data in BigQuery to uncover valuable business insights.

- Leverage these insights to identify better business opportunities and enhance decision-making.

Connecting Pardot to BigQuery

Method 1: Using Hevo to Set Up Pardot to BigQuery

Hevo makes migrating your data from Pardot to BigQuery simple, preparing it for better analytics. Effortlessly automate data ingestion from over 150 sources, including Pardot, without writing a single line of code.

Method 2: Using Custom Code to Move Data from Pardot to BigQuery

In this method, you manually connect the two systems and move data with CSV files. However, this method takes more time and requires technical expertise, especially when working with APIs.

Method 1: Using Hevo to Set Up Pardot to BigQuery

With Hevo, you can migrate data from Pardot to BigQuery in the following 2 steps:

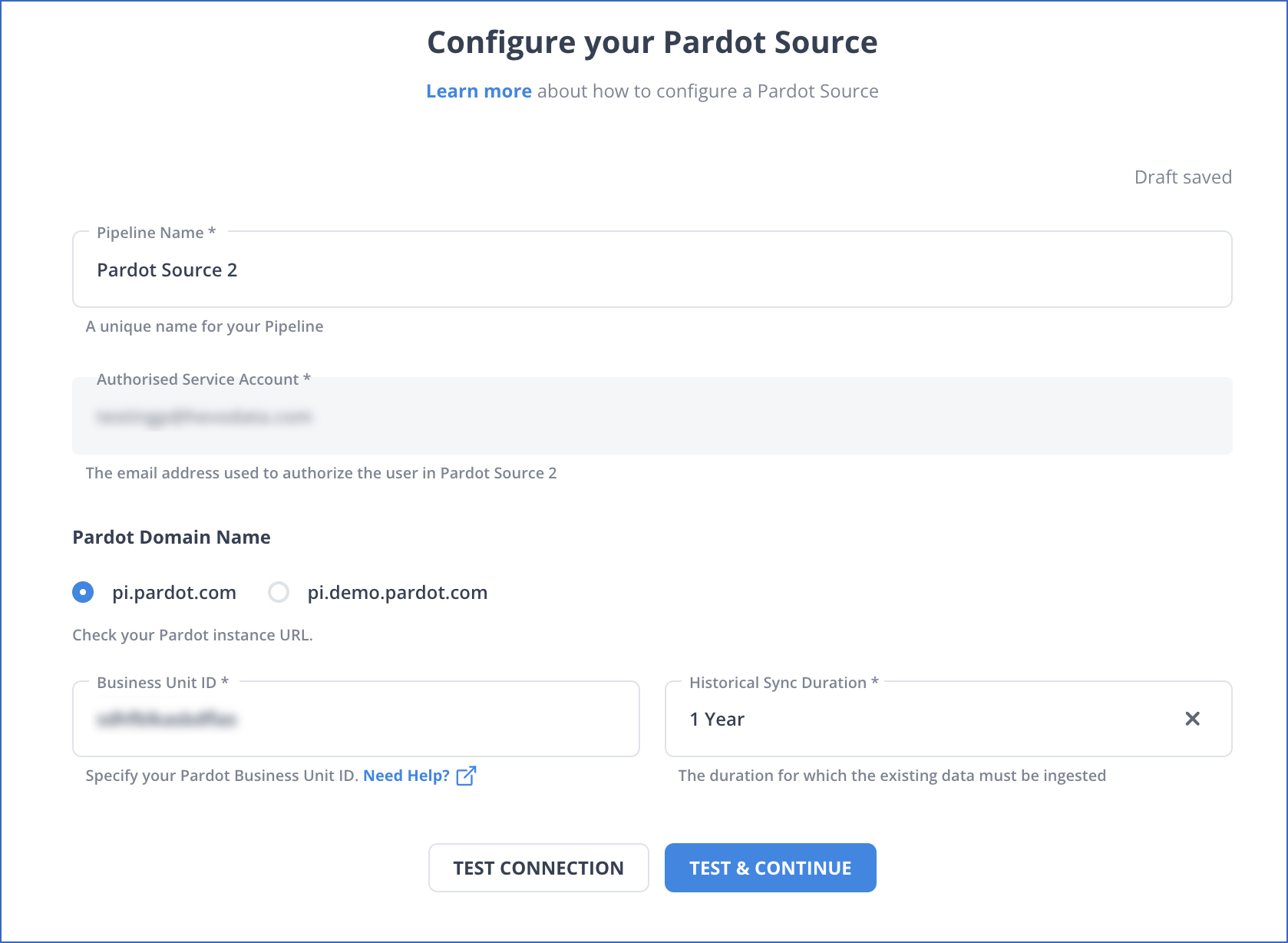

- Step 1: Select PIPELINES → + CREATE → Pardot. Add your account and select your Salesforce environment, i.e., Production or Sandbox. You will then be redirected to Salesforce's login page. Once logged in, fill in the required connection details.

Click on TEST & CONTINUE to proceed to the next step.

| Note: I have used a Production Account to connect Hevo to Salesforce Pardot. For additional details, visit our Pardot documentation page. |

- Step 2: Select BigQuery from the Destinations page and complete your required details. Next, click on SAVE & CONTINUE.

And your pipeline is now set!

Method 2: Using Custom Code to Move Data from Pardot to BigQuery

This method of connecting Pardot to BigQuery takes a more technical, hands-on approach: you export Pardot data as CSV files and then load those files into BigQuery, where the data can be analyzed in depth with BI tools to support better data-driven decisions.

Exporting Pardot Data

These steps assume you are signed in to your Pardot account. Follow the steps below to export Pardot data.

- Navigate to the prospects list:

Lightning: Prospects > Pardot Prospects

Classic: Prospects > Prospects List

- Click Tools and then select the CSV Export option.

- Give the export a name.

- Select Express or Full as the export type. If you have a large number of prospects, Express is the recommended export type.

- Click on Export.

- Once your export is ready, you will receive an email.

- To export marketing assets and sync errors, navigate to the relevant page and follow the same steps.

For example:

- To export prospect data from sent emails, navigate to Pardot Email > Sent Emails > Tools.

- To export sync errors, navigate to Pardot Settings > Connectors, click the CRM connector's cog icon, and select Sync Errors.
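Before loading, it can help to clean up the header row of the exported CSV, because BigQuery column names are more restricted than Pardot's display labels. The Python sketch below assumes the export is a UTF-8 CSV whose first row holds the column headers; labels such as "First Name" are illustrative, not a documented Pardot schema. It maps each header to a conservative BigQuery-safe name (lowercase letters, digits, and underscores, not starting with a digit, at most 300 characters) and de-duplicates collisions. The function names are hypothetical helpers, not part of any Pardot or BigQuery tool.

```python
import csv
import io
import re

MAX_COLUMN_NAME_LENGTH = 300  # BigQuery's column-name length limit


def to_bigquery_column(header: str) -> str:
    """Map a CSV header such as 'First Name' to a conservative column name."""
    # Replace every run of characters outside [0-9A-Za-z_] with one underscore.
    name = re.sub(r"[^0-9A-Za-z_]+", "_", header.strip()).strip("_").lower()
    if not name:
        name = "column"  # hypothetical fallback for empty or symbol-only headers
    if name[0].isdigit():
        name = "_" + name  # classic BigQuery naming rules disallow a leading digit
    return name[:MAX_COLUMN_NAME_LENGTH]


def normalize_headers(headers: list[str]) -> list[str]:
    """Convert all headers and append _2, _3, ... to any duplicate names."""
    used: set[str] = set()
    result: list[str] = []
    for header in headers:
        base = to_bigquery_column(header)
        name, suffix = base, 2
        while name in used:
            name = f"{base}_{suffix}"
            suffix += 1
        used.add(name)
        result.append(name)
    return result


def rewrite_export(src, dst) -> None:
    """Copy a CSV from src to dst, rewriting only the header row."""
    reader = csv.reader(src)
    writer = csv.writer(dst, lineterminator="\n")
    header = next(reader, None)
    if header is None:
        return  # empty file: nothing to rewrite
    writer.writerow(normalize_headers(header))
    writer.writerows(reader)  # data rows are copied unchanged


if __name__ == "__main__":
    sample = io.StringIO("First Name,Email,Email\nAda,ada@example.com,ada@work.example\n")
    out = io.StringIO()
    rewrite_export(sample, out)
    print(out.getvalue(), end="")
```

For real files, open both the export and the output file with `newline=""`, as the Python `csv` module documentation recommends, and pass the file objects to `rewrite_export`.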

Importing Data into BigQuery

With BigQuery, you can load a CSV file into a new table or partition, or append to or overwrite an existing table or partition.

When the data is loaded into BigQuery, it is converted into Capacitor, BigQuery's columnar storage format.
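If you load through the REST API's jobs.insert method instead of the console, the append-or-overwrite choice corresponds to the load job's `writeDisposition` field: `WRITE_APPEND` or `WRITE_TRUNCATE`, with `WRITE_EMPTY` to refuse writing into a table that already has data. The Python sketch below only assembles the JSON request body for such a load job; authenticating and sending it to the jobs.insert endpoint are out of scope. The project, dataset, table, and bucket names are hypothetical, and `autodetect` plus `skipLeadingRows: 1` assume a CSV with a header row.

```python
import json

# Friendly names for the three BigQuery write dispositions.
WRITE_DISPOSITIONS = {
    "append": "WRITE_APPEND",       # add rows to existing data
    "overwrite": "WRITE_TRUNCATE",  # replace existing data
    "empty_only": "WRITE_EMPTY",    # fail if the destination already has data
}


def build_load_job(project_id: str, dataset_id: str, table_id: str,
                   source_uri: str, mode: str = "append") -> dict:
    """Return a jobs.insert request body that loads one CSV file from Cloud Storage."""
    if mode not in WRITE_DISPOSITIONS:
        raise ValueError(f"unknown mode {mode!r}; expected one of {sorted(WRITE_DISPOSITIONS)}")
    return {
        "configuration": {
            "load": {
                "sourceUris": [source_uri],
                "sourceFormat": "CSV",
                "skipLeadingRows": 1,  # skip the header row of the export
                "autodetect": True,    # let BigQuery infer the schema
                "createDisposition": "CREATE_IF_NEEDED",
                "writeDisposition": WRITE_DISPOSITIONS[mode],
                "destinationTable": {
                    "projectId": project_id,
                    "datasetId": dataset_id,
                    "tableId": table_id,
                },
            }
        }
    }


if __name__ == "__main__":
    body = build_load_job("my-project", "pardot", "prospects",
                          "gs://my-bucket/pardot/prospects.csv", mode="overwrite")
    print(json.dumps(body, indent=2))
```

To target a single partition of a date-partitioned table, the `tableId` can carry a partition decorator such as `prospects$20240101`.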

Make sure you have the following IAM permissions before loading the CSV file into BigQuery.

- Permissions to load data into BigQuery:

bigquery.tables.create

bigquery.tables.updateData

bigquery.tables.update

bigquery.jobs.create

- Predefined IAM roles that grant these permissions:

roles/bigquery.dataEditor

roles/bigquery.dataOwner

roles/bigquery.admin (includes the bigquery.jobs.create permission)

roles/bigquery.user (includes the bigquery.jobs.create permission)

roles/bigquery.jobUser (includes the bigquery.jobs.create permission)

- Permissions to load data from Cloud Storage:

storage.objects.get

storage.objects.list

You can load the CSV file into a BigQuery table using any of the following:

- The Cloud Console.

- The bq command-line tool’s bq load command.

- Calling the jobs.insert API method.

- Client libraries.
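The bq load option from the list above can be scripted. The Python sketch below only assembles the argument list for a CSV load, so it runs without the bq tool installed; passing the list to `subprocess.run` would execute it on a machine where the Google Cloud CLI is installed and authenticated. The flags used (`--location`, `--source_format`, `--skip_leading_rows`, `--autodetect`, `--replace`, `--noreplace`) are standard bq options, while the dataset, table, bucket, and schema-file names are hypothetical.

```python
import shlex
from typing import Optional


def bq_load_args(table: str, source: str, schema_file: Optional[str] = None,
                 overwrite: bool = False, location: Optional[str] = None) -> list[str]:
    """Build a `bq load` command for a CSV file with one header row."""
    args = ["bq"]
    if location:
        args.append(f"--location={location}")  # global flag, goes before the command
    args += ["load", "--source_format=CSV", "--skip_leading_rows=1"]
    # --replace overwrites the table's data; --noreplace appends to it.
    args.append("--replace" if overwrite else "--noreplace")
    if schema_file is None:
        args.append("--autodetect")  # no explicit schema: let BigQuery infer one
    args += [table, source]  # DATASET.TABLE, then a gs:// URI or local path
    if schema_file is not None:
        args.append(schema_file)  # path to a JSON schema file
    return args


if __name__ == "__main__":
    cmd = bq_load_args("pardot.prospects", "gs://my-bucket/prospects.csv")
    print(shlex.join(cmd))
    # → bq load --source_format=CSV --skip_leading_rows=1 --noreplace --autodetect pardot.prospects gs://my-bucket/prospects.csv
    # To actually run it: subprocess.run(cmd, check=True)
```

Building a list instead of a single string avoids shell-quoting problems with bucket paths; `shlex.join` is used only to print a copy-pasteable version.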

Follow these steps to load the CSV file into a BigQuery table using the Cloud Console.

- Go to the BigQuery page in the Cloud Console.

- In the Explorer pane, expand your project and select a dataset.

- In the Dataset info section, click Create table.

- In the Create table panel, go to the Source section and select Google Cloud Storage in the Create table from list. Then do the following:

- Select the file from the Google Cloud Storage bucket or enter the Cloud Storage URI.

- For File format, select CSV.

Specify the following details in the Destination section.

- For Dataset, select the dataset in which you want to create the table.

- In the Table field, enter the name of the table you are creating.

- Verify that Table type is set to Native table.

- In the Schema section, either select Auto detect to enable schema auto-detection, or enter the schema definition manually in one of the following ways:

- Click Edit as text and paste the schema as a JSON array. You can write this array the same way you would when creating a JSON schema file.

To view the schema of an existing table in JSON format, run the following command:

bq show --format=prettyjson dataset.table

- Click Add field and enter the table schema field by field, specifying each field's name, type, and mode.

Optionally, click Advanced options to adjust additional load settings, then click Create table to create the table and load the data.
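If you choose Edit as text, or want to pass a schema file to bq load, the schema is a JSON array with one object per column giving its name, type, and mode, the same shape as the schema fields in the bq show output. The Python sketch below generates such an array from a list of column names, defaulting every column to a nullable STRING and accepting per-column type overrides. The column names and types in the example are hypothetical; check them against your actual Pardot export, and make sure the names are already valid BigQuery column names.

```python
import json
from typing import Optional

# A subset of the type names BigQuery accepts in JSON schema files.
KNOWN_TYPES = {"STRING", "INTEGER", "INT64", "FLOAT", "FLOAT64", "NUMERIC",
               "BOOLEAN", "BOOL", "DATE", "DATETIME", "TIME", "TIMESTAMP"}


def schema_from_headers(headers: list[str],
                        types: Optional[dict[str, str]] = None) -> list[dict]:
    """Return a BigQuery JSON schema: nullable STRING unless a type is given."""
    types = types or {}
    unknown = set(types) - set(headers)
    if unknown:
        raise ValueError(f"type overrides for missing columns: {sorted(unknown)}")
    schema = []
    for name in headers:
        field_type = types.get(name, "STRING").upper()
        if field_type not in KNOWN_TYPES:
            raise ValueError(f"unsupported type {field_type!r} for column {name!r}")
        schema.append({"name": name, "type": field_type, "mode": "NULLABLE"})
    return schema


if __name__ == "__main__":
    schema = schema_from_headers(
        ["email", "score", "created_at"],
        types={"score": "INTEGER", "created_at": "TIMESTAMP"},
    )
    # Paste this text into "Edit as text", or save it as schema.json for bq load.
    print(json.dumps(schema, indent=2))
```

Defaulting to STRING is a deliberately cautious choice: every CSV value loads as text, and you can tighten individual columns to numeric or timestamp types once you have confirmed their format.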

Limitations of Manually Connecting Pardot to BigQuery

Businesses can connect Pardot to BigQuery using standard APIs or manual processes. With manual processes, businesses can export data from Pardot and load it into BigQuery without much setup. Although manual processes are manageable, they cannot deliver real-time data. With standard APIs, on the other hand, you need a strong technical team to build and maintain the connection. To eliminate such issues, businesses can connect Pardot to BigQuery using third-party ETL tools like Hevo, which automate pipelines between Pardot and Google BigQuery.


Conclusion

In this article, you learned how to connect Pardot to BigQuery. Pardot helps businesses route leads to sales, create automated marketing campaigns, analyze prospect activity, and more. Companies can move this Pardot data into BigQuery, where BI tools can be used to gain meaningful insights and make better decisions.

FAQ on Pardot to BigQuery Integration

How can I connect to BigQuery?

You can connect to BigQuery using these three methods:

– Using Google Cloud Console

– Using BigQuery API

– Using Third-Party Tools like Hevo.

How to connect BigQuery to Salesforce?

You can connect BigQuery to Salesforce using the following methods:

– Using Google Data Connector for Salesforce

– Using automated platforms like Hevo.

Does Pardot integrate with Google Analytics?

Yes. Pardot can integrate with Google Analytics. Pardot's Google Analytics connector captures Google Analytics UTM parameters, such as source, medium, and campaign, on prospect records, so you can see which campaigns brought each prospect in.