Key Takeaways

- ELT tools load raw data into your cloud data warehouse first and transform it there, making it faster, more scalable, and more flexible than traditional ETL pipelines.

- The best ELT tools for 2026 include Hevo Data, Fivetran, Airbyte, Informatica, Matillion, and Talend, each suited to different team sizes, budgets, and technical proficiencies.

- Key factors to evaluate when choosing an ELT platform include connector availability, transformation support, pricing model, ease of use, and compliance standards.

- No-code ELT platforms like Hevo Data and Skyvia are ideal for teams that want to move data without heavy engineering effort, while tools like Airbyte and Matillion offer deeper customization for technical teams.

- Real-time data pipeline capabilities, automated schema management, and built-in monitoring are must-have features for production-grade ELT workflows.

Modern analytics stacks depend on scalable data pipelines that can handle high-volume ingestion, schema changes, near real-time syncs, and cloud-native transformations without constant engineering maintenance. As data volumes increase across SaaS platforms, databases, event streams, and APIs, teams need ELT tools that simplify data management while maintaining pipeline reliability and performance.

The right ELT platform helps organizations centralize raw data in cloud warehouses, automate transformation workflows, and reduce operational overhead across the entire data pipeline. Features such as automated schema evolution, CDC support, pipeline observability, orchestration, and transformation compatibility now play a major role in platform selection.

However, not every ELT tool fits every architecture. Some platforms focus on no-code pipeline automation and rapid deployment, while others provide deeper customization, open-source flexibility, or enterprise-grade governance capabilities.

Here’s what this blog will cover:

- A curated list of the 10 best ELT tools to watch in 2026

- The difference between ETL and ELT platforms

- What factors matter while picking the right ELT solution

Quick Summary of the Top ELT Tools

| Feature | Hevo Data | Fivetran | Airbyte | Informatica | Matillion | Talend |
| --- | --- | --- | --- | --- | --- | --- |
| Reviews | 4.5 (250+ reviews) | 4.2 (400+ reviews) | 4.5 (50+ reviews) | 4.4 (80+ reviews) | 4.4 (80+ reviews) | 4.3 (100+ reviews) |
| Pricing | Usage-based pricing | MAR-based pricing | Volume/capacity-based pricing | Consumption-based pricing | Consumption-based pricing | Capacity-based pricing |
| Free Plan | | | Open source | | | |
| Free Trial | 14-day free trial | 14-day free trial | 14-day free trial | 30-day free trial | 14-day free trial | |
| UI Type | No-code | No-code | Low-code + CLI | Low-code GUI | Low-code GUI | Low-code + code |
| Connector Library | 150+ sources | 700+ sources | 550+ sources | 100+ connectors | 100+ connectors | 900+ components |
| Custom Connectors | Available via SDK | Limited | Extensive support | Limited | Available (Python) | Available |
| Data Destinations | Warehouses, DBs, SaaS | Warehouses, DBs | Warehouses, DBs | Broad, incl. legacy | Cloud warehouses | Cloud, on-prem, hybrid |
| Transformation Support | Pre & Post-load (dbt) | Post-load (dbt Cloud) | Post-load (dbt/custom) | Pre & Post-load | SQL in-warehouse | Pre & Post-load |
| Open Source | | | | | | |
| Ease of Use | Very easy | Easy setup | Tech skills needed | Steep learning | Moderate learning | Steep for beginners |
| Data Volume Handling | Real-time capable | High volume | Cloud optimized | Enterprise-grade | Scalable | Scalable |
| Support Model | 24x7 live support | Tiered (chat/email) | Community + paid tiers | Enterprise support | Enterprise support | Enterprise + community |

Looking for the best ELT tools to connect your data sources? Rest assured, Hevo’s no-code platform helps streamline your ETL process. Try Hevo and equip your team to:

- Integrate data from 150+ sources (60+ free sources).

- Utilize drag-and-drop and custom Python script features to transform your data.

- Rely on a risk management and security framework for cloud-based systems, with SOC 2 compliance.

Try Hevo and discover why 2000+ customers have chosen Hevo to upgrade to a modern data stack.

10 Best ELT Tools for 2026

1. Hevo Data

- G2 rating: 4.4/5 (276)

- Gartner rating: 4.4/5 (3)

- Capterra rating: 4.7/5 (110)

Hevo Data follows a no-code approach to ELT. Unlike traditional ETL tools that require complex setup and maintenance, Hevo is fully automated, allowing you to integrate data from 150+ sources into your preferred data warehouse with minimal effort. Whether you’re working with SaaS apps like Salesforce, databases like PostgreSQL, or even real-time event streams, Hevo ensures zero data loss and minimal latency.

One of the biggest pain points in data engineering is managing schema changes and pipeline failures. With Hevo, schema drift is automatically handled, and errors are flagged with detailed logs, so you don’t have to spend hours troubleshooting. The tool also provides real-time monitoring and alerts, giving you full visibility into your data pipelines.
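To make the idea of automatic schema drift handling concrete, here is a minimal sketch in Python. It is not how Hevo implements the feature; it simply assumes an in-memory SQLite database as a stand-in warehouse, and the function name `load_with_schema_drift` and the `users` table are made up for illustration. When an incoming record carries a field the destination table doesn’t have yet, the loader widens the table instead of failing.

```python
import sqlite3

def load_with_schema_drift(conn, table, records):
    """Insert dict records, adding any columns the table does not have yet.

    Hypothetical helper for illustration; identifiers are assumed trusted.
    """
    cur = conn.cursor()
    # Columns currently present in the destination table.
    existing = {row[1] for row in cur.execute(f'PRAGMA table_info("{table}")')}
    for record in records:
        for column in record:
            if column not in existing:
                # New source field: widen the table instead of failing the load.
                cur.execute(f'ALTER TABLE "{table}" ADD COLUMN "{column}"')
                existing.add(column)
        cols = ", ".join(f'"{c}"' for c in record)
        marks = ", ".join("?" for _ in record)
        cur.execute(
            f'INSERT INTO "{table}" ({cols}) VALUES ({marks})',
            list(record.values()),
        )
    conn.commit()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER, email TEXT)")
load_with_schema_drift(conn, "users", [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com", "plan": "pro"},  # "plan" is a new field
])
columns = [row[1] for row in conn.execute('PRAGMA table_info("users")')]
rows = conn.execute("SELECT id, email, plan FROM users ORDER BY id").fetchall()
```

Rows loaded before the new column appeared simply hold NULL for it, which is the usual trade-off of additive schema evolution.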

Another area where Hevo excels is its built-in data transformation layer. While many ELT tools leave transformation entirely to the warehouse, Hevo lets you pre-process data before loading, ensuring it’s structured and cleaned for analysis. This means you can run filtering, enrichment, or even custom SQL transformations before the data lands in your warehouse.

Key features

- No-Code, Fully Managed: Set up pipelines without writing a single line of code.

- 150+ Pre-Built Connectors: Covers databases, cloud storage, SaaS apps, and more.

- Real-Time Data Streaming: Ensures fresh data is always available.

- Automated Schema Management: No need to manually adjust schema changes.

- Data Transformation & Quality Checks: Helps clean and prep data before it reaches your warehouse.

- Scalability & Reliability: Handles millions of events effortlessly.

Pros

- Super easy to use: No-code setup makes it beginner-friendly.

- Reliable & real-time: Data moves without delays or failures.

- Minimal maintenance: Automated handling of schema changes and errors.

- Scalable: Handles high data volumes smoothly.

- Great support: Responsive customer service and a helpful knowledge base.

Cons

- Limited on-premise support: Primarily built for cloud-based integrations.

- Custom transformations require SQL knowledge: While it offers built-in transformations, advanced users might want more flexibility.

Pricing

Hevo offers a free tier with 1 million events per month, perfect for small-scale use or testing. You can go through Hevo’s pricing plan to understand which plan best suits your organization.

Final Verdict

If you want a no-fuss, reliable, and scalable ELT tool, Hevo Data is one of the best choices out there. It takes care of the heavy lifting, letting you focus on analyzing data rather than managing pipelines. It’s especially great for teams that don’t want to spend hours debugging or maintaining ETL scripts. I’d highly recommend it for businesses looking for a simple yet powerful ELT solution.

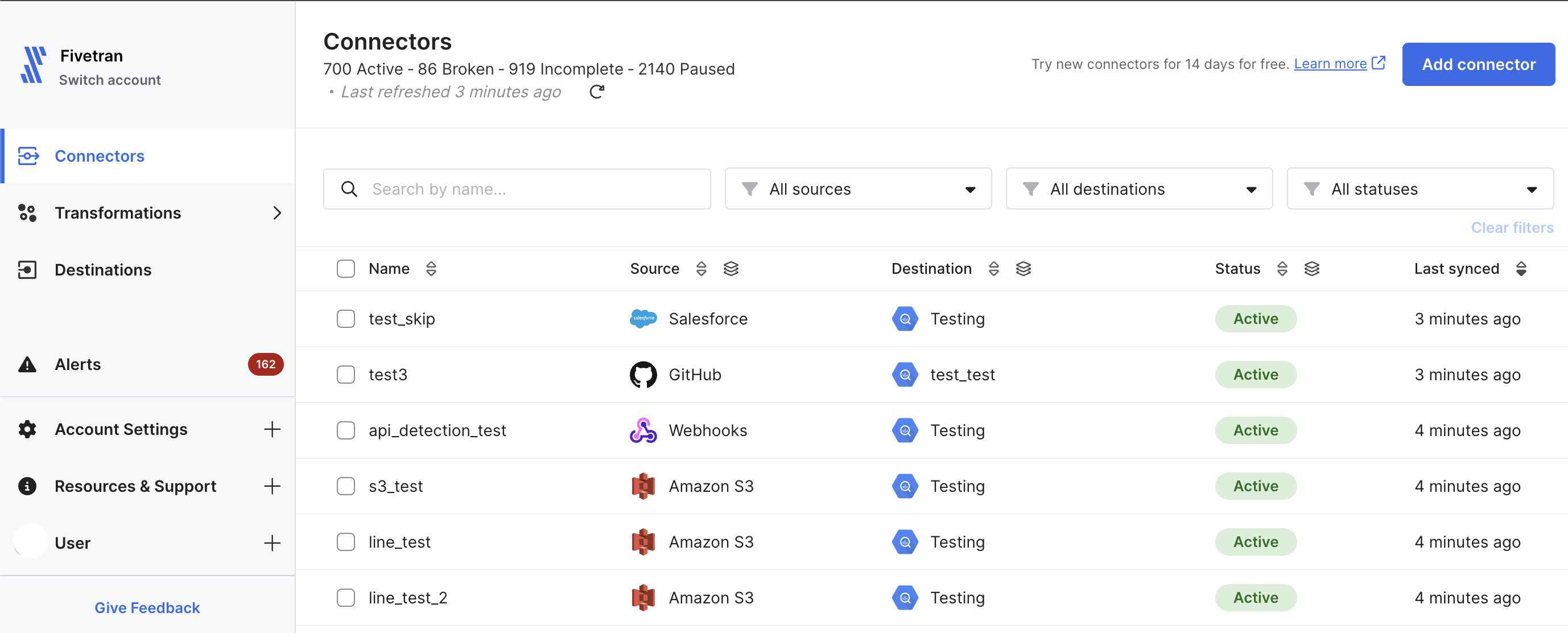

2. Fivetran

G2 Rating: 4.2/5 (447)

I’ve worked with Fivetran on multiple projects, and if there’s one thing I can say about it, it’s “set it and forget it.” Fivetran is a fully managed, enterprise-grade ELT tool designed to make data integration as hands-off as possible. Once you set up a pipeline, it automatically syncs data from your sources to your warehouse, handling schema changes, failures, and updates with minimal intervention.

Fivetran supports a massive library of connectors (700+), making it one of the best options for businesses with diverse data sources. The platform is highly reliable, offering incremental updates so that only new or modified data is loaded, which helps reduce warehouse costs.

However, while Fivetran is incredibly powerful, it’s also one of the more expensive options on the market. The pricing is based on Monthly Active Rows (MAR), meaning costs can quickly escalate as your data scales. That said, for businesses that need a robust, maintenance-free ELT solution, Fivetran delivers on its promise.

Key Features

- 700+ Pre-Built Connectors: Extensive support for databases, SaaS apps, and cloud storage.

- Automated Schema Management: Adapts to changes in source schemas without breaking pipelines.

- Incremental Data Syncs: Reduces data processing costs by syncing only new or updated records.

- Security & Compliance: SOC 2 Type II, GDPR, and HIPAA compliant.

- Transformations with dbt Integration: Supports post-load transformations using SQL and dbt.

- Enterprise-Grade Reliability: Offers 99.9% uptime with automatic failure recovery.
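The incremental sync idea from the feature list can be sketched in a few lines of Python. This is a generic high-water-mark pattern, not Fivetran’s actual sync engine; the `updated_at` column, the `incremental_sync` function, and the dictionary standing in for the warehouse are all assumptions made for the example.

```python
from datetime import datetime

def incremental_sync(source_rows, destination, last_synced_at):
    """Upsert only rows changed after last_synced_at; return the new cursor."""
    new_cursor = last_synced_at
    for row in source_rows:
        if row["updated_at"] > last_synced_at:
            destination[row["id"]] = row  # upsert by primary key
            new_cursor = max(new_cursor, row["updated_at"])
    return new_cursor

source = [
    {"id": 1, "name": "Ada", "updated_at": datetime(2026, 1, 1)},
    {"id": 2, "name": "Grace", "updated_at": datetime(2026, 1, 5)},
]
warehouse = {}

# First run: everything is newer than the starting cursor, so both rows load.
cursor = incremental_sync(source, warehouse, datetime(2025, 12, 31))

# Later, only row 2 changes at the source.
source[1] = {"id": 2, "name": "Grace H.", "updated_at": datetime(2026, 1, 9)}
untouched_row = warehouse[1]

# Second run: row 1 is skipped, row 2 is re-loaded.
cursor = incremental_sync(source, warehouse, cursor)
```

Because the second run touches a single row rather than the whole table, the warehouse does less work, which is where the cost saving comes from.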

Pros

- Extremely reliable: Pipelines rarely break, and schema changes are auto-handled.

- Massive connector library: One of the most comprehensive in the industry.

- Low maintenance: Once set up, it requires almost zero manual intervention.

- Scalable: Can handle high data volumes effortlessly.

- Strong security features: Ideal for businesses with strict compliance requirements.

Cons

- Expensive pricing model: Costs scale with Monthly Active Rows (MAR), which can add up quickly.

- Limited customizability: Minimal control over data extraction logic.

- No free plan: Only a 14-day trial, unlike some competitors offering free tiers.

Pricing

Fivetran follows a Monthly Active Rows (MAR) pricing model, meaning you pay based on how many unique rows are updated or inserted each month. There’s no free plan, but they do offer a 14-day free trial to test the platform. Pricing can be steep, especially for businesses with large datasets that change frequently.
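To see why MAR-based costs track how often your data changes rather than how much of it you store, here is a rough Python sketch of the counting rule described above. It only illustrates the "unique rows inserted or updated in a month" definition; Fivetran’s real accounting has more rules, and the event shape and the `monthly_active_rows` function are hypothetical.

```python
def monthly_active_rows(change_events):
    """Count distinct (table, primary key) pairs touched during the month."""
    return len({(event["table"], event["pk"]) for event in change_events})

events = [
    {"table": "orders", "pk": 1, "op": "insert"},
    {"table": "orders", "pk": 1, "op": "update"},  # same row, counted once
    {"table": "orders", "pk": 2, "op": "insert"},
    {"table": "users", "pk": 1, "op": "update"},
]
mar = monthly_active_rows(events)
```

Here four change events touch only three distinct rows, so repeated updates to the same row do not inflate the count, but a large table where most rows change every month will.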

Final Verdict

If you’re looking for a highly reliable, low-maintenance ELT solution, Fivetran is one of the best choices out there. It’s particularly well-suited for enterprise-level businesses that need a fully automated, hands-off approach to data integration. However, if you’re working with a limited budget or need fine-grained control over your pipelines, the cost and lack of customizability might be a dealbreaker. That said, if budget isn’t a concern, Fivetran is as close to a “set it and forget it” ELT tool as you can get.

3. Portable

G2 Rating: 4.9/5 (26)

I came across Portable when I needed an ELT tool that could handle long-tail SaaS connectors, the niche applications that most mainstream ELT tools don’t support. Unlike Hevo or Fivetran, which focus on widely used connectors, Portable specializes in custom-built data pipelines for hard-to-find integrations.

The best part? You don’t need to build or maintain these connectors yourself. If you need a connector that isn’t already in their library, Portable will build it for you at no extra cost. That’s a game-changer for businesses dealing with industry-specific tools that don’t have ready-made ELT solutions.

However, while Portable shines in connector coverage, it’s not as feature-rich as some of the bigger players. It doesn’t offer native transformations, so you’ll have to handle all data processing within your warehouse. Also, its real-time sync capabilities are limited compared to Hevo or Fivetran.

Key features

- Custom Connectors on Demand: If a connector doesn’t exist, Portable builds it for free.

- Long-Tail SaaS Support: Covers 500+ niche SaaS applications, many of which are unsupported elsewhere.

- Fully Managed Pipelines: No need to write or maintain API integrations.

- Warehouse-Agnostic: Works with Snowflake, BigQuery, Redshift, and others.

- Automated Schema Updates: Adjusts to source schema changes without breaking pipelines.

Pros

- Best for niche SaaS integrations: Covers connectors most other ELT tools ignore.

- No engineering effort required: Portable builds and maintains connectors for you.

- Simple pricing: No MAR-based model; pricing is transparent and predictable.

- Good customer support: They actively work with users to develop new connectors.

Cons

- No built-in transformations: All transformations need to be handled in the warehouse.

- Limited real-time capabilities: Sync frequencies may not be as fast as competitors.

- Smaller ecosystem: Lacks some of the enterprise-grade features of bigger tools like Fivetran.

Pricing

Portable offers four pricing tiers: the Starter plan at $290/month includes one scheduled data flow with daily syncs; the Scale plan at $1,790/month offers ten data flows with 15-minute syncs; the Pro plan at $2,490/month provides 25 data flows with 5-minute syncs; and the Enterprise plan offers custom pricing for over 25 data flows with near real-time syncs. All plans feature unlimited data volumes.

Final verdict

If your data stack includes niche SaaS applications that other ELT tools don’t support, Portable is a lifesaver. The ability to request new connectors at no additional cost is a huge win for businesses struggling with custom integrations. However, if you need real-time syncs or built-in transformations, you might find Portable a bit limited compared to Hevo or Fivetran.

4. Stitch

G2 rating: 4.4/5 (68)

When I first explored Stitch, I was drawn to its simplicity and focus on seamless data integration. It’s a cloud-based ELT platform that automates data extraction, loading, and replication across various sources and destinations. Stitch is built for businesses that want to move data into their warehouse without managing complex pipelines. While it doesn’t offer advanced transformation capabilities out of the box, it integrates well with tools like dbt for post-load transformations.

Key features

- Prebuilt Connectors – Stitch supports 130+ data sources, including databases, SaaS apps, and cloud storage.

- Automated Replication – Users can schedule syncs as frequently as every five minutes, ensuring near real-time updates.

- Scalability – Handles increasing data volumes efficiently, making it ideal for growing businesses.

- Security & Compliance – SOC 2 and HIPAA compliance ensure data security and regulatory adherence.

- Integration with dbt – While Stitch itself doesn’t perform complex transformations, it works well with dbt for post-load processing.

Pros

- Easy to Set Up – The no-code interface makes data pipeline setup quick and hassle-free.

- Wide Connector Support – Works with most popular data sources without requiring custom integrations.

- Flexible Pricing – Offers usage-based pricing, which can be cost-effective for smaller teams.

Cons

- Limited Transformations – Requires external tools for complex data transformation.

- Costs Increase with Usage – Pricing can rise significantly for high data volumes.

- Support Limitations – Users on standard plans may experience delays in customer support.

Pricing

Stitch’s Standard plan starts at $100 per month, covering 5 million rows of data with basic connectors. Costs increase with more data volume and premium connectors.

Final verdict

In my experience, Stitch is a solid choice for businesses that need a simple, reliable data pipeline solution without the complexity of managing ETL infrastructure. Its automation and prebuilt connectors make it a great fit for mid-sized businesses and teams looking for a hassle-free data movement tool. However, if your use case requires extensive transformations or predictable pricing, you might need to consider alternatives.

5. Estuary

G2 rating: 4.8/5 (31)

In my experience, Estuary stands out as a cutting-edge real-time data integration platform that simplifies the creation and management of data pipelines. Designed to handle both batch and streaming data, Estuary enables the building of robust ETL workflows. Its intuitive user interface makes it accessible for both technical and non-technical users, allowing teams to focus on deriving value from their data rather than grappling with complex configurations.

Key features

- Real-Time Data Integration: Estuary’s platform is purpose-built for real-time ETL and ELT data pipelines, enabling batch loading for analytics and streaming for operations and AI with millisecond latency.

- Extensive Connector Library: With support for over 75 data source connectors, Estuary facilitates seamless integration with various databases, SaaS applications, and cloud storage services.

- User-Friendly Interface: The platform’s intuitive design reduces the learning curve, making it accessible to users with varying technical expertise.

- Scalability: Estuary’s cloud-native architecture ensures efficient handling of increasing data volumes, accommodating the growth of your business.

- Security and Compliance: The platform offers enterprise-grade security features, ensuring that data is handled securely throughout the ETL process.

Pros

- Time Efficiency: Estuary saves development teams time by eliminating the need to learn the intricacies of multiple data destinations, allowing them to focus on core tasks.

- Comprehensive Support: Users have reported that Estuary provides fantastic support, enhancing the overall user experience.

- Flexible Deployment Options: Estuary offers both open-source and cloud deployment options, catering to different organizational needs and preferences.

Cons

- Pricing Considerations: The cost structure may be high for smaller organizations, potentially impacting budget allocations.

- Limited On-Premises Deployment: Estuary has limited on-premises deployment options, which may not align with the requirements of organizations with specific infrastructure preferences.

Pricing

Estuary offers a pay-as-you-go pricing model, charging $0.50 per GB of data moved each month, with the first six connector instances priced at $100 per month per connector instance, and additional connectors at $50 per month each (prorated). A free trial is available, providing new users with 30 days of access to all features, with no credit card required to start.
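As a back-of-the-envelope aid, the pricing described above can be turned into a small Python estimator. This is only a sketch based on the figures quoted in this section; it ignores proration and any discounts, and the function name `estimate_monthly_cost` is made up for the example.

```python
def estimate_monthly_cost(gb_moved, connector_instances):
    """Estimate a monthly bill from the rates quoted above (no proration)."""
    data_cost = 0.50 * gb_moved                         # $0.50 per GB moved
    first_tier = min(connector_instances, 6)            # first six at $100 each
    additional = max(connector_instances - 6, 0)        # the rest at $50 each
    return data_cost + 100 * first_tier + 50 * additional

# 100 GB moved through 8 connector instances:
# 50 (data) + 600 (first six) + 100 (two additional) = 750
cost = estimate_monthly_cost(gb_moved=100, connector_instances=8)
```

For small data volumes the connector fees dominate the bill, which is worth keeping in mind when comparing against row-based or event-based pricing.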

Final verdict

Estuary presents a robust solution for organizations seeking real-time data integration with minimal complexity. Its extensive connector library, user-friendly interface, and flexible deployment options make it a valuable tool for streamlining data workflows. However, potential users should carefully assess the pricing structure and deployment limitations to ensure alignment with their organizational needs.

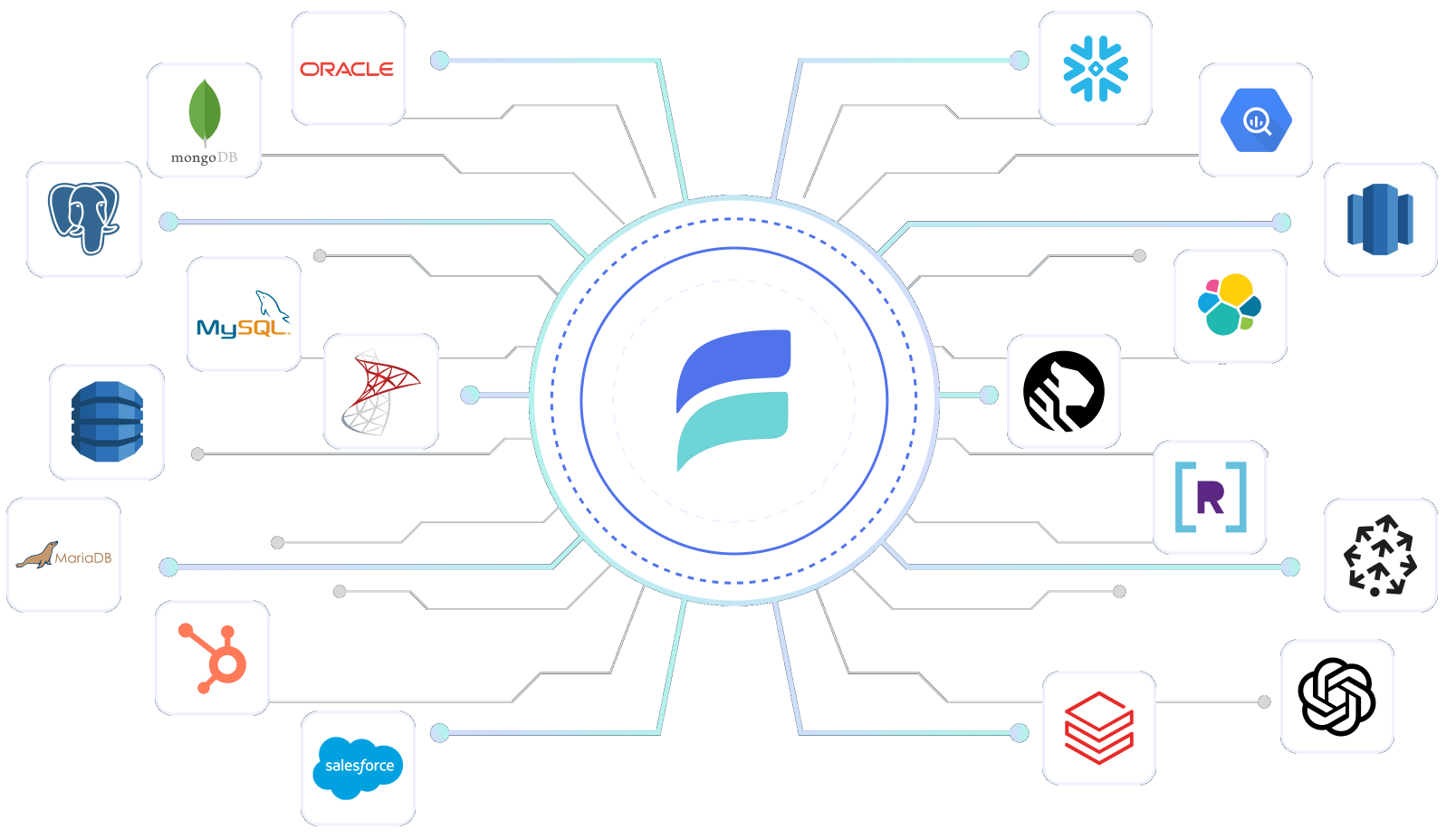

6. Airbyte

G2 Rating: 4.5/5 (75)

Gartner Rating: 4.6/5 (66)

From my experience, Airbyte is one of the most flexible ELT solutions available, especially for companies that want full control over their data integration processes. As an open-source platform, it allows users to move data from various sources to destinations like data warehouses, lakes, and databases. What sets Airbyte apart is its rapidly expanding connector library and its ability to let users build their own connectors with ease.

Unlike fully managed solutions like Fivetran, Airbyte offers a self-hosted version, giving engineering teams the ability to customize and optimize pipelines to their needs. At the same time, Airbyte Cloud provides a managed alternative for those who prefer a hassle-free experience. One thing I appreciate about Airbyte is its commitment to open-source collaboration, with a highly active community constantly improving connectors and fixing issues.

Key features

- Extensive Connector Library: Airbyte offers over 550 pre-built connectors, facilitating seamless integration with a wide array of data sources and destinations.

- Custom Connector Development: The platform’s open-source nature allows for the creation of custom connectors, providing flexibility to address unique data integration needs.

- User-Friendly Interface: Airbyte’s intuitive design simplifies the setup and management of data pipelines, making it accessible to users with varying technical expertise.

- Real-Time Data Replication: The platform supports both batch and real-time data replication, ensuring that data remains current across systems.

- Extensibility: Airbyte’s open-source nature allows for extensive customization and integration into existing workflows.

Pros

- Cost-Effective: Airbyte’s open-source model eliminates licensing fees, making it a budget-friendly choice for organizations.

- Flexibility: The ability to create custom connectors and transformations caters to specific business requirements.

- Active Community Support: A vibrant community contributes to continuous improvement and provides assistance through forums and shared resources.

Cons

- Technical Expertise Required: Setting up and maintaining Airbyte may require significant technical knowledge, potentially posing challenges for non-technical users.

- Stability Concerns: Some users have reported issues with certain connectors, indicating that the platform may still be maturing in terms of stability.

- Administration Complexity: Managing and configuring Airbyte can be complex, necessitating a learning curve for effective administration.

Pricing

Airbyte offers a free open-source version that can be deployed on your own infrastructure. For those preferring a managed solution, Airbyte Cloud operates on a volume-based pricing model, with a 14-day free trial available. Additionally, Team and Enterprise plans are capacity-based, providing scalable options to suit various organizational needs.

Final verdict

Airbyte stands out as a flexible and cost-effective data integration solution, particularly suitable for organizations with the technical resources to manage and customize the platform. Its extensive connector library and active community support are significant advantages. However, the need for technical expertise and potential stability issues with certain connectors should be considered when evaluating Airbyte for your data integration needs.

7. Matillion

G2 Rating: 4.4/5 (81)

Matillion is one of the most powerful ELT tools I’ve worked with, especially for cloud data warehouses like Snowflake, Redshift, and BigQuery. Unlike fully managed ELT solutions that focus on ease of use, Matillion takes a more developer-friendly approach, offering a visual, low-code environment for building complex transformation workflows. It’s designed to work directly within your data warehouse, ensuring that transformations happen at scale without unnecessary data movement.

One thing I really appreciate about Matillion is its orchestration capabilities. It allows me to build sophisticated workflows with dependencies, scheduling, and conditional logic, which is perfect for handling large-scale enterprise data pipelines. The UI is drag-and-drop, which makes development much faster compared to writing SQL or Python scripts manually.
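For readers who haven’t used an orchestrator, the core idea of dependencies plus conditional logic can be sketched in plain Python. This is not Matillion’s API; it uses the standard library’s `graphlib.TopologicalSorter` (Python 3.9+), and the task names and the `run_pipeline` function are hypothetical.

```python
from graphlib import TopologicalSorter

def run_pipeline(tasks, dependencies, should_run=lambda name: True):
    """Run tasks in dependency order, skipping any the condition rejects."""
    executed = []
    # static_order() yields each task only after all of its predecessors.
    for name in TopologicalSorter(dependencies).static_order():
        if should_run(name):
            tasks[name]()
            executed.append(name)
    return executed

log = []
tasks = {
    "extract_orders": lambda: log.append("extract_orders"),
    "load_orders": lambda: log.append("load_orders"),
    "build_report": lambda: log.append("build_report"),
}
# Each key maps a task to the set of tasks that must finish before it.
dependencies = {
    "load_orders": {"extract_orders"},
    "build_report": {"load_orders"},
}

order = run_pipeline(tasks, dependencies)
```

Passing something like `should_run=lambda name: name != "build_report"` skips the reporting step while still running the extract and load. Note that this sketch does not automatically skip tasks downstream of a skipped one, which a real orchestrator would typically let you configure.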

Key features

- Low-Code ELT: Drag-and-drop interface to build data transformation pipelines without heavy coding.

- Native Cloud Integration: Works seamlessly with Snowflake, Redshift, BigQuery, and Azure Synapse.

- Orchestration & Automation: Allows scheduling, dependencies, and conditional workflows.

- Scalable Transformations: Pushes processing to the cloud warehouse for high performance.

- Advanced Customization: Supports Python, SQL, and Bash scripting for complex transformations.

Pros

- Intuitive workflow design: Easy-to-use UI for data engineers and analysts.

- Strong cloud-native capabilities: Optimized for modern cloud data warehouses.

- Robust orchestration tools: Automates data movement and transformations effectively.

- Flexible customization: Supports scripting for advanced transformations.

Cons

- Requires a cloud data warehouse: No support for on-prem databases.

- Pricing can get expensive: Usage-based pricing may not be cost-effective for smaller teams.

- Limited free tier: Trial version has restrictions that may not be enough for thorough testing.

Pricing

Matillion follows a usage-based pricing model, billed hourly based on the instance size. They offer a 14-day free trial, but beyond that, pricing varies depending on cloud provider and resource consumption. Larger enterprises may need a custom pricing plan for extensive usage.

Final verdict

If your data infrastructure is fully in the cloud and you need a powerful, flexible ELT tool, Matillion is an excellent choice. Its visual pipeline builder, deep cloud integration, and robust orchestration features make it one of the best options for enterprise-scale transformations. However, if you’re working with on-premise databases or looking for a budget-friendly solution, Matillion may not be the best fit.

8. Skyvia

G2 Rating: 4.8/5 (291)

Skyvia is a cloud-based data integration platform that I’ve found to be particularly useful for teams that need a simple, no-code solution for ELT. Unlike some of the more complex tools I’ve worked with, Skyvia prioritizes ease of use, making it a great option for businesses that want to move data without heavy engineering effort. It offers ELT, ETL, and data backup solutions, but its real strength lies in its ability to connect cloud applications and databases seamlessly.

One of the things I really like about Skyvia is its intuitive, web-based UI. It doesn’t require coding, which means even non-technical users can set up integrations between CRMs, marketing tools, and data warehouses. The platform supports a wide range of connectors, including Salesforce, HubSpot, MySQL, PostgreSQL, Google BigQuery, and more. I also appreciate the data replication feature, which makes it easy to sync data across different platforms in real-time.

Key features

- No-Code Data Integration: Drag-and-drop interface to move data between apps, databases, and warehouses.

- Wide Connector Support: Integrates with Salesforce, HubSpot, Google BigQuery, MySQL, and more.

- Data Replication: Enables real-time data syncing across platforms.

- Automated Workflows: Supports scheduled data transfers and backups.

- Cloud-Based: Fully hosted solution with no infrastructure maintenance required.

Pros

- Beginner-friendly UI: No coding required, easy to set up.

- Affordable pricing: Free tier available, cost-effective for small teams.

- Good selection of connectors: Covers most cloud apps and databases.

- Data backup and recovery: Helps with disaster recovery and compliance.

Cons

- Limited transformation capabilities: Lacks advanced data processing options.

- Performance issues with large datasets: Not ideal for high-scale enterprise use.

- No real-time streaming: Works best for scheduled or batch data loads.

Pricing

Skyvia offers a free plan for basic data integrations, making it one of the most accessible ELT tools. Paid plans start at $79 per month for higher data volumes and additional features, with custom pricing available for enterprise users.

Final verdict

If you’re looking for an easy-to-use, cost-effective ELT solution for moving data between cloud apps and databases, Skyvia is a great choice. It’s not built for complex transformations or massive enterprise workloads, but for small to mid-sized businesses that need a straightforward, no-code data integration tool, it gets the job done.

See a detailed list of Skyvia alternatives

9. Talend

G2 rating: 4.3/5 (67)

Talend is one of the most powerful and versatile data integration platforms I’ve used. It stands out because it’s not just an ELT tool; it’s a full-fledged data management suite that supports data integration, quality, governance, and even application and API integration. Whether you need a simple ELT pipeline or a more complex data processing workflow, Talend provides a robust solution with plenty of flexibility.

A key milestone in Talend’s journey was its acquisition by Qlik in 2023. One thing I particularly like about Talend is its open-source roots. Talend Open Studio, the free version, is a great entry point for developers who want to build and manage data pipelines without committing to a paid plan right away. The enterprise version, Talend Data Fabric, goes much further by offering cloud-native capabilities, advanced security, and AI-powered data quality checks. It’s particularly useful for large organizations that need a scalable and secure solution.

Key features

- End-to-End Data Management: Covers ELT, data quality, governance, and API integration.

- Open-Source and Enterprise Versions: Free and paid options based on business needs.

- Cloud-Native Support: Runs on AWS, Azure, and Google Cloud.

- Strong Data Quality Tools: AI-powered deduplication, anomaly detection, and validation.

- Advanced Security & Compliance: Designed for enterprise-level governance.

Pros

- Comprehensive feature set: More than just ELT, includes governance and quality tools.

- Scalable for enterprises: Works well with large, complex data environments.

- Open-source version available: Talend Open Studio is great for testing and development.

- Cloud-native and on-premise options: Provides deployment flexibility.

Cons

- Steep learning curve: Not beginner-friendly, requires some technical expertise.

- Performance issues if not optimized: Large workloads may need careful tuning.

- Can be expensive: Enterprise plans are costly compared to lightweight ELT tools.

Pricing

Talend offers a free open-source version (Talend Open Studio) with limited features. The enterprise version, Talend Data Fabric, is priced on a custom-quote basis and can get expensive for large-scale deployments.

Final verdict

Talend is a powerhouse for data integration, especially for enterprises that need end-to-end data management and governance. It’s not the easiest tool to learn, but if you need a robust, scalable ELT solution with strong data quality features, it’s well worth considering. If you’re a small business or need something simpler, you might want to explore more user-friendly alternatives.

Discover top Talend alternatives in our latest blog.

10. Informatica

G2 Rating: 4.4/5 (88)

Informatica is one of the most enterprise-grade data integration tools I’ve worked with. It’s a heavyweight in the ETL/ELT space, offering a comprehensive suite of solutions for data management, governance, and analytics. If you’re dealing with massive amounts of data across multiple environments, whether cloud, on-premise, or hybrid, Informatica provides the flexibility and scalability you need.

What sets Informatica apart is its AI-powered automation through CLAIRE, its metadata-driven intelligence engine. This makes processes like data discovery, transformation, and quality management more efficient. Unlike many ELT tools that focus primarily on moving data from source to destination, Informatica goes much deeper with features like data masking, governance, and security, which are critical for industries dealing with sensitive data, such as finance and healthcare.

Key features

- AI-Driven Automation: CLAIRE AI optimizes data discovery and transformation.

- Comprehensive Data Management: Covers integration, governance, security, and quality.

- Supports Cloud & On-Premise: Works across hybrid environments.

- High Scalability: Handles massive enterprise workloads.

- Advanced Security Features: Includes data masking, data lineage, and compliance tools.

Pros

- Powerful enterprise-grade solution: Built for large-scale, complex data ecosystems.

- AI-powered automation: Helps optimize workflows and reduce manual effort.

- End-to-end data management: Goes beyond ELT with governance and security.

- Highly customizable: Supports diverse data needs with extensive configurations.

Cons

- Steep learning curve: Requires expertise to set up and manage effectively.

- High cost: Pricing is enterprise-focused and can be expensive for smaller teams.

- Complex UI: Not as intuitive as modern no-code ELT tools.

Pricing

Informatica offers custom pricing based on enterprise needs, with costs varying depending on usage, deployment type (cloud or on-premise), and required features. There’s no free version, but a free trial is available for some cloud-based solutions.

Final verdict

Informatica is a powerhouse for enterprises that need robust, AI-driven data integration and governance. It’s not beginner-friendly and comes at a premium, but if you have the resources and need deep security and automation features, it’s one of the most comprehensive solutions out there. If you’re after something simpler, you might want to explore more user-friendly ELT tools.

What is the Difference Between ETL and ELT Tools?

ETL and ELT are two common data integration methods. Both move data from source systems to a target data store, but they perform the steps in a different order and suit different data needs.

ETL stands for Extract, Transform, Load, and is the traditional approach to data integration. In ETL, data is pulled from sources such as databases, apps, or third-party systems. Next, the extracted data is cleaned, combined, and refined into a fit-for-use format on a separate processing server. Finally, it is loaded into a data warehouse for analysis. ETL works well with structured data and smaller datasets. As the volume grows, the process becomes slow and resource-heavy.

ELT stands for Extract, Load, Transform, and is a more modern, cloud-first approach to data integration. As in ETL, data is first extracted from various sources. But instead of being transformed in transit, it is loaded into the data warehouse in raw form and transformed there as and when needed. Because the raw data stays in the warehouse, the same dataset can be transformed multiple times for different purposes. ELT tools are best suited to large data volumes and can support near real-time analysis.

ELT tools tend to be quicker and more efficient than traditional ETL setups, which often demand careful up-front planning and dedicated infrastructure. With fewer systems to manage and the warehouse’s built-in security features, ELT solutions offer a more scalable and cost-friendly path.

For a quick version, check out the comparison table below:

| Feature | ETL (Extract, Transform, Load) | ELT (Extract, Load, Transform) |
| --- | --- | --- |
| Transformation Stage | Occurs before loading, on an external processing server. | Occurs after loading, inside the target system itself. |
| Speed & Performance | Relatively slower: transforming before loading adds latency. | Faster: loads raw data first, then transforms using in-warehouse compute power. |
| Data Compatibility | Works well with structured data and fixed schemas. | Works well with structured, semi-structured, and unstructured data. |
| Scalability | Harder to scale because of infrastructure and processing limits. | Built for scale: uses cloud-native warehouses for parallel processing and agility. |
| Cost & Infrastructure | Requires more setup, infrastructure, and pre-planning, which drives up cost and complexity. | Lower cost: fewer systems to manage, and transformation happens where the data lives. |
| Flexibility | Less flexible: schema must be defined up front; raw data isn’t stored. | More flexible: store raw data and transform on demand or multiple times for different use cases. |
| Security & Compliance | Security features are often custom-built outside the data warehouse. | Built-in features like granular access control and MFA simplify compliance with privacy standards. |
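To make the ordering difference in the table concrete, here is a minimal Python sketch of the ELT pattern. It is an illustration under stated assumptions, not how any specific tool works: SQLite (via Python’s built-in `sqlite3` module) stands in for a cloud warehouse, and the table names, sample rows, and the `run_elt` function are all hypothetical.

```python
import sqlite3

# Hypothetical raw records, as they might arrive from a source system.
RAW_ORDERS = [
    ("1001", " alice@example.com ", "19.99"),
    ("1002", "BOB@EXAMPLE.COM", "5.00"),
    ("1003", "carol@example.com", "not-a-number"),
]


def run_elt(conn: sqlite3.Connection) -> list:
    """Load raw rows untouched, then transform them with SQL in the database."""
    # Load step: everything lands as text, with no cleaning applied.
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, email TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", RAW_ORDERS)

    # Transform step: runs where the data lives. The raw table is kept,
    # so other transformations can be built on it later.
    conn.execute(
        """
        CREATE TABLE orders_clean AS
        SELECT CAST(order_id AS INTEGER) AS order_id,
               LOWER(TRIM(email))        AS email,
               CAST(amount AS REAL)      AS amount
        FROM raw_orders
        WHERE amount GLOB '[0-9]*.[0-9]*'  -- crude numeric check for the demo
        """
    )
    return conn.execute(
        "SELECT order_id, email, amount FROM orders_clean ORDER BY order_id"
    ).fetchall()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    for row in run_elt(connection):
        print(row)
```

In an ETL version of the same job, the cleaning would happen in application code before the first `INSERT`, and the malformed third row would never reach the warehouse. Here it stays in `raw_orders`, where it can be inspected or reprocessed later.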

What are the Key Features to Consider When Choosing an ELT Tool?

Over the years, I’ve realized that picking the right ELT tool isn’t just about its features. It’s about finding a solution that fits your data stack, scales with your needs, and doesn’t break the bank. Here are some key factors you should keep in mind before making a decision:

- Connector Availability: Does the tool support all your data sources? Pre-built connectors save time, but if you work with niche applications, you’ll need custom connector support.

- Scalability & Performance: Can it handle increasing data volumes without slowing down? I always check for incremental loading, parallel processing, and real-time capabilities.

- Ease of Use: No one wants to spend hours troubleshooting a pipeline. A tool with an intuitive UI, clear documentation, and minimal setup effort makes life easier.

- Data Transformation: Some tools focus only on extraction and loading, leaving you to handle transformations separately. If you prefer in-pipeline transformations, check for SQL-based transformations or dbt integration.

- Cost & Pricing Model: Pricing can get tricky. Some tools charge per row, others per connector or events processed. Make sure you understand the cost structure and avoid unexpected fees.

- Security & Compliance: If you handle sensitive data, the tool must meet standards like SOC 2, GDPR, and HIPAA, and offer strong encryption and access control.

- Monitoring & Alerting: I’ve learned the hard way that pipelines can break when you least expect it. Real-time alerts, logs, and dashboards help you catch and fix issues quickly.

- Customer Support & Community: No tool is perfect. When issues arise, a responsive support team, active community, and solid documentation make all the difference.
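The incremental loading mentioned under Scalability & Performance is worth a quick illustration, since it is what keeps sync times flat as tables grow. The sketch below shows one common way to do it, a high-watermark on an `updated_at` column. It is a simplified assumption of how such a sync might work, not the mechanism of any particular tool; the function name, field names, and sample rows are hypothetical.

```python
from datetime import datetime

# Hypothetical source rows; a real pipeline would query the source system.
SOURCE_ROWS = [
    {"id": 1, "updated_at": datetime(2026, 1, 1, 9, 0)},
    {"id": 2, "updated_at": datetime(2026, 1, 2, 14, 0)},
    {"id": 3, "updated_at": datetime(2026, 1, 3, 8, 30)},
]


def select_incremental(rows, last_synced_at):
    """Return the rows changed after the watermark, plus the new watermark."""
    changed = [row for row in rows if row["updated_at"] > last_synced_at]
    # If nothing changed, keep the old watermark so no rows are skipped later.
    new_watermark = max(
        (row["updated_at"] for row in changed), default=last_synced_at
    )
    return changed, new_watermark


if __name__ == "__main__":
    # First sync: the minimum datetime means "load everything".
    batch, watermark = select_incremental(SOURCE_ROWS, datetime.min)
    print(len(batch), "rows loaded, watermark is now", watermark)

    # Second sync with no new changes: nothing is reloaded.
    batch, watermark = select_incremental(SOURCE_ROWS, watermark)
    print(len(batch), "rows loaded, watermark is now", watermark)
```

When evaluating a tool, it is worth asking how it tracks this kind of state and what happens to rows that are deleted at the source, since a timestamp watermark alone cannot see deletions.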

What are the Benefits of Using ELT Tools?

Single pipeline for all your data

Whether your data is structured, semi-structured, or unstructured, ELT platforms are built to handle it, from well-structured tables to messy logs. With this versatility, businesses can integrate and analyze many data sources through one pipeline.

Instant insights

ELT tools accelerate analytics by loading raw data into your data warehouse or data lake first and running transformations after the load. This reduces pre-processing bottlenecks, so data becomes analytics-ready sooner. With a cloud-based ELT platform, transformations can run continuously and in parallel, delivering near real-time insights and enabling faster, smarter business decisions.

Centralized data source for flexible analysis

ELT tools move raw data straight into a cloud data warehouse such as Snowflake, giving you a centralized source of truth that makes analysis easy and flexible. Multiple teams can run their own transformations on the same dataset, create custom views, and reduce redundant data movement, all without duplicating the data. The result? More flexible analytics through a single platform.
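Here is a small sketch of what "multiple teams, one raw dataset" can look like in practice. It assumes SQLite as a stand-in for a warehouse like Snowflake, and the table, view names, and sample events are hypothetical. The point is only that each team defines its own view over the same raw table, so nothing is copied.

```python
import sqlite3


def build_team_views(conn: sqlite3.Connection) -> None:
    """Load one raw table, then define two team-specific views on top of it."""
    conn.execute("CREATE TABLE raw_events (user_id INTEGER, event TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO raw_events VALUES (?, ?, ?)",
        [
            (1, "purchase", 30.0),
            (1, "page_view", 0.0),
            (2, "purchase", 12.5),
            (2, "purchase", 7.5),
        ],
    )
    # Finance cares about revenue per user.
    conn.execute(
        """
        CREATE VIEW finance_revenue AS
        SELECT user_id, SUM(amount) AS revenue
        FROM raw_events
        WHERE event = 'purchase'
        GROUP BY user_id
        """
    )
    # Product cares about how often each event type occurs.
    conn.execute(
        """
        CREATE VIEW product_event_counts AS
        SELECT event, COUNT(*) AS occurrences
        FROM raw_events
        GROUP BY event
        """
    )


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    build_team_views(connection)
    print(connection.execute("SELECT * FROM finance_revenue ORDER BY user_id").fetchall())
    print(connection.execute("SELECT * FROM product_event_counts ORDER BY event").fetchall())
```

Because views are just saved queries, both teams always read the latest raw data, and adding a third team’s view does not require another copy of the table.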

Simplified, No-Code Data Stack

With ELT tools, you can simplify your architecture, since there is no need for an external staging server or custom scripts. Moreover, with built-in automation and intuitive no-code interfaces, you can set up and manage data pipelines faster, without writing a single line of code or wrestling with a complicated data stack.

Enterprise-Grade Pipeline Management at Scale

Modern ELT tools offer advanced pipeline management features such as REST APIs, CI/CD integration, and automated orchestration. With these built-in features, your teams can design, test, and deploy data pipelines as part of their regular engineering workflow. The best ELT tools ensure reliable, scalable deployments whether your business manages a large number of pipelines or updates workflows frequently.
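As a rough idea of what "testing a pipeline" in a CI/CD workflow can mean, the sketch below runs three basic checks on a loaded batch: row count, null keys, and duplicate keys. It is a generic illustration and not tied to any vendor’s API; the `check_load` function and the sample rows are hypothetical, and a real setup would pull the counts and rows from the source and the warehouse.

```python
def check_load(source_count, loaded_rows, key):
    """Return a list of readable failures; an empty list means the load passed."""
    failures = []

    # Check 1: every source row should have arrived.
    if len(loaded_rows) != source_count:
        failures.append(
            f"row count mismatch: expected {source_count}, got {len(loaded_rows)}"
        )

    # Check 2: the key column should never be empty.
    keys = [row.get(key) for row in loaded_rows]
    if any(value is None for value in keys):
        failures.append(f"null values in key column '{key}'")

    # Check 3: the key column should be unique.
    non_null = [value for value in keys if value is not None]
    if len(non_null) != len(set(non_null)):
        failures.append(f"duplicate values in key column '{key}'")

    return failures


if __name__ == "__main__":
    loaded = [{"order_id": 1}, {"order_id": 2}, {"order_id": 2}, {"order_id": None}]
    for problem in check_load(source_count=5, loaded_rows=loaded, key="order_id"):
        print("FAILED:", problem)
```

A CI job could run checks like these against a staging destination and block a deployment whenever the returned list is not empty.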

Final Thoughts

Choosing the right ELT tool is a critical decision that impacts how efficiently you move, transform, and analyze data. While there are plenty of options available, the best tool for you depends on your data needs, technical expertise, and budget. Some tools focus on simplicity, while others offer deep customization and flexibility.

If you’re looking for a scalable, no-code ELT platform that simplifies data movement without the hassle of maintenance, Hevo Data is a solid choice. With its pre-built connectors, real-time streaming, automated transformations, and enterprise-grade security, Hevo takes the complexity out of ELT so you can focus on insights instead of pipeline management.

Ready to streamline your data workflows? Try Hevo for free and experience effortless data integration today!

FAQ on ELT Tools

1. What is ETL and how does it work?

ETL stands for Extract, Transform, and Load. It is an approach to moving and preparing data for analysis. In an ETL process, data is first pulled from source systems and then cleaned, transformed, and structured using external servers before being loaded into a data warehouse.

The method works well for small, structured datasets with clearly defined schemas. However, as data volumes grow, ETL pipelines often become slower and harder to scale.

2. Which ELT tool is best for small businesses?

For small businesses or teams with limited technical resources, no-code tools like Hevo Data and Skyvia are excellent options. Both offer free plans or trials, easy setup without any coding, and sufficient connector support for the most common data sources. Hevo is particularly strong for real-time use cases, while Skyvia is ideal for straightforward cloud-to-cloud integrations.

3. Do I need a data warehouse to use an ELT tool?

In most cases, yes. ELT tools are designed to load raw data into a target data warehouse like Snowflake, BigQuery, or Redshift, where transformations happen afterward. If you don’t have a warehouse yet, most ELT platforms support multiple destination options, so you can choose one that fits your data volume and budget. Some ELT tools also support loading into data lakes and databases, offering more flexibility.