Quick Takeaway

Moving data from Google Sheets to PostgreSQL can be done in several ways, depending on your needs and technical expertise. The top four methods are:

- Method 1: Using Hevo Data – Leverage Hevo’s no-code data pipeline to automate and scale transfers from Google Sheets to PostgreSQL in real time, without writing code.

- Method 2: Using CSV – Export your Google Sheets as a CSV file and import it into PostgreSQL. This is simple and effective for one-time or small data transfers.

- Method 3: Using Google Apps Script – Automate transfers with custom Google Apps Script code. Ideal for teams comfortable with scripting who want scheduled or customized data loads.

- Method 4: Using Custom ETL Scripts – Build API-based ETL scripts to move data. This approach provides flexibility and control but requires strong coding and database expertise.

Does your organization store large amounts of data in Google Sheets? Would you prefer your data to be stored in a more secure data storage environment? If this applies to you, consider moving your data from Google Sheets to a secure relational database like Postgres. In this blog, I will present four methods to move data from Google Sheets to PostgreSQL, enabling you to choose the one that best suits your needs.


Why Should You Move Data From Google Sheets to PostgreSQL?

- Building Web Applications: PostgreSQL can be used to build various web applications. Features such as advanced indexing, JSON support, and full-text search help developers create responsive applications.

- Geospatial Applications: It is suitable for GIS applications as PostgreSQL supports geospatial data through extensions like PostGIS.

- Data Analytics: PostgreSQL can run complex queries over large volumes of data and supports OLAP (Online Analytical Processing) workflows.

- IoT (Internet of Things): Its flexibility and reliability make PostgreSQL a good fit for storing and managing the data that IoT devices generate.

Top Methods to Load Data From Google Sheets to PostgreSQL

Method 1: Using Hevo to Connect Google Sheets to PostgreSQL [Recommended Method]

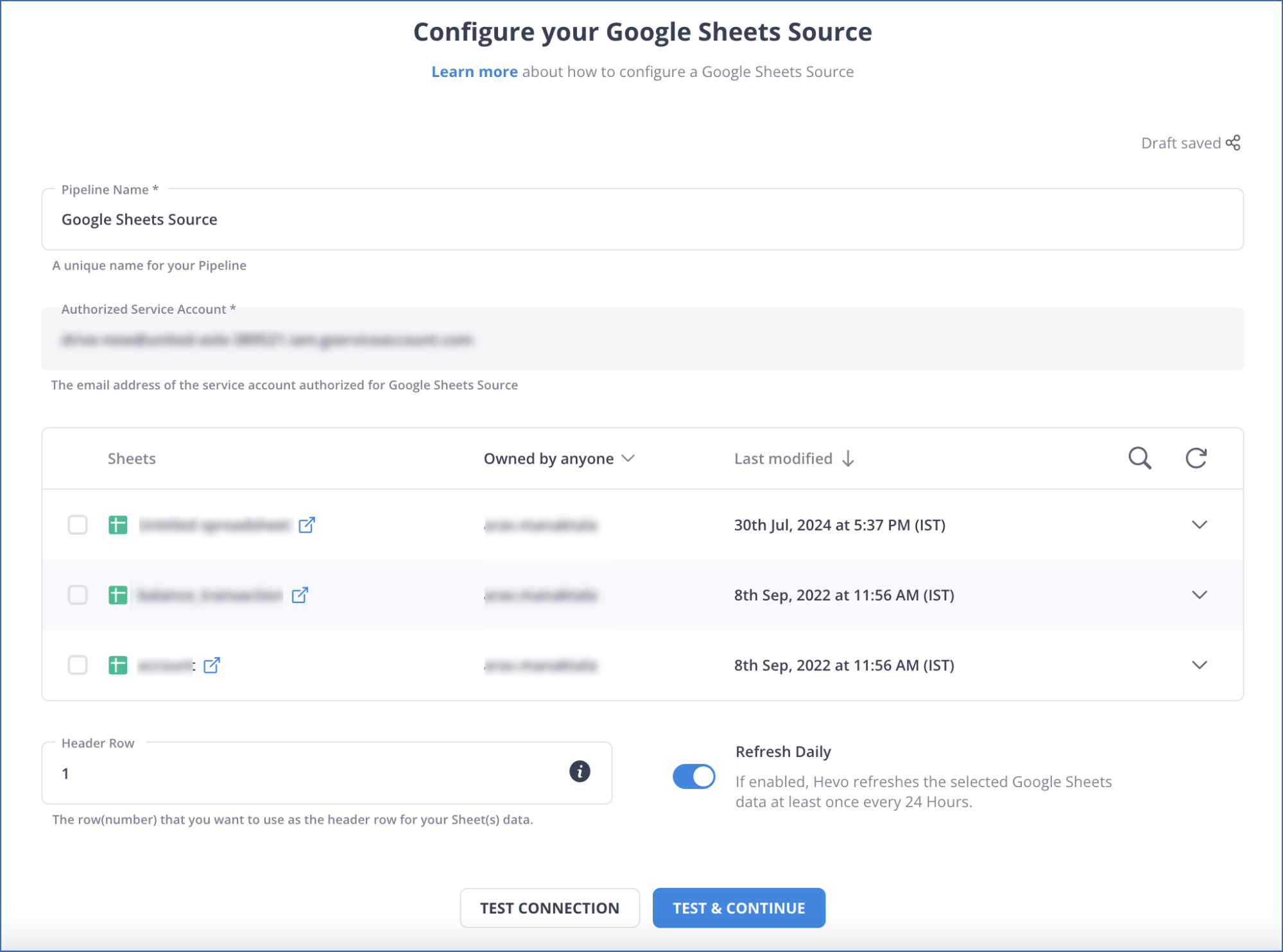

Step 1.1: Configure Google Sheets as Your Source

Log in with your Google account and allow access to your Sheets. Select the specific spreadsheet and worksheet you want to move. You can also define sync frequency—whether it’s a one-time import, scheduled at regular intervals, or continuous real-time sync.

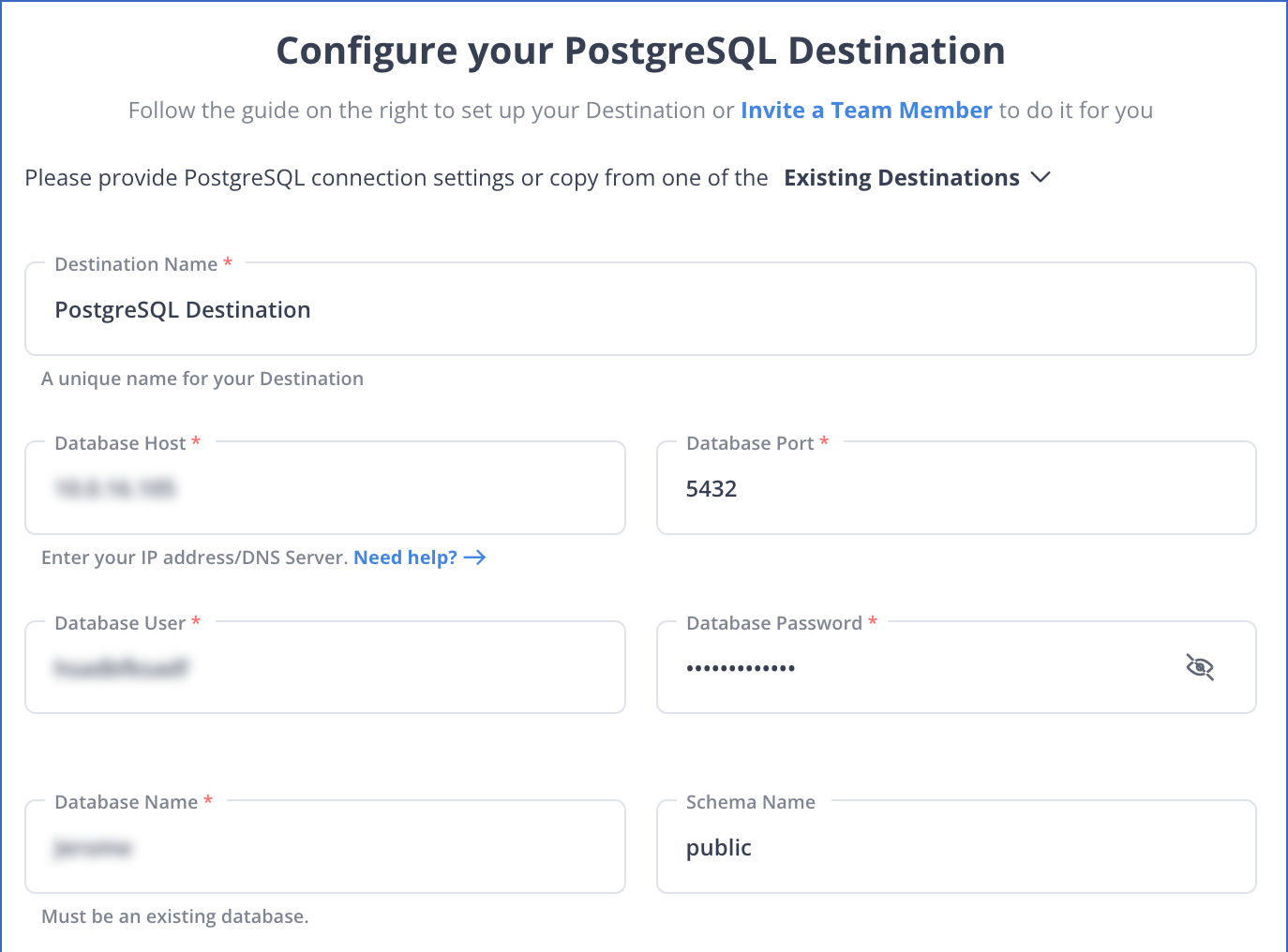

Step 1.2: Configure PostgreSQL as Your Destination

Provide your PostgreSQL connection details including host, port, database name, username, and password. Next, select the schema and table where your data should land. Hevo also supports auto-schema mapping, but you can override it to define custom mappings if needed.

Once the source and destination are configured, activate the pipeline. Hevo will handle data extraction, transformation, and loading automatically. You can monitor the migration in real time, and updates made in Google Sheets are carried over to PostgreSQL at the sync frequency you chose.

With these simple steps, you have seamlessly created a data pipeline to migrate your data from Google Sheets into PostgreSQL.

Ditch the manual process of writing long commands to connect your Google Sheets and PostgreSQL, and choose Hevo’s no-code platform to streamline your data migration.

With Hevo:

- Easily migrate data in different formats, such as CSV and JSON.

- Use 150+ connectors, such as PostgreSQL and Google Sheets (including 60+ free sources).

- Eliminate the need for manual schema mapping with the auto-mapping feature.

Experience Hevo and see why 2000+ data professionals, including customers such as Thoughtspot, Postman, and many more, have rated us 4.4/5 on G2.

Method 2: Google Sheets to PostgreSQL Using CSV Export

A simple way to transfer data from Google Sheets is by exporting it as a CSV file and then uploading it to your database. This method is useful for one-time transfers or when automated syncing isn’t required.

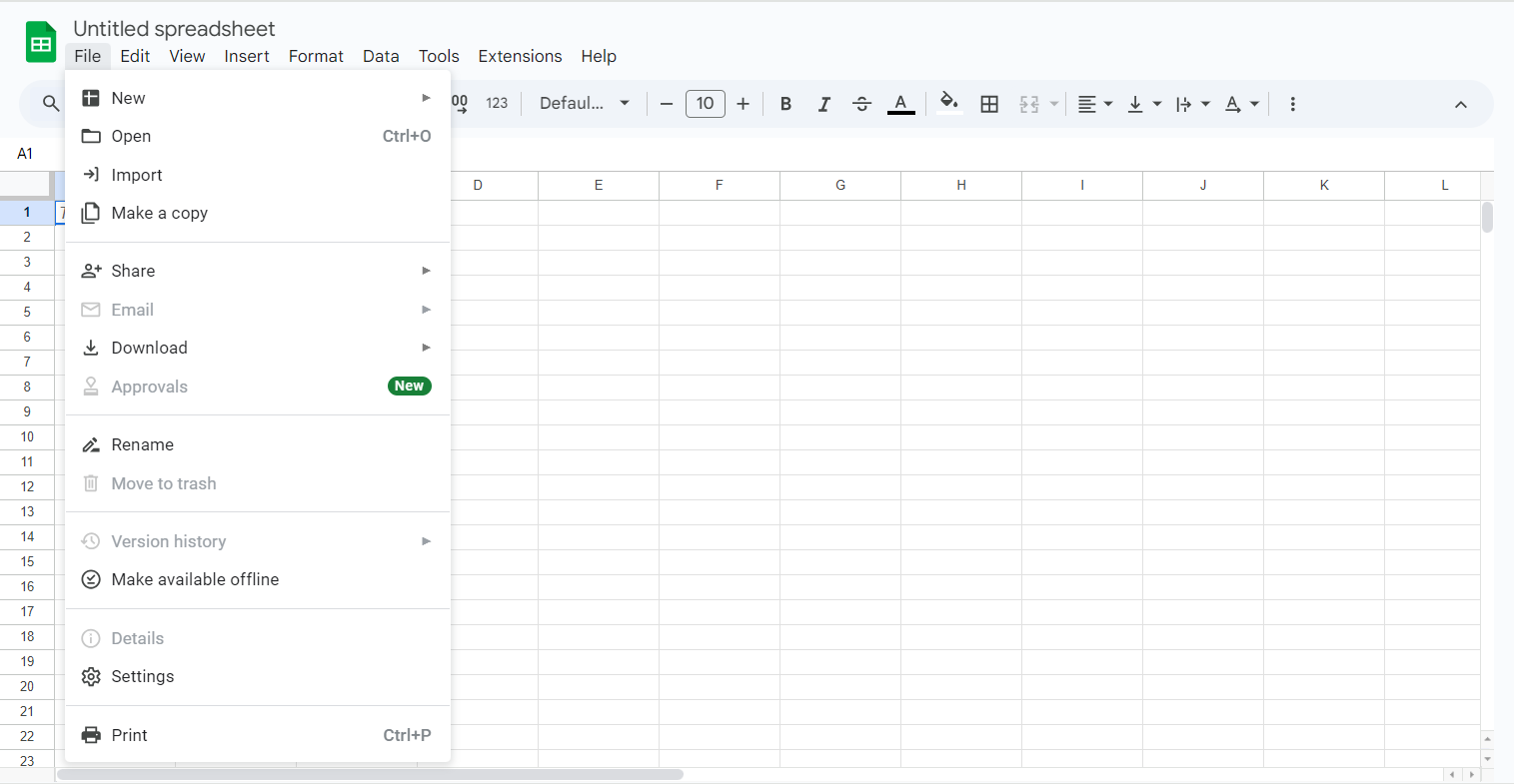

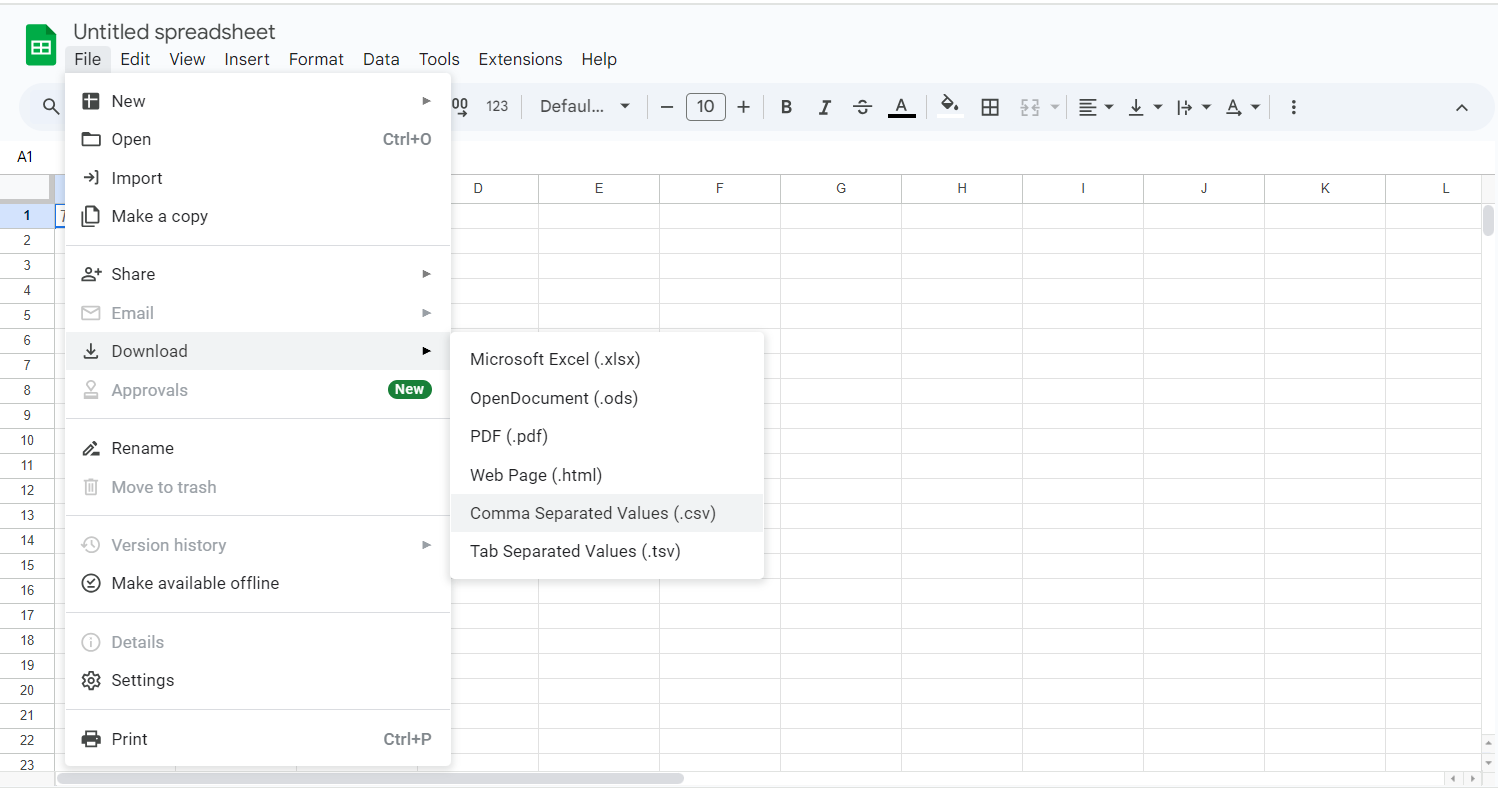

Step 2.1: Export Google Sheets Data as CSV

Open your Google Sheet and click File > Download > Comma Separated Values (.csv) to save the file locally.

Step 2.2: Prepare PostgreSQL for Upload

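Create a table in PostgreSQL that matches the structure of your CSV file: the same number of columns, in the same order, with data types that can hold the values. COPY assigns CSV fields to table columns by position unless you list the target columns explicitly.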
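For a sheet with many columns, writing the CREATE TABLE statement by hand is tedious. The sketch below, in plain JavaScript runnable with Node.js, derives one from the CSV header labels. It is an illustration under stated assumptions: the helper names are hypothetical, every column is created as TEXT, and labels are normalized to lowercase snake_case. Switch the types to NUMERIC, DATE, and so on before loading real data.

```javascript
// Hypothetical helper: turns a header label such as "Ship Date" into "ship_date".
function toColumnName(label) {
  return String(label)
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "_") // runs of spaces/punctuation become one underscore
    .replace(/^_+|_+$/g, ""); // no leading or trailing underscores
}

// Double-quote identifiers so names like "order" or "2016_sales" stay valid SQL.
function quoteIdent(name) {
  return '"' + name.replace(/"/g, '""') + '"';
}

// Assumption: every column starts out as TEXT; tighten the types afterwards.
// Note: duplicate header labels would produce duplicate column names.
function createTableFromHeader(tableName, headerLabels) {
  const columns = headerLabels.map((label, i) => {
    const name = toColumnName(label) || `column_${i + 1}`; // fallback for blank headers
    return `  ${quoteIdent(name)} TEXT`;
  });
  return `CREATE TABLE ${quoteIdent(tableName)} (\n${columns.join(",\n")}\n);`;
}

console.log(createTableFromHeader("inventory", ["Item", "Cost", "Stocked", "Ship Date"]));
```

Starting with TEXT means COPY will not reject rows over type mismatches, which makes it easier to inspect the raw data before converting columns with ALTER TABLE.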

Step 2.3: Use the PostgreSQL COPY Command

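Run the following command in PostgreSQL to upload the CSV data, replacing /path/to/your/file.csv with the actual path of your CSV file:

```sql
COPY your_table_name FROM '/path/to/your/file.csv' DELIMITER ',' CSV HEADER;
```

COPY ... FROM 'file' reads the file on the database server, so it requires superuser privileges or membership in the pg_read_server_files role. If the CSV file is on your own machine, use the psql client-side \copy command with the same options instead.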

Step 2.4: Verify Data Upload

Check your PostgreSQL table using:

```sql
SELECT * FROM your_table_name;
```

This confirms that the data has been successfully uploaded. This method is quick and efficient for occasional data transfers but requires manual effort each time new data needs to be imported.
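A stronger check than scanning SELECT * output is to compare SELECT COUNT(*) FROM your_table_name; with the number of data rows in the CSV file. Counting lines is not enough, because a cell containing a line break is exported as a quoted field spanning several lines. The hypothetical helper below (plain JavaScript, assuming standard double-quote CSV escaping) counts records instead of lines:

```javascript
// Hypothetical helper: counts CSV records. Newlines inside double-quoted fields
// (multi-line cells) belong to the field and do not end a record.
function countCsvRecords(text) {
  let records = 0;
  let inQuotes = false;
  let recordHasContent = false;
  for (const ch of text) {
    if (ch === '"') {
      // A doubled quote ("") flips the state twice, leaving it unchanged
      inQuotes = !inQuotes;
      recordHasContent = true;
    } else if (ch === "\n" && !inQuotes) {
      if (recordHasContent) records++; // blank lines are not counted
      recordHasContent = false;
    } else if (ch !== "\r") {
      recordHasContent = true;
    }
  }
  if (recordHasContent) records++; // final record without a trailing newline
  return records;
}

// Node.js usage: subtract 1 for the header row that COPY ... CSV HEADER skips
// const fs = require("fs");
// console.log(countCsvRecords(fs.readFileSync("/path/to/your/file.csv", "utf8")) - 1);
```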

Method 3: Use Google Apps Script to Load Data from Sheets into PostgreSQL

Step 3.1: Open Apps Script

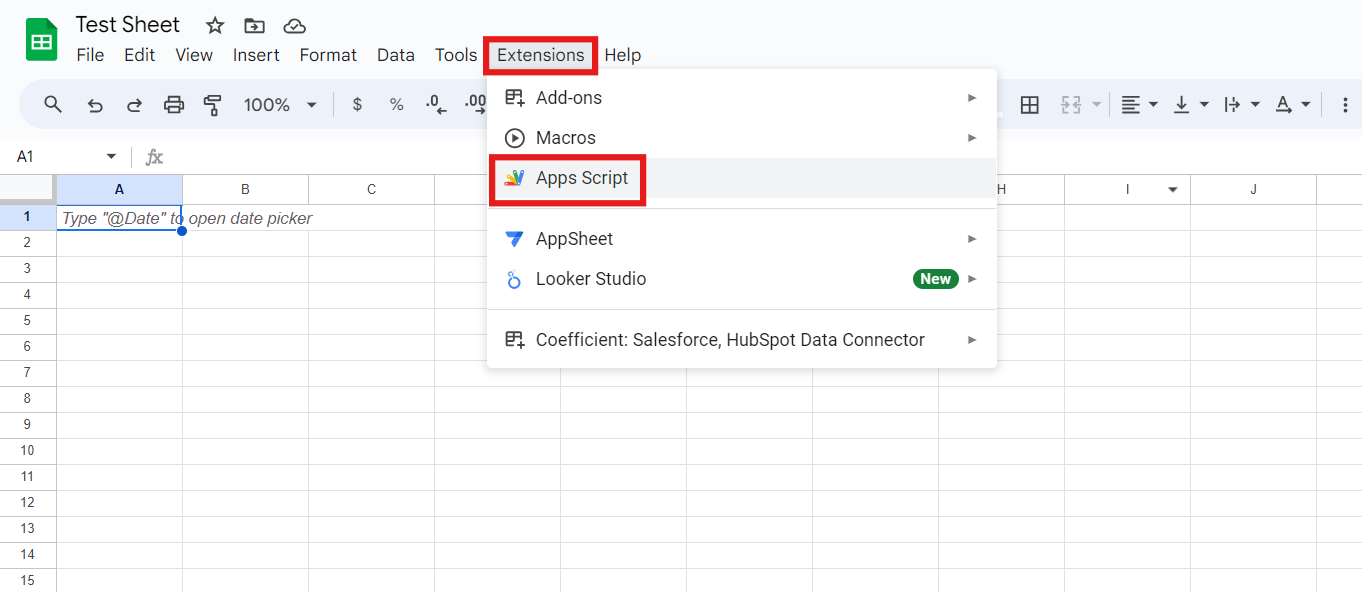

In your Google Sheet, go to Extensions > Apps Script.

Step 3.2: Write the Script

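Use the following script to send the sheet's data to your PostgreSQL database. PostgreSQL does not expose an HTTP endpoint of its own, so YOUR_POSTGRESQL_API_ENDPOINT must be the URL of a service that receives the JSON and writes it to the database, such as a small backend you host or a REST layer like PostgREST:

```javascript
// Sends every row of "Sheet1" as JSON to an HTTP service that writes to PostgreSQL.
function sendDataToPostgreSQL() {
  const sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("Sheet1");
  // 2D array of all populated cells, header row included
  const data = sheet.getDataRange().getValues();
  const options = {
    method: "post",
    contentType: "application/json",
    payload: JSON.stringify({ data: data }),
    muteHttpExceptions: true // return error responses instead of throwing
  };
  // Replace with the URL of the service that inserts the rows into PostgreSQL
  const response = UrlFetchApp.fetch("YOUR_POSTGRESQL_API_ENDPOINT", options);
  if (response.getResponseCode() >= 300) {
    throw new Error("Upload failed: " + response.getContentText());
  }
}
```

Step 3.3: Deploy as a Web App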

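Click Deploy > New deployment, set the type to Web app, and authorize the requested permissions. A deployment is only required if the script itself must be reachable over HTTP; the time-driven trigger in the next step runs sendDataToPostgreSQL without one, once you have authorized the script.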
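What the script should send depends on the service behind YOUR_POSTGRESQL_API_ENDPOINT. If that service were PostgREST, for example, a bulk insert is a POST to the table's endpoint with a JSON array of objects keyed by column name, not the raw 2D array that getValues() returns. The hypothetical helper below reshapes the values, assuming the header labels in row 1 already match the PostgreSQL column names. It uses only standard JavaScript, so it runs in the Apps Script V8 runtime as well as in Node.js; you would then send JSON.stringify(rowsToObjects(data)) as the payload instead of { data: data }.

```javascript
// Hypothetical helper: reshapes the 2D array from getValues() into an array of
// objects keyed by the header row, e.g. for a bulk insert through a REST layer.
// Assumption: the header labels in row 1 already match the PostgreSQL column names.
function rowsToObjects(values) {
  const [header, ...rows] = values;
  return rows
    // getValues() returns "" for empty cells; drop rows that are entirely empty
    .filter((row) => row.some((cell) => cell !== ""))
    .map((row) => {
      const record = {};
      header.forEach((column, i) => {
        const cell = row[i];
        // Empty cells become null; Date cells become ISO 8601 strings (UTC)
        record[column] =
          cell === "" ? null : cell instanceof Date ? cell.toISOString() : cell;
      });
      return record;
    });
}
```

Converting empty cells to null lets nullable columns receive SQL NULL rather than empty strings, which would fail for numeric or date columns anyway.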

Step 3.4: Trigger the Script

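In the Apps Script editor, open Triggers (the clock icon in the left sidebar), click Add Trigger, choose sendDataToPostgreSQL as the function to run, and set the event source to Time-driven with the interval you need. The script then transfers data at regular intervals.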
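Triggers can also be created from code with the Apps Script ScriptApp service. The sketch below is an assumption-laden example: the function name installHourlyTrigger is hypothetical and the one-hour interval is arbitrary. It removes any existing triggers for sendDataToPostgreSQL before creating a new one, so running it twice does not leave two triggers that both upload the data.

```javascript
// Hypothetical setup function: run it once from the Apps Script editor.
// Deleting old triggers for the same handler first avoids duplicate uploads.
function installHourlyTrigger() {
  const handler = "sendDataToPostgreSQL";
  ScriptApp.getProjectTriggers()
    .filter((trigger) => trigger.getHandlerFunction() === handler)
    .forEach((trigger) => ScriptApp.deleteTrigger(trigger));
  // Time-driven trigger that calls sendDataToPostgreSQL roughly every hour
  ScriptApp.newTrigger(handler).timeBased().everyHours(1).create();
}
```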

Method 4: Google APIs + ETL Scripts for Google Sheets to PostgreSQL Integration

Step 4.1: Extract Data From Google Sheets

Google Sheets provides a REST API for reading cell values. Send a GET request to the spreadsheets.values.get endpoint to extract data from a specific range:

```http
GET https://sheets.googleapis.com/v4/spreadsheets/{spreadsheetId}/values/Sheet1!A1:D5
```

This returns the cells in the range A1:D5 of Sheet1, row by row:

```json
{
  "range": "Sheet1!A1:D5",
  "majorDimension": "ROWS",
  "values": [
    ["Item", "Cost", "Stocked", "Ship Date"],
    ["Wheel", "$20.50", "4", "3/1/2016"],
    ["Door", "$15", "2", "3/15/2016"],
    ["Engine", "$100", "1", "3/20/2016"],
    ["Totals", "$135.5", "7", "3/20/2016"]
  ]
}
```

For further information on Google APIs, you can check the official Google Sheets API documentation.

This is how you can extract your data from Google Sheets using Google’s RESTful APIs.
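In practice, the request URL has to percent-encode the A1 range and carry credentials. A minimal sketch in JavaScript, assuming the hypothetical helper name buildValuesGetUrl and, for the optional key parameter, a sheet that is shared publicly (private sheets need an OAuth 2.0 access token sent in an Authorization: Bearer header instead):

```javascript
// Hypothetical helper: builds a Sheets API v4 spreadsheets.values.get URL.
// The A1 range is URL-encoded because it can contain ":" and spaces.
// Assumption: an API key only works for publicly shared sheets.
function buildValuesGetUrl(spreadsheetId, range, apiKey) {
  const base = "https://sheets.googleapis.com/v4/spreadsheets";
  let url = `${base}/${encodeURIComponent(spreadsheetId)}/values/${encodeURIComponent(range)}`;
  if (apiKey) url += `?key=${encodeURIComponent(apiKey)}`;
  return url;
}

// Example with Node.js 18+ (global fetch), inside an async function:
// const res = await fetch(buildValuesGetUrl("YOUR_SPREADSHEET_ID", "Sheet1!A1:D5", "YOUR_API_KEY"));
// const { values } = await res.json();
```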

Step 4.2: Transform Data Into the Correct Format

Before loading, clean the JSON response and make sure its columns line up with the PostgreSQL table structure. Every PostgreSQL column has a declared data type, such as INTEGER, NUMERIC, DATE, or VARCHAR, whereas the Sheets API returns each cell as a formatted string by default.

A common technique is to define a mapping from the API response to your table: each column of the values array, identified by its header in the first row, maps to a table column, and each cell is converted to a Postgres-compatible type, for example "$20.50" to a number and "3/1/2016" to a date.
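A minimal sketch of that mapping for the sample response, in JavaScript. Everything here is an assumption for illustration: the helper names and target column names are hypothetical, costs are taken to look like "$1,234.50", dates are treated as US-style M/D/YYYY, and the "Totals" row is assumed to be a summary that should not be loaded. For money columns, a NUMERIC column type avoids floating-point rounding on the database side.

```javascript
// Hypothetical transform step for the sample response. The Sheets API returns
// formatted strings, so each cell is parsed into a value that fits a typed column.

function parseCurrency(text) {
  const cleaned = String(text).replace(/[$,\s]/g, "");
  if (cleaned === "") return null; // empty cell -> SQL NULL
  if (!/^-?\d+(\.\d+)?$/.test(cleaned)) throw new Error(`Not a currency amount: ${text}`);
  return Number(cleaned);
}

function parseInteger(text) {
  const trimmed = String(text).trim();
  if (trimmed === "") return null;
  if (!/^-?\d+$/.test(trimmed)) throw new Error(`Not an integer: ${text}`);
  return Number(trimmed);
}

// "3/1/2016" -> "2016-03-01", an ISO 8601 string a PostgreSQL DATE column accepts.
// Only a basic range check; PostgreSQL itself rejects dates such as 2016-02-31.
function parseUsDate(text) {
  const trimmed = String(text).trim();
  if (trimmed === "") return null;
  const match = /^(\d{1,2})\/(\d{1,2})\/(\d{4})$/.exec(trimmed);
  if (!match) throw new Error(`Not an M/D/YYYY date: ${text}`);
  const [, month, day, year] = match;
  if (+month < 1 || +month > 12 || +day < 1 || +day > 31) {
    throw new Error(`Date out of range: ${text}`);
  }
  return `${year}-${month.padStart(2, "0")}-${day.padStart(2, "0")}`;
}

// One entry per sheet column, in sheet order; "name" is the target PostgreSQL column
const COLUMNS = [
  { name: "item", parse: (value) => String(value).trim() },
  { name: "cost", parse: parseCurrency },
  { name: "stocked", parse: parseInteger },
  { name: "ship_date", parse: parseUsDate },
];

function transformValues(values, columns = COLUMNS) {
  const [header, ...rows] = values;
  if (header.length !== columns.length) {
    throw new Error(`Expected ${columns.length} columns, got ${header.length}`);
  }
  return rows
    .filter((row) => row[0] !== "Totals") // drop the summary row
    .map((row) =>
      // The API omits trailing empty cells, so missing entries are treated as ""
      Object.fromEntries(columns.map((col, i) => [col.name, col.parse(row[i] ?? "")]))
    );
}
```

Throwing on unexpected formats, rather than silently passing strings through, makes a malformed cell fail the run early instead of surfacing later as a COPY error.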

Step 4.3: Load Data Into PostgreSQL

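Create a staging table in PostgreSQL whose columns match the transformed data:

```sql
CREATE TABLE table_name (column_1 TYPE, column_2 TYPE, ...);
```

Then write the transformed rows to a CSV file and load it using the COPY command. The file path is resolved on the database server, so use an absolute path that the server can read (or psql's \copy for a local file):

```sql
COPY table_name FROM '/path/to/file_name.csv' DELIMITER ',' CSV HEADER;
```

For further information on using the COPY command, you can check the official PostgreSQL documentation.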
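Because COPY reads a CSV file, the transformed rows must be written out with correct quoting. A hypothetical serializer in JavaScript, assuming rows are plain objects keyed by column name. It relies on the default behavior of COPY's CSV format, where an unquoted empty field is read as NULL and a quoted empty field ("") as an empty string.

```javascript
// Hypothetical helper: serializes rows as CSV for COPY ... CSV HEADER.
// Fields containing commas, quotes, or line breaks are wrapped in double quotes,
// with inner quotes doubled.
function toCsvField(value) {
  if (value === null || value === undefined) return ""; // unquoted empty -> NULL
  const text = String(value);
  if (text === "") return '""'; // quoted empty -> empty string, not NULL
  return /[",\r\n]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
}

function toCsv(columns, rows) {
  const lines = [columns.map(toCsvField).join(",")]; // header row
  for (const row of rows) {
    lines.push(columns.map((column) => toCsvField(row[column])).join(","));
  }
  return lines.join("\n") + "\n";
}

// Node.js usage: write the file where the database server can read it
// const fs = require("fs");
// fs.writeFileSync("/path/to/file_name.csv", toCsv(["item", "cost"], rows));
```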

Step 4.4: Update Data in PostgreSQL

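Because the Sheets API returns the current contents of a range rather than a log of changes, you have to re-read the sheet regularly and reconcile it with PostgreSQL. Instead of issuing separate UPDATE statements, you can use an upsert (INSERT ... ON CONFLICT ... DO UPDATE, available since PostgreSQL 9.5), which updates rows that already exist and inserts new ones in a single statement, so repeated imports do not create duplicates.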
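A sketch of building such an upsert in JavaScript, under stated assumptions: the helper names are hypothetical, the key columns are covered by a UNIQUE constraint or primary key (ON CONFLICT requires one), and the statement is executed by a client that uses $1, $2, ... placeholders, such as node-postgres with client.query(text, values). For very large sheets, call it on smaller batches of rows.

```javascript
// Hypothetical helper: builds one parameterized INSERT ... ON CONFLICT ... DO UPDATE.
function quoteIdent(name) {
  return '"' + String(name).replace(/"/g, '""') + '"';
}

function buildUpsert(table, columns, keyColumns, rows) {
  if (rows.length === 0) throw new Error("No rows to upsert");

  // One statement cannot update the same row twice, so keep only the last
  // occurrence of each key within this batch
  const byKey = new Map();
  for (const row of rows) {
    byKey.set(JSON.stringify(keyColumns.map((k) => row[k])), row);
  }

  // Values are passed as parameters, never spliced into the SQL text
  const values = [];
  const tuples = [...byKey.values()].map((row) => {
    const placeholders = columns.map((column) => {
      values.push(row[column] ?? null);
      return `$${values.length}`;
    });
    return `(${placeholders.join(", ")})`;
  });

  // EXCLUDED refers to the row that was proposed for insertion
  const updates = columns
    .filter((column) => !keyColumns.includes(column))
    .map((column) => `${quoteIdent(column)} = EXCLUDED.${quoteIdent(column)}`);

  const text =
    `INSERT INTO ${quoteIdent(table)} (${columns.map(quoteIdent).join(", ")}) ` +
    `VALUES ${tuples.join(", ")} ` +
    `ON CONFLICT (${keyColumns.map(quoteIdent).join(", ")}) ` +
    (updates.length ? `DO UPDATE SET ${updates.join(", ")}` : "DO NOTHING");
  return { text, values };
}
```

Deduplicating inside the batch matters because PostgreSQL raises an error if a single ON CONFLICT DO UPDATE statement tries to affect the same row twice, which happens when a sheet contains the same key on two rows.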

By following these steps, you can integrate Google Sheets with PostgreSQL using ETL scripts and Google APIs.

Limitations of Migrating Data Using Google APIs

- Time-Consuming: You must write a lot of code manually if using this method. This takes up a lot of time and may not be very helpful in organizations that enforce strict deadlines.

- Requires Constant Maintenance: If a run fails because of a connectivity issue or a Google Sheets API error, the pipeline can silently miss or duplicate data, so it needs constant monitoring and retry logic to keep the data accurate.

- Difficulty With Data Transformations: Every transformation, such as a currency conversion, has to be written and maintained by hand.

- Difficulties With Real-Time Data: Each run captures the data at a single point in time. To keep PostgreSQL current, you have to write additional code and schedule it with cron jobs, which gives you periodic rather than true real-time updates.

When using the Google Sheets API, keep the following in mind:

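- Rate Limitations: The Google Sheets API enforces per-minute request quotas, both per project and per user, so a script that makes many requests needs to back off and retry when it receives HTTP 429 errors.

- Authentication: An API key is enough only for publicly shared sheets; private spreadsheets require OAuth 2.0 credentials, for example a user's consent or a service account that the sheet has been shared with.

- Paging and Dealing With Enormous Amounts of Data: The values endpoints return the whole requested range in a single response rather than paginating, so for large sheets it is safer to request the data in smaller row ranges.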
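Splitting a large sheet into row ranges is straightforward to sketch. The JavaScript helper below is hypothetical: it assumes the data starts at firstRow, that lastRow is known (for example from the sheet's gridProperties.rowCount returned by spreadsheets.get), and that 1,000 rows per request is a reasonable chunk size. Each resulting range can be fetched with spreadsheets.values.get, or several at once with spreadsheets.values.batchGet.

```javascript
// Hypothetical helper: splits a sheet into A1 row-range chunks.
function chunkRanges(sheetName, firstColumn, lastColumn, firstRow, lastRow, chunkSize = 1000) {
  if (chunkSize < 1) throw new Error("chunkSize must be at least 1");
  // Quote the sheet name (doubling any apostrophes) so names with spaces stay valid A1 notation
  const sheet = `'${sheetName.replace(/'/g, "''")}'`;
  const ranges = [];
  for (let start = firstRow; start <= lastRow; start += chunkSize) {
    const end = Math.min(start + chunkSize - 1, lastRow); // last chunk may be shorter
    ranges.push(`${sheet}!${firstColumn}${start}:${lastColumn}${end}`);
  }
  return ranges;
}

console.log(chunkRanges("Sheet1", "A", "D", 1, 2500));
```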

Conclusion

You have learned about four methods you can use to connect Google Sheets with PostgreSQL. The manual methods require configuration and ongoing maintenance, so you may want to check out Hevo, which does the hard work for you in a simple, intuitive process so that you can focus on what matters: the analysis of your data.

You can now transfer data from sources like Google Sheets to your target destination for free using Hevo!

Want to try Hevo? Sign up for a 14-day free trial and experience the feature-rich Hevo suite firsthand. Have a look at the unbeatable Hevo pricing, which will help you choose the right plan for you.

FAQs

1. How do I convert Google Sheets to a database?

To convert Google Sheets to a database, export the data as a CSV file from the File menu in Google Sheets, use a database management tool to create a table whose schema matches the structure of that data, and then import the CSV file into the table.

2. Can we use Google Sheets as a database?

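Not as a replacement for a traditional database. Google Sheets lacks features such as enforced data types, constraints, and transactions, although it can work as a lightweight data store for small projects.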

3. Can SQL pull data from Google Sheets?

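Not directly. A SQL database such as PostgreSQL cannot read a Google Sheet on its own, but you can retrieve the data indirectly by loading it into the database first, for example with one of the methods described above.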

4. Can Google Sheets query a database?

Yes, with some setup. Google Apps Script's JDBC service can connect to external databases, and add-ons can pull query results into a sheet.

5. Can I connect Google Sheets directly to PostgreSQL without exporting CSV files?

Yes, you can. While CSV export is a quick method, you can also use Google Apps Script, APIs with ETL scripts, or no-code platforms like Hevo for direct integration.

6. What are the challenges of using Google APIs or custom ETL scripts?

They require constant maintenance, handling rate limits, managing authentication, and writing transformation logic manually, which can be time-consuming.

7. Why should I move my data from Google Sheets to PostgreSQL?

PostgreSQL offers advanced querying, scalability, geospatial support, and better security compared to Sheets, making it ideal for analytics, web apps, and enterprise data management.