Migrating data from PostgreSQL on Google Cloud SQL to SQL Server is a strategic move that addresses various business needs. Firstly, this migration enables seamless integration within the Microsoft ecosystem, facilitating efficient data sharing and management across platforms.

Secondly, it unlocks scalability, so your data infrastructure can expand with growing demand. Beyond these benefits, organizations gain access to SQL Server’s security features, disaster recovery mechanisms, and high availability options. The migration not only streamlines your data operations but also helps you perform in-depth analysis.

In this article, you’ll learn two popular ways to replicate data from Google Cloud PostgreSQL to SQL Server.


Overview of PostgreSQL on Google Cloud SQL

PostgreSQL on Google Cloud SQL (Cloud SQL for PostgreSQL) is a fully managed database service. It enables you to set up, run, and administer your PostgreSQL relational databases on the Google Cloud Platform.

Key Features of PostgreSQL on Google Cloud SQL

- Automation: GCP automates administrative database tasks such as storage management, backup or redundancy management, capacity management, or providing data access.

- Less Maintenance Cost: PostgreSQL on Google Cloud SQL automates most administrative tasks related to maintenance, which reduces the time and resources your team spends on them and leads to lower overall costs.

- Security: GCP provides powerful security features, such as encryption at rest and in transit, identity and access management (IAM), and compliance certifications, to protect sensitive data.

Method 1: Using Hevo Data to Integrate PostgreSQL on Google Cloud SQL to SQL Server

Hevo Data, an automated data pipeline, provides a hassle-free way to connect PostgreSQL on Google Cloud SQL to SQL Server within minutes through an easy-to-use no-code interface. Hevo is fully managed: it automates loading data from PostgreSQL on Google Cloud SQL to SQL Server, enriching it, and transforming it into an analysis-ready form, without you having to write a single line of code.

Method 2: Using CSV file to Integrate Data from PostgreSQL on Google Cloud SQL to SQL Server

This method is time-consuming and somewhat tedious to implement. You will have to write custom code for two processes: exporting data from PostgreSQL on Google Cloud SQL and importing it into SQL Server. This method is suitable for users with a technical background.

Overview of SQL Server

SQL Server is a database system that stores structured, semi-structured, and unstructured data. It supports languages like Python and helps you extract data from different sources, sync it, and maintain consistency. The database provides controlled access to the data, making SQL Server more secure and ensuring regulatory compliance.

Key Features of SQL Server

Examine the following key features that have contributed to SQL Server’s huge popularity:

- Database Engine: The Database Engine speeds up transaction processing while also handling data storage and security.

- SQL Server Agent: It primarily serves as a job scheduler. Jobs can run on a schedule, in response to an event, or on demand.

- SQL Server Browser: The SQL Server Browser service accepts incoming connection requests and directs them to the appropriate SQL Server instance.

- Full-Text Search: As the name implies, users can run full-text queries against character-based data in SQL Server tables.

- SSRS (SQL Server Reporting Services): It provides tools for creating, publishing, and managing reports to support decision-making.

Method 1: Using Hevo Data to Integrate PostgreSQL on Google Cloud SQL to SQL Server

Step 1.1: Configure Google Cloud PostgreSQL as a Source

Step 1.2: Configure SQL Server as a Destination

That’s it! With these two simple steps, you’ve completed the PostgreSQL on Google Cloud SQL to SQL Server migration process.

Key Features of Hevo Data

- Pre-built Connectors: With its 150+ pre-built connectors, you can set up data pipelines in just two steps without manual interventions. This feature makes Hevo accessible to a wide range of technical as well as non-technical users.

- Schema Mapping: Hevo automates schema mapping by detecting changes in the source schema and applying them in SQL Server. This keeps your PostgreSQL on Google Cloud SQL pipeline in sync without manual intervention.

- Customer Support: In case you feel stuck while creating a pipeline, the Hevo team is available to provide support, ensuring a smooth data integration experience. These features make Hevo the preferable choice, empowering worry-free, data-driven decision-making.

Method 2: Using CSV file to Integrate Data from PostgreSQL on Google Cloud SQL to SQL Server

To connect PostgreSQL on Google Cloud SQL to SQL Server using CSV files, you can follow the steps given below.

Prerequisites

- Access to Google Cloud SQL: You need access to the Google Cloud SQL instance containing the PostgreSQL database. Ensure that you have the permission to export data from the PostgreSQL database.

- SQL Server: Necessary permissions and access to the SQL Server instance where you want to import data.

Now, let’s walk through the detailed steps to migrate data.

Step 2.1: Export Data from Google Cloud SQL PostgreSQL

There are various ways to export data from Google Cloud SQL PostgreSQL into CSV files. Some of the common ways are using the COPY command, the Google Cloud Console, and the pg_dump command.

Using the COPY Command

- You can use the COPY TO command in PostgreSQL to copy data from a table to an external file, including CSV.

- Connect to your PostgreSQL database using CLI:

psql -h <google-sql-host> -U <username> -d <database-name>

- Now, export data from the Google Cloud SQL PostgreSQL table into a CSV file. Because Cloud SQL does not give you access to the server’s file system, use psql’s \copy meta-command, which runs COPY TO but writes the file on your local machine:

\copy table_name TO '/path_to_your_directory/file.csv' WITH CSV HEADER

Replace the placeholders with your PostgreSQL database details and specify the name and path where you want to save the CSV file.

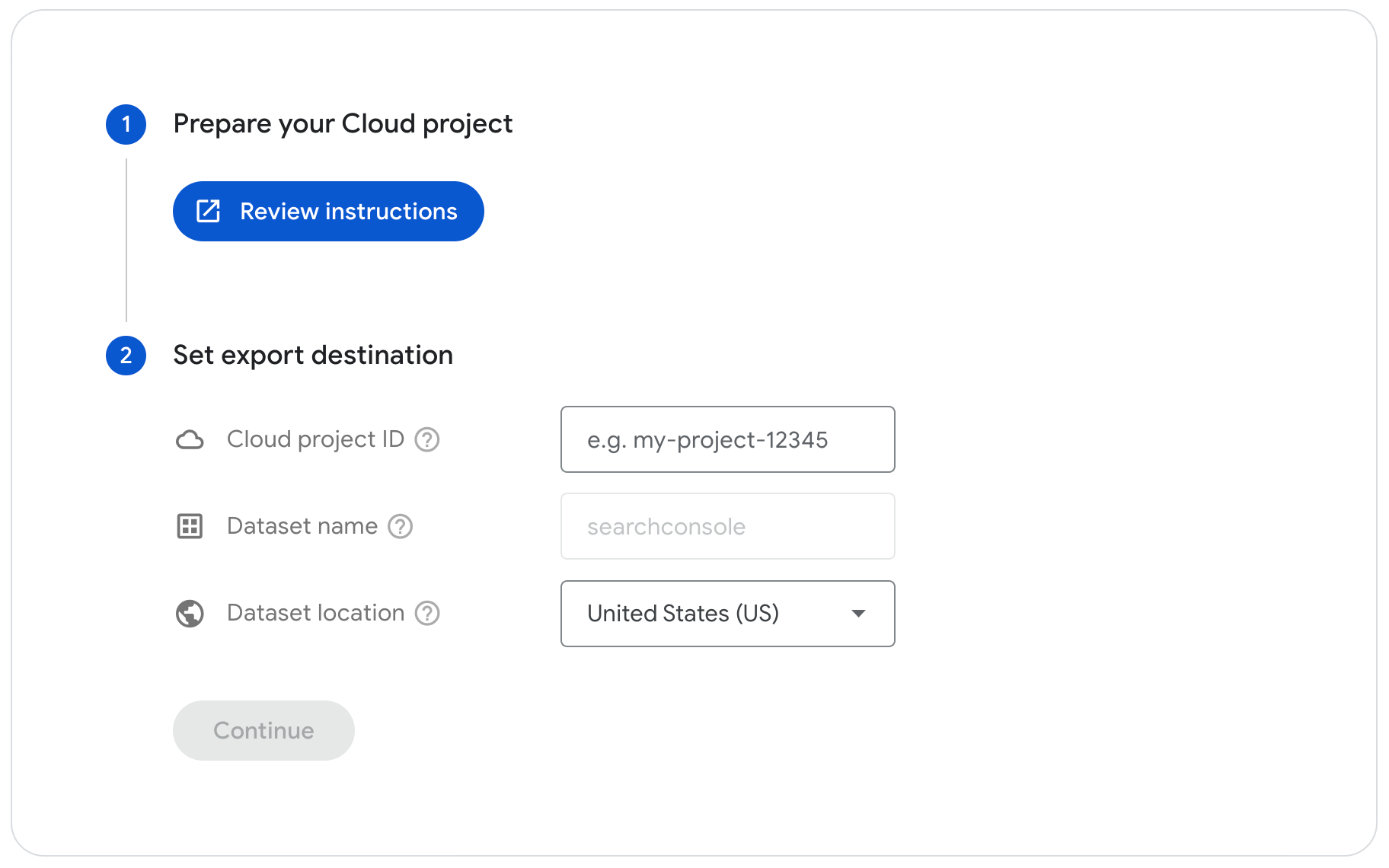

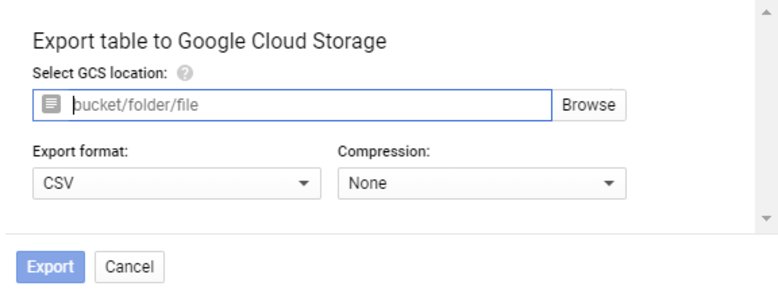
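If you need to export several tables, repeating the command by hand gets tedious. The following is a minimal Python sketch that only builds the psql command line for each table, assuming psql is installed locally. The function name `build_copy_command` and the table names `employees` and `departments` are hypothetical, and the host, user, and path values are the same placeholders used above.

```python
import shlex


def build_copy_command(host, user, database, table, out_path):
    """Return the argv list for a psql call that exports one table to CSV."""
    # \copy writes the CSV on the client machine, not on the database server.
    copy_stmt = f"\\copy {table} TO '{out_path}' WITH CSV HEADER"
    return ["psql", "-h", host, "-U", user, "-d", database, "-c", copy_stmt]


if __name__ == "__main__":
    # Hypothetical table names; replace them with your own.
    for table in ["employees", "departments"]:
        argv = build_copy_command(
            "<google-sql-host>",
            "<username>",
            "<database-name>",
            table,
            f"/path_to_your_directory/{table}.csv",
        )
        # Print the shell-quoted command so it can be reviewed before running.
        print(shlex.join(argv))
```

To execute a command instead of printing it, you could pass the list to `subprocess.run(argv, check=True)`.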

Using Google Cloud Console

- Open the Google Cloud Console and select the Google Cloud project where your Google Cloud SQL instance is located.

- Select your PostgreSQL instance. In the Instance details, click on the name of the PostgreSQL database you want to export data from. Click on the Export tab.

- Choose Offload export to enable concurrent operations while the export is in progress.

- In the Cloud Storage export location section, you need to add the name of the bucket, folder, and file for your export.

- Alternatively, you can choose the Browse option to locate your bucket, folder, or file.

- If you select the Browse option, add a Cloud Storage bucket or folder to export in the Location section.

- Within the Name field, enter a path for the CSV file or choose an already existing file from the options available in the Location section.

- Click Select.

- Select CSV in the Format section.

- From the Database for export section, select the database name from the drop-down menu.

- Now, enter a SQL query to specify the PostgreSQL table you want to export. For example, a query that exports every row from a specific table would look like:

SELECT * FROM Company.employees;

Here, employees is the name of the table and Company is the schema that contains it.

- Finally, click on the Export button to initiate the transfer process.

- Now, open the Cloud Storage section where your CSV files are stored and download them to your local machine.
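The same export can also be started from the command line with `gcloud sql export csv`. The sketch below only assembles the gcloud arguments in Python so that they can be reviewed or scripted; it assumes the gcloud CLI is installed and authenticated. The function names and the instance, bucket, and file names are hypothetical.

```python
import shlex


def build_gcloud_export(instance, bucket_uri, database, query, offload=True):
    """Return the argv list for a Cloud SQL CSV export to Cloud Storage."""
    argv = [
        "gcloud", "sql", "export", "csv", instance, bucket_uri,
        f"--database={database}",
        f"--query={query}",
    ]
    if offload:
        # Mirrors the "Offload export" option in the console.
        argv.append("--offload")
    return argv


def build_download(bucket_uri, local_path):
    """Return the argv list that copies the exported file to the local machine."""
    return ["gcloud", "storage", "cp", bucket_uri, local_path]


if __name__ == "__main__":
    # Hypothetical instance, bucket, and file names.
    uri = "gs://my-export-bucket/employees.csv"
    export = build_gcloud_export(
        "my-postgres-instance", uri, "<database-name>",
        "SELECT * FROM Company.employees",
    )
    print(shlex.join(export))
    print(shlex.join(build_download(uri, "./employees.csv")))
```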

Using the pg_dump Command

- You can use the pg_dump utility to create an SQL dump of the whole database or of specific tables. With the options shown below, the output is a plain-text SQL file that contains the table definition and the data as INSERT statements.

- Open a command prompt on your local machine or a server with access to your Google Cloud SQL instance.

pg_dump -h <google-sql-host> -U <username> -d <database-name> -t <table-name> --column-inserts --file=/path_to_your_directory/data.sql

Replace <google-sql-host> with the hostname or IP address of your Google Cloud SQL instance, and <username>, <database-name>, and <table-name> with the respective PostgreSQL database details. Specify the path and name of the output file where the data will be saved.

- The output file will be in SQL format. You need to convert it into a CSV file using a Bash or Python script.
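As an illustration of that conversion, here is a minimal Python sketch that turns the single-line INSERT statements written by `--column-inserts` into CSV text. It is deliberately simple and rests on several assumptions: each INSERT sits on one line, string values use standard single quotes with `''` as the escape, and NULL is written out as an empty field. The function names are hypothetical, and values that contain line breaks are not handled.

```python
import csv
import io
import re

# Matches lines such as: INSERT INTO public.t (a, b) VALUES (1, 'x');
INSERT_RE = re.compile(r"^INSERT INTO \S+ \((?P<cols>.*?)\) VALUES \((?P<vals>.*)\);$")


def finish(buf, quoted):
    """Turn the collected characters of one value into a CSV field."""
    text = "".join(buf)
    if quoted:
        return text
    text = text.strip()
    return "" if text == "NULL" else text


def split_values(vals):
    """Split a VALUES list on commas that sit outside single-quoted strings."""
    out, buf = [], []
    in_str = quoted = False
    i = 0
    while i < len(vals):
        ch = vals[i]
        if in_str:
            if ch == "'" and vals[i + 1:i + 2] == "'":
                buf.append("'")  # '' inside a string is an escaped quote
                i += 1
            elif ch == "'":
                in_str = False
            else:
                buf.append(ch)
        elif ch == "'":
            in_str, quoted, buf = True, True, []
        elif ch == ",":
            out.append(finish(buf, quoted))
            buf, quoted = [], False
        elif not quoted:
            buf.append(ch)
        i += 1
    out.append(finish(buf, quoted))
    return out


def dump_to_csv(sql_text):
    """Convert the INSERT lines of a pg_dump --column-inserts file to CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    header_written = False
    for line in sql_text.splitlines():
        match = INSERT_RE.match(line.strip())
        if not match:
            continue  # skip SET statements, comments, and DDL
        if not header_written:
            writer.writerow([c.strip() for c in match["cols"].split(",")])
            header_written = True
        writer.writerow(split_values(match["vals"]))
    return buffer.getvalue()


if __name__ == "__main__":
    sample = (
        "SET client_encoding = 'UTF8';\n"
        "INSERT INTO public.employees (id, name, active) VALUES (1, 'Ann O''Neil, Jr.', true);\n"
        "INSERT INTO public.employees (id, name, active) VALUES (2, NULL, false);\n"
    )
    print(dump_to_csv(sample), end="")
```

For anything beyond simple tables, exporting directly to CSV with one of the other two methods is likely to be more reliable than parsing SQL.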

By using any of these methods, you’ll have Google Cloud SQL PostgreSQL data in CSV file format on your local machine.

Step 2.2: Clean the CSV Files

After downloading the CSV files, perform data mapping and transformation, taking into account the differences between PostgreSQL and SQL Server data types and schema structure. You also need to check for duplicate values, handle missing or null values, and review the data for accuracy.
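To make the type differences concrete, the sketch below maps a handful of PostgreSQL types to SQL Server types that are commonly used as their counterparts and builds a CREATE TABLE statement for the target table. The mapping is an assumption for illustration, not an exhaustive or official list, so review precision, length, and time zone handling for your own columns. The names `PG_TO_MSSQL` and `create_table_sql` are hypothetical.

```python
# Commonly used counterparts; an illustrative assumption, not a complete mapping.
PG_TO_MSSQL = {
    "boolean": "BIT",
    "smallint": "SMALLINT",
    "integer": "INT",
    "bigint": "BIGINT",
    "double precision": "FLOAT",
    "text": "NVARCHAR(MAX)",
    "date": "DATE",
    "timestamp": "DATETIME2",
    "timestamptz": "DATETIMEOFFSET",
    "uuid": "UNIQUEIDENTIFIER",
    "bytea": "VARBINARY(MAX)",
    "jsonb": "NVARCHAR(MAX)",
}


def create_table_sql(table, columns):
    """Build a T-SQL CREATE TABLE statement from (name, postgres_type) pairs."""
    lines = []
    for name, pg_type in columns:
        # Fall back to a wide string column for types the mapping does not cover.
        mssql_type = PG_TO_MSSQL.get(pg_type.lower(), "NVARCHAR(MAX)")
        lines.append(f"    [{name}] {mssql_type}")
    return f"CREATE TABLE {table} (\n" + ",\n".join(lines) + "\n);"


if __name__ == "__main__":
    # Hypothetical target table and columns.
    print(create_table_sql(
        "dbo.employees",
        [("id", "integer"), ("name", "text"), ("active", "boolean"), ("hired_at", "timestamp")],
    ))
```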

Before uploading to SQL Server, ensure that the data is in the correct format.
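A small script can take care of part of this cleanup. The sketch below, using only the Python standard library, drops exact duplicate rows, rejects rows whose field count does not match the header, and rewrites PostgreSQL's `t`/`f` boolean output as `1`/`0`, which is the form typically loaded into a SQL Server BIT column. The function name `clean_csv` and the sample column names are hypothetical, and your data may need additional rules.

```python
import csv
import io


def clean_csv(text, bool_columns=()):
    """Drop exact duplicate rows and rewrite PostgreSQL t/f booleans as 1/0."""
    reader = csv.reader(io.StringIO(text))
    header = next(reader)
    bool_idx = [header.index(col) for col in bool_columns]

    seen = set()
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(header)

    for row in reader:
        if not row:
            continue  # ignore blank lines
        if len(row) != len(header):
            raise ValueError(f"expected {len(header)} fields, got {len(row)}: {row}")
        for i in bool_idx:
            row[i] = {"t": "1", "f": "0"}.get(row[i], row[i])
        key = tuple(row)
        if key in seen:
            continue  # exact duplicate of an earlier row
        seen.add(key)
        writer.writerow(row)
    return out.getvalue()


if __name__ == "__main__":
    raw = "id,name,active\n1,Ann,t\n1,Ann,t\n2,Bob,f\n"
    print(clean_csv(raw, bool_columns=["active"]), end="")
```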

Step 2.3: Import Data into SQL Server

You can use SQL Server Management Studio (SSMS) or a T-SQL command to import CSV file data into SQL Server.
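For the T-SQL route, one option is the BULK INSERT statement. The sketch below builds such a statement in Python so that it can be generated per table; it assumes the CSV has a header row, uses commas as the delimiter and line feeds as the row terminator, and sits at a path the SQL Server service can read. The `FORMAT = 'CSV'` option requires SQL Server 2017 or later. The function name, table name, and file path are hypothetical.

```python
def bulk_insert_sql(table, csv_path, first_row=2):
    """Return a BULK INSERT statement for a comma-separated file with a header row."""
    # Escape single quotes so the path is a valid T-SQL string literal.
    safe_path = csv_path.replace("'", "''")
    return (
        f"BULK INSERT {table}\n"
        f"FROM '{safe_path}'\n"
        f"WITH (FORMAT = 'CSV', FIRSTROW = {first_row}, "
        f"FIELDTERMINATOR = ',', ROWTERMINATOR = '0x0a');"
    )


if __name__ == "__main__":
    # Hypothetical table and path; the target table must already exist.
    print(bulk_insert_sql("dbo.employees", r"C:\data\employees.csv"))
```

You can run the generated statement in an SSMS query window against the target database.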

Below are the SSMS steps for importing data from a CSV file into SQL Server:

- Open the SSMS and connect to your SQL Server instance. Create one or use an existing target table in your SQL Server database to hold CSV file data.

- Select your database from the Object Explorer.

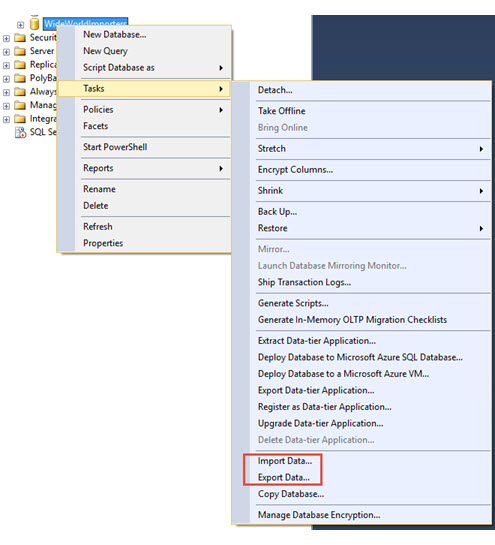

- Right-click on the target database > Tasks > Import Data. In the SQL Server Import and Export Wizard window, click Next to start the process.

- In the Data Source section, select the source of your data. Choose Flat File Source, as your data is in a CSV format. Click Browse to specify the path of your CSV file and configure options like delimiter and text qualifier as needed. Click Next.

- In the Destination section, select the SQL Server database and table where you want to import the data. Provide the necessary details and click Next.

- Click Edit Mappings and check that the columns from the source file match the columns of the target table.

- In the Run Package section, review your settings and click Next.

- Wait for SSMS to complete the import. You’ll see a summary of the transfer process. Finally, click on Finish.

These three steps complete the data migration process from PostgreSQL on Google Cloud SQL to SQL Server.

Advantages of Manually Connecting using CSV Files

- Minimal Dependencies: Working with CSV files often requires minimal software or infrastructure dependencies, making it accessible even in resource-constrained environments. This minimal dependency contributes to lower complexity and resource demands during data migration.

- One-Time Transfer: This approach is specifically suitable where you only need to perform an infrequent data transfer from Google Cloud SQL for PostgreSQL to SQL Server. It simplifies the process and reduces the complexity of building a robust data pipeline.

Drawbacks of Manually Connecting using CSV Files

- Manual Process: Exporting and importing CSV files is a time-consuming process as you need to repeat the same steps for each table. This can be a burdensome task for larger datasets.

- Lack of Incremental Updates: Using CSV files does not inherently support incremental updates. To keep data up-to-date in SQL Server, you would need to repeat the export and import process for the entire dataset.

What can you Achieve from PostgreSQL on Google Cloud SQL to SQL Server Integration?

Centralizing data in SQL Server allows you to access comprehensive customer data and get answers to the following questions:

- Are there any specific geographic regions with exceptional sales performance?

- What are the trends that can help identify sales conversion opportunities?

- Who are the most prominent customers, and how frequently do they purchase?

- What are the conversion rates for leads generated by the marketing team?

You can also learn more about:

- BigQuery to SQL Server

- SQL Server to Azure Synapse

- PostgreSQL to SQL Server

- QuickBooks to MS SQL Server

Summary

- Connecting PostgreSQL on Google Cloud SQL to SQL Server elevates your capability to analyze data and extract actionable insights quickly.

- The CSV-based approach is suitable for infrequent transfers from PostgreSQL on Google Cloud SQL to SQL Server.

- However, it requires manual intervention and offers limited real-time updates. This can stop you from achieving timely insights, as your SQL Server data won’t be up-to-date.

- On the other hand, Hevo simplifies the data replication process from PostgreSQL on Google Cloud SQL to SQL Server with two straightforward steps.

- It offers 150+ in-built connectors, ensures real-time data synchronization, and automates schema mapping. These functionalities make Hevo an efficient solution for streamlining various data integration needs.

Sign up for a 14-day free trial and simplify your data integration process. Check out the pricing details to understand which plan fulfills all your business needs.

Frequently Asked Questions

1. How to transfer data from PostgreSQL to SQL Server?

You can transfer data from PostgreSQL to SQL Server using various methods, such as database migration tools or a data pipeline. An easy way to automate this process is by using Hevo, which offers a no-code solution to move data between PostgreSQL and SQL Server seamlessly without manual intervention.

2. How to connect to GCP Cloud SQL PostgreSQL?

To connect to GCP Cloud SQL PostgreSQL, use tools like psql or a SQL client with the connection details shown for the Cloud SQL instance. You’ll need details such as the instance IP, username, and password. Alternatively, you can set up a secure connection using the Cloud SQL Auth Proxy.

3. What is the difference between GCP Cloud SQL and PostgreSQL?

GCP Cloud SQL is a managed database service provided by Google Cloud that supports PostgreSQL, MySQL, and SQL Server. It offers automatic backups, scaling, and built-in security, while standard PostgreSQL is a self-managed, open-source database solution that requires manual configuration and maintenance.